Terarium help

| Author(s) | Uncharted Software Inc. |

| Copyright | Copyright © 2025 Uncharted Software Inc. |

Table of Contents

Home ↵

About Terarium¶

Terarium is a modeling and simulation workbench designed to help you assess and contribute to the scientific landscape. New to the workbench? Check out these topics:

Regardless of your level of programming experience, Terarium allows you to:

- Extract models from academic literature

- Parameterize and calibrate them

- Simulate a variety of scenarios

- Analyze the results

Need more help? Check out these topics:

Ended: Home

Get started ↵

Using Terarium¶

Terarium supports your scientific decision making by helping you organize, refine, and communicate the results of your modeling processes. You can:

- Gather existing knowledge.

- Break down complex scientific operations into separate, easy-to-configure tasks.

- Create reproducible visual representations of how your resources, processes, and results chain together.

How Terarium represents your modeling work¶

The following concepts describe how Terarium organizes your modeling work to help you manage, visualize, and run scientific processes.

-

Project

A workspace for storing modeling resources, organizing workflows, and recording and sharing results.

-

Resource

Scientific knowledge—models, datasets, or documents (PDF)—used to build workflows and extract insights.

-

Workflow

A visual canvas for building and capturing your modeling processes. Workflows show how resources move between different operators to produce results.

-

Operator

A part of a workflow that performs tasks like data transformation or simulation.

Creating a project¶

Create a project for a problem you want to model and then:

- Upload existing models, datasets, and documents to build a library of relevant knowledge.

- Visually construct different modeling workflows to transform the resources and test different models.

Create a project

- On the Home page, do one of the following actions:

- To start from scratch, click New project.

- To find a project to copy, search My projects or Public projects and then click > Copy.

- To upload a project, click Upload project and drag in or browse to the location of your .project file.

- In the new project, edit the overview to capture your goals and save results over time.

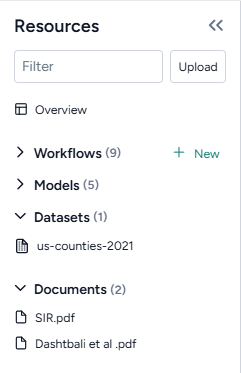

Gathering resources¶

Use the Resources panel to upload and access your models, datasets, and documents.

Note

You can also add resources by:

- Copying them from other projects.

- Creating them using Terarium's library of operators.

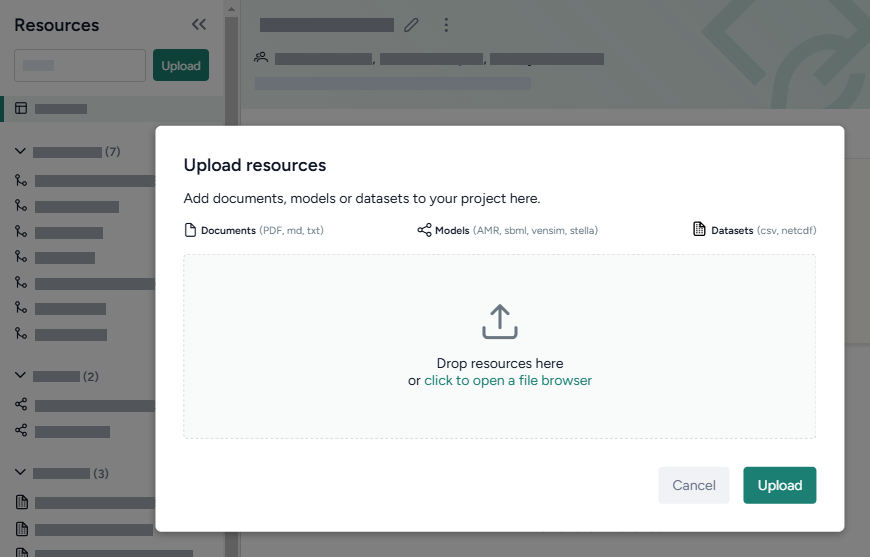

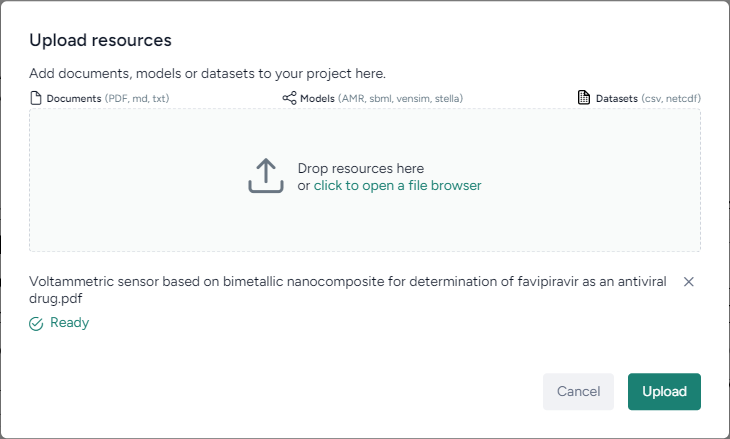

Upload resources

- Do one of the following actions:

- Drag your files into the Resources panel.

- Click Upload and then click open a file browser to navigate to the location of the files you want to add.

-

Click Upload.

Note

To view a resource, click its title in the Resources panel.

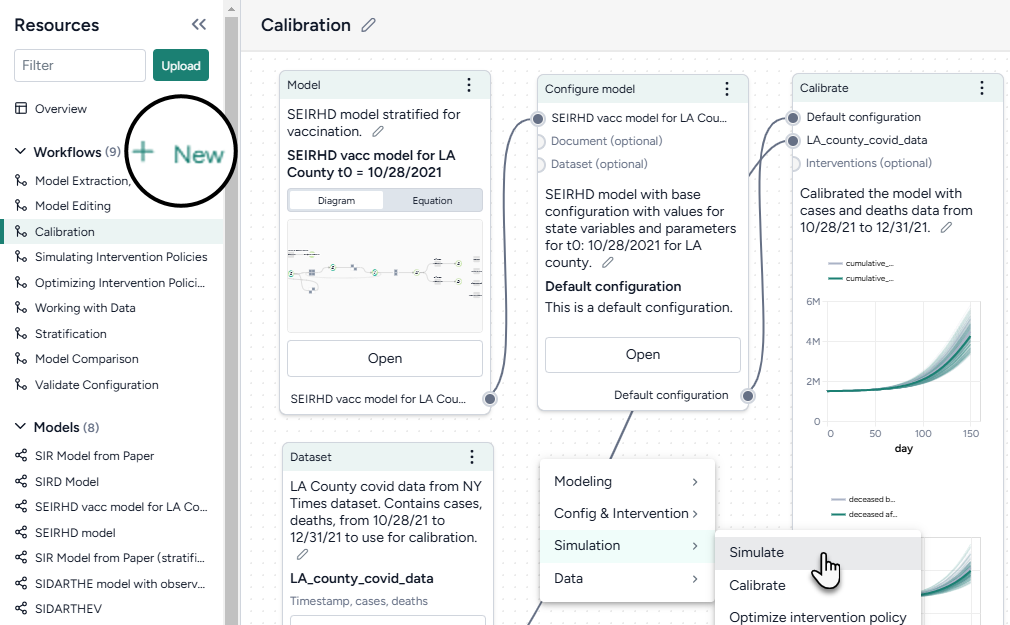

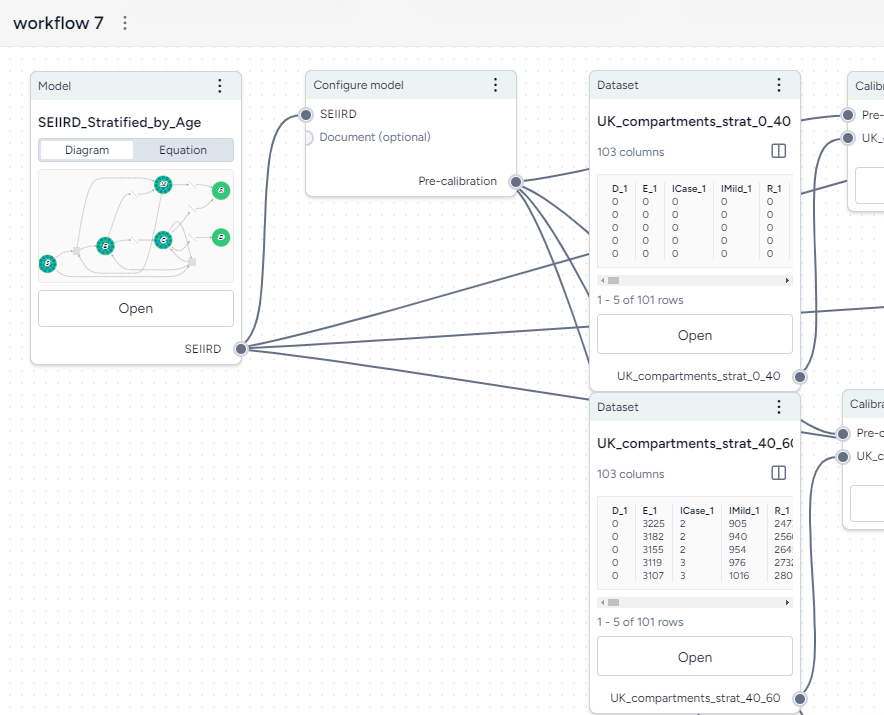

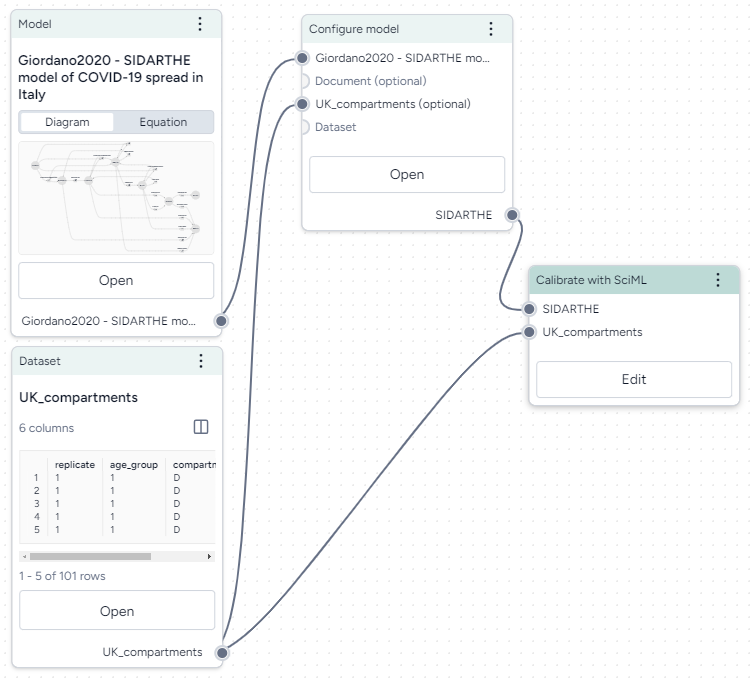

Building scientific modeling workflows¶

Create a workflow to visually build your modeling processes. Each box is a resource or an operator that handles a task like transformation and simulation. Chain their outputs and inputs to:

- Recreate, reuse, and modify existing models and datasets to suit your modeling needs.

- Rapidly create scenarios and interventions by configuring, validating, calibrating, and optimizing models.

Create a workflow

- In the Workflows section of the Resource panel, click New.

- Select a template, fill out the required fields, and then click Create.

-

Use the canvas to customize your workflow:

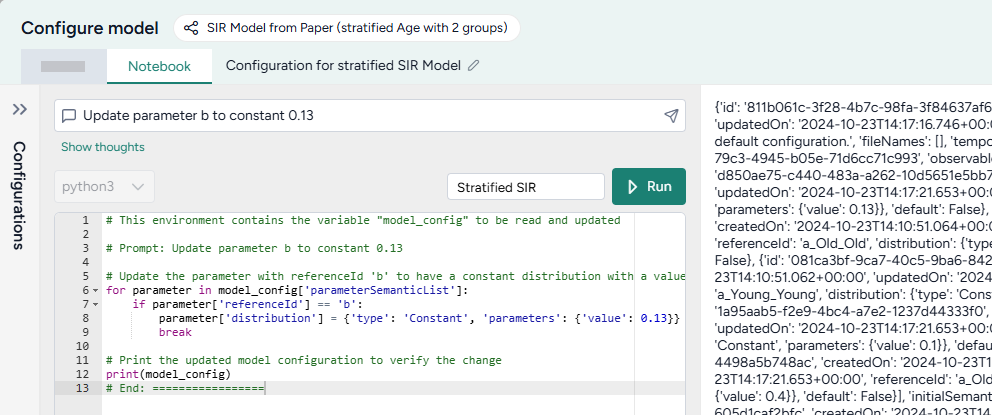

Using the library of operators¶

Terarium's operators support various ways for you to configure complex scientific tasks. For example, you can drill down to access:

- A guided wizard for quickly configuring common settings.

- A notebook for direct coding.

- An integrated AI assistant for creating and refining code even if you don't have any programming experience.

Use a Terarium operator

- Make sure you've connected all the required inputs.

- Click Open on the operator node.

-

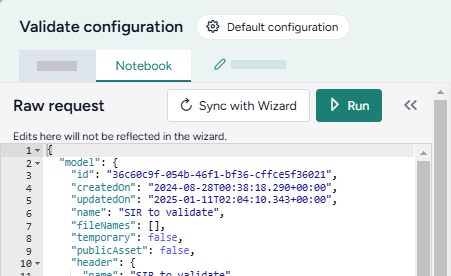

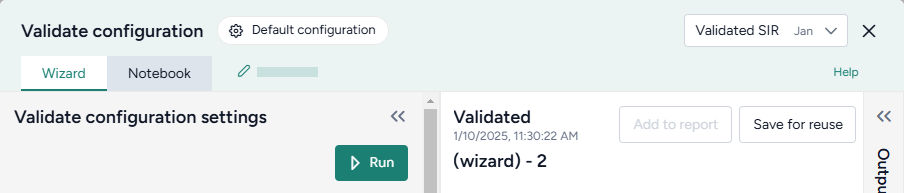

Switch to the Wizard or Notebook view depending on your preference.

Note

Any changes you make in the Wizard view are automatically translated into code in the Notebook view.

-

Modeling

-

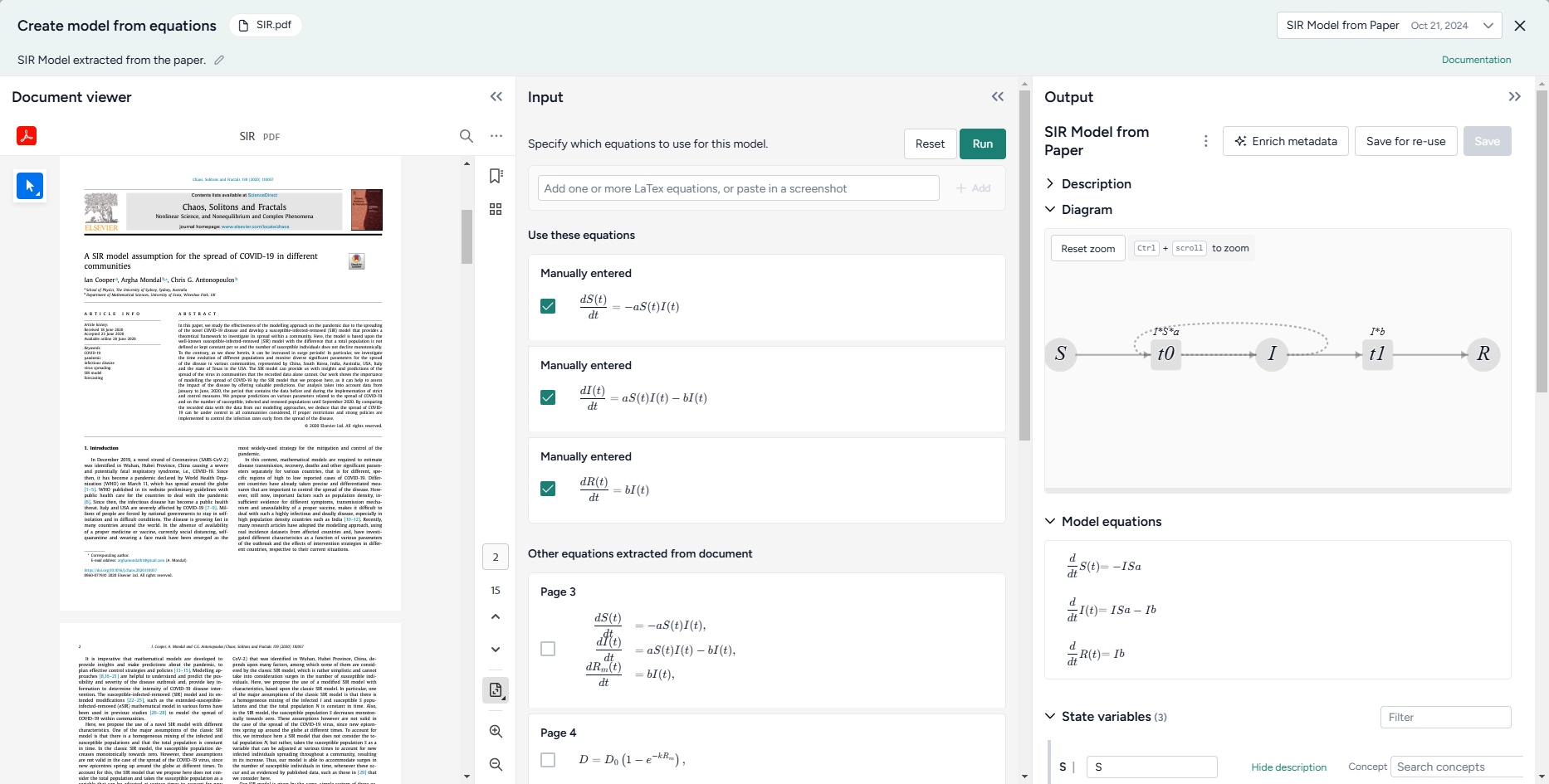

Create model from equations

Build a model using LaTeX expressions or equations extracted from a paper.

Source code -

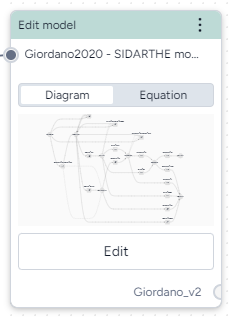

Edit model

Modify model states and transitions using an AI assistant.

Source code overview -

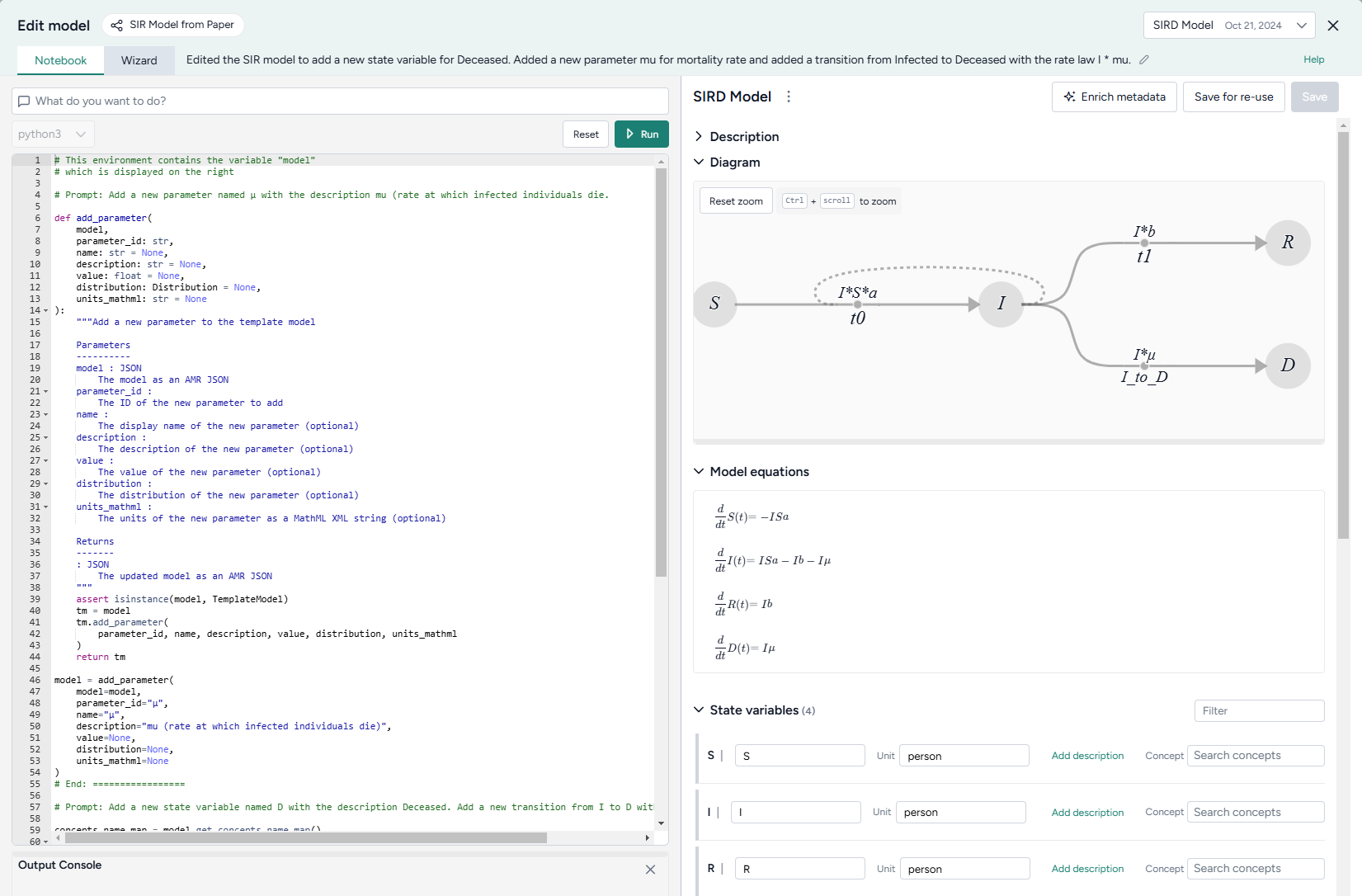

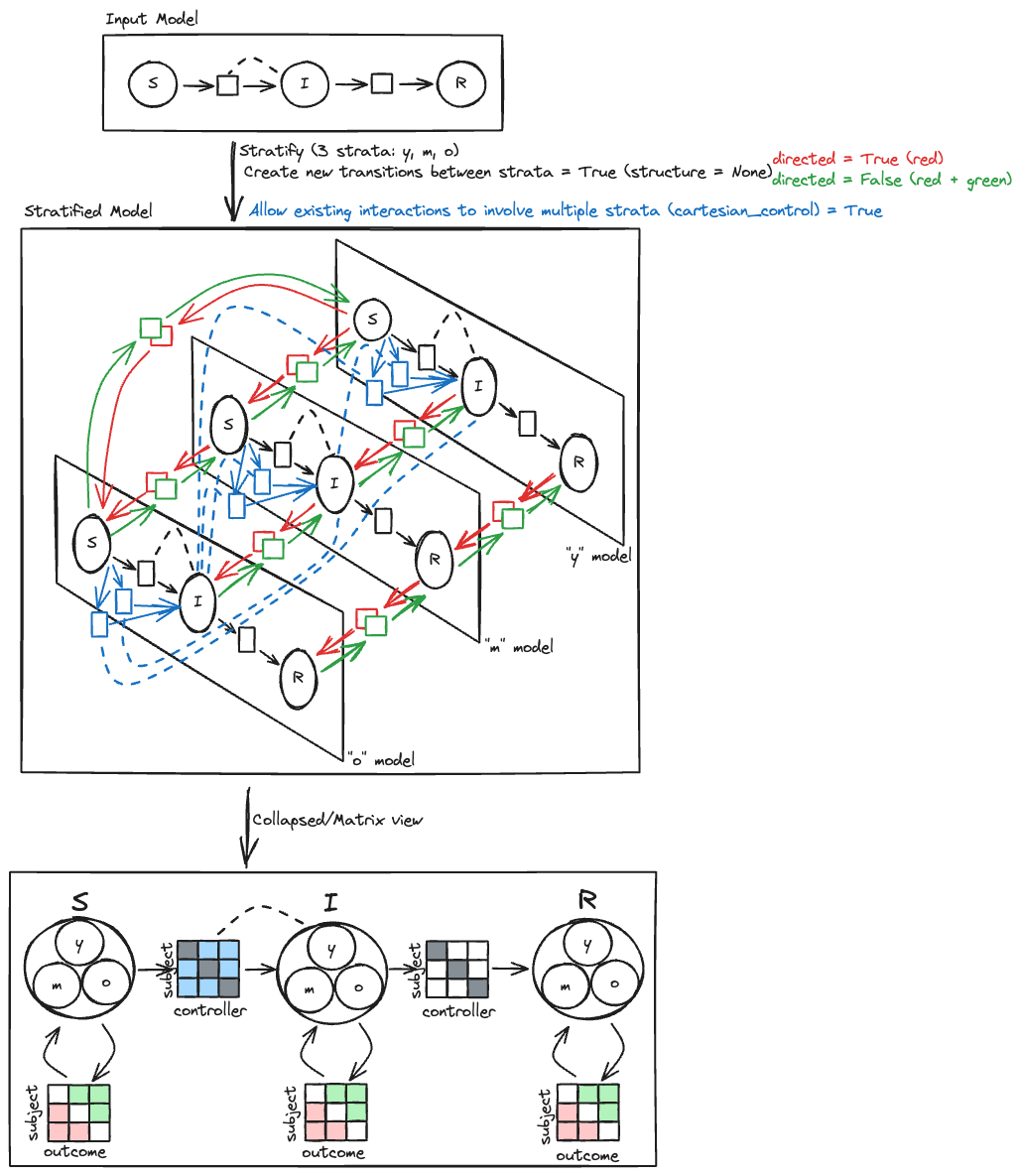

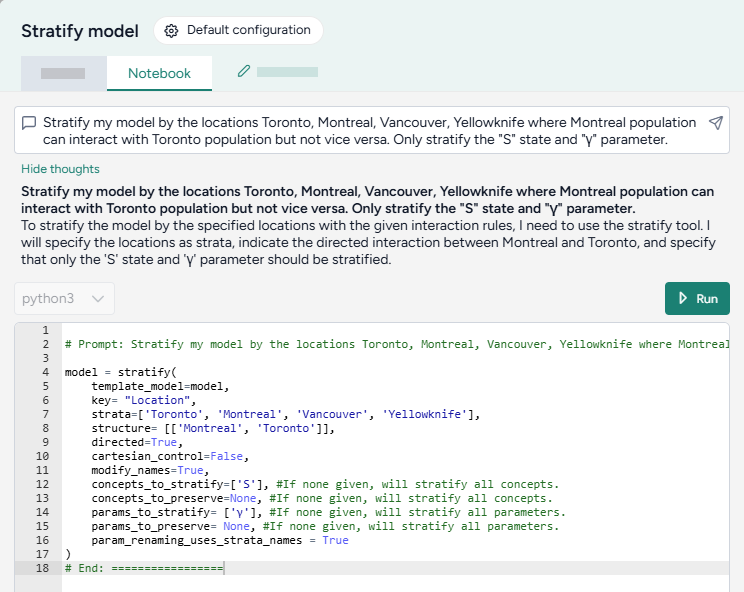

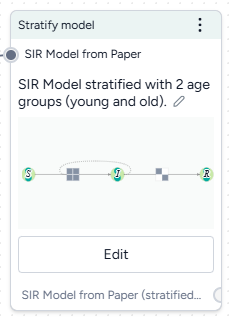

Stratify model

Divide populations into subsets along characteristics such as age or location.

Source code overview -

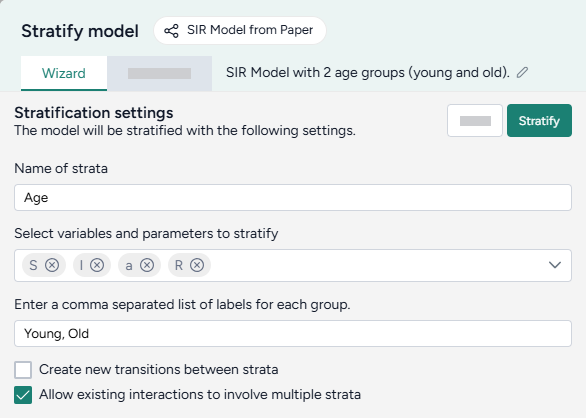

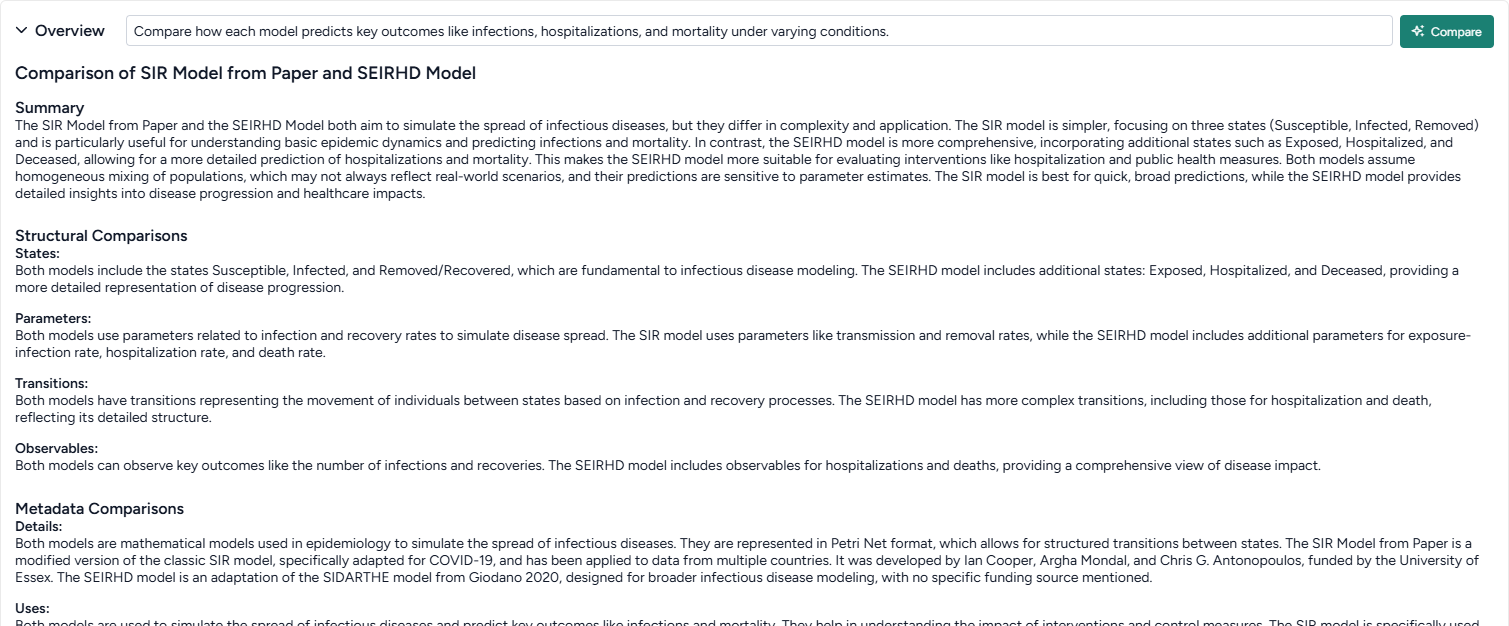

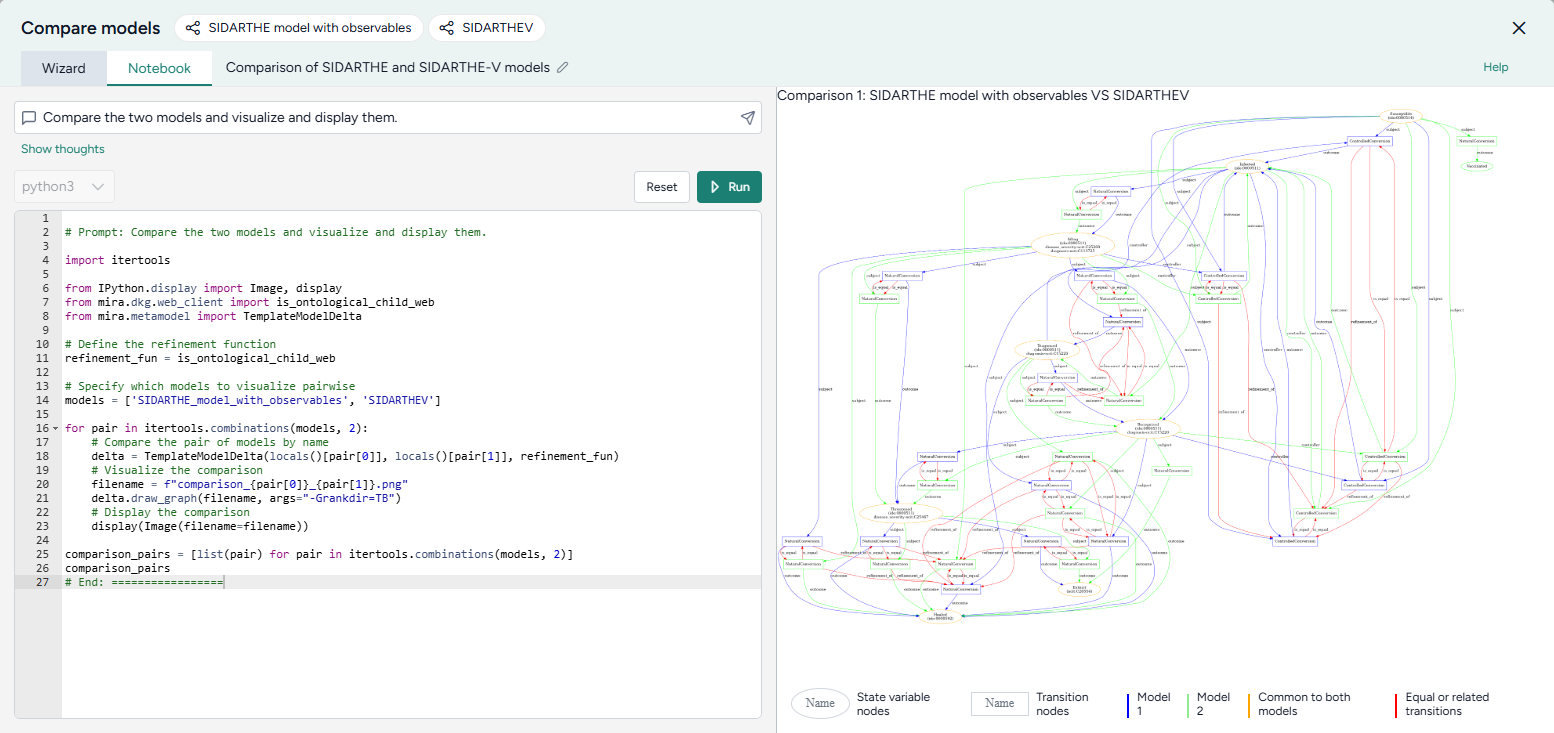

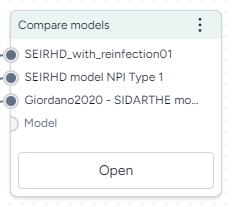

Compare models

Generate side-by-side summaries of two or more models or prompt an AI assistant to visually compare them.

Source code overview

-

-

Simulation

- Simulate

Run a simulation of a model under specific conditions.

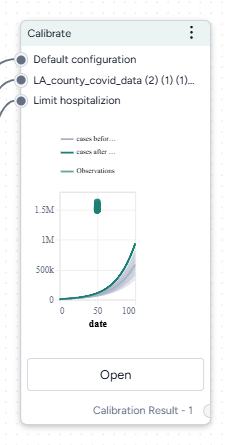

[Source code]https://github.com/ciemss/pyciemss/blob/e3d7d2216494bc0217517173520f99f3ba2a03ea/pyciemss/interfaces.py#L357){ target="_blank" rel="noopener noreferrer" } - Calibrate

Determine or update the value of model parameters given a reference dataset of observations.

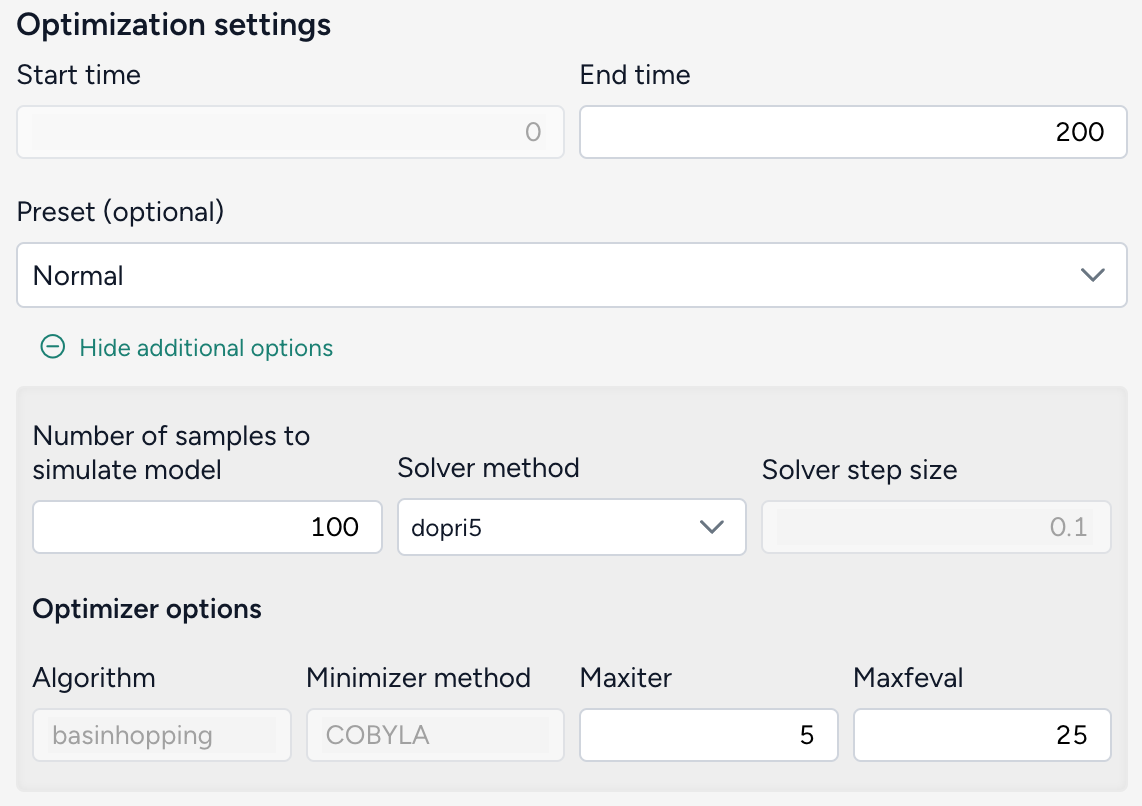

Source code - Optimize intervention policy

Determine the optimal values for variables that minimize or maximize an intervention given some constraints.

Source code - Simulate ensemble

Run a simulation of multiple models or model configurations under specific conditions.

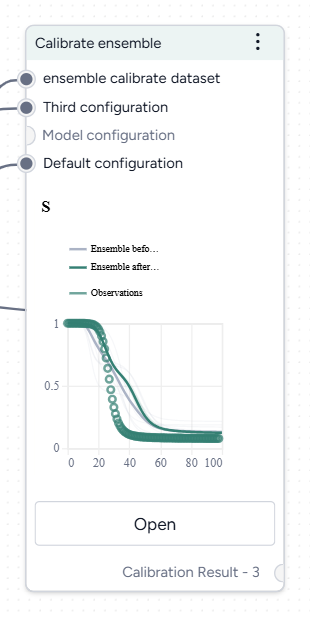

Source code - Calibrate ensemble

Extend the calibration process by working across multiple models simultaneously.

Source code

- Simulate

-

Data

-

Transform dataset

Modify a dataset by explaining your changes to an AI assistant.

Source code user guide -

Compare dataset

Compare the impacts of two or more interventions or rank interventions.

-

-

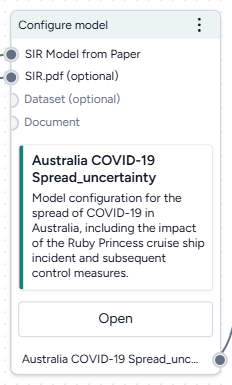

Config and intervention

- Configure model

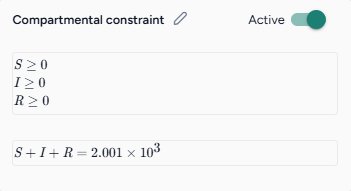

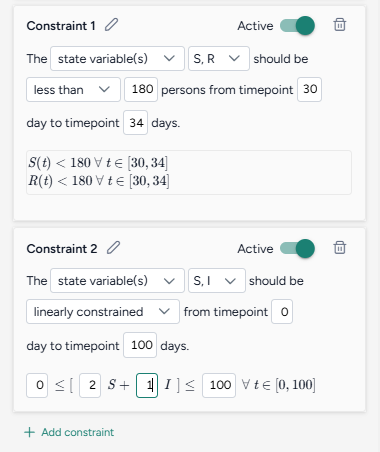

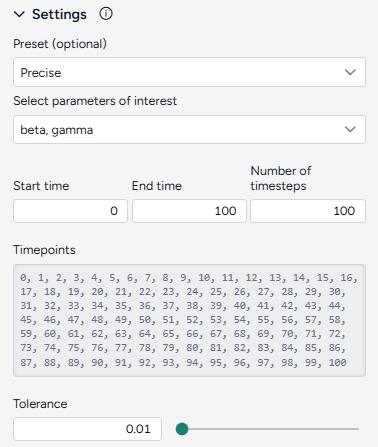

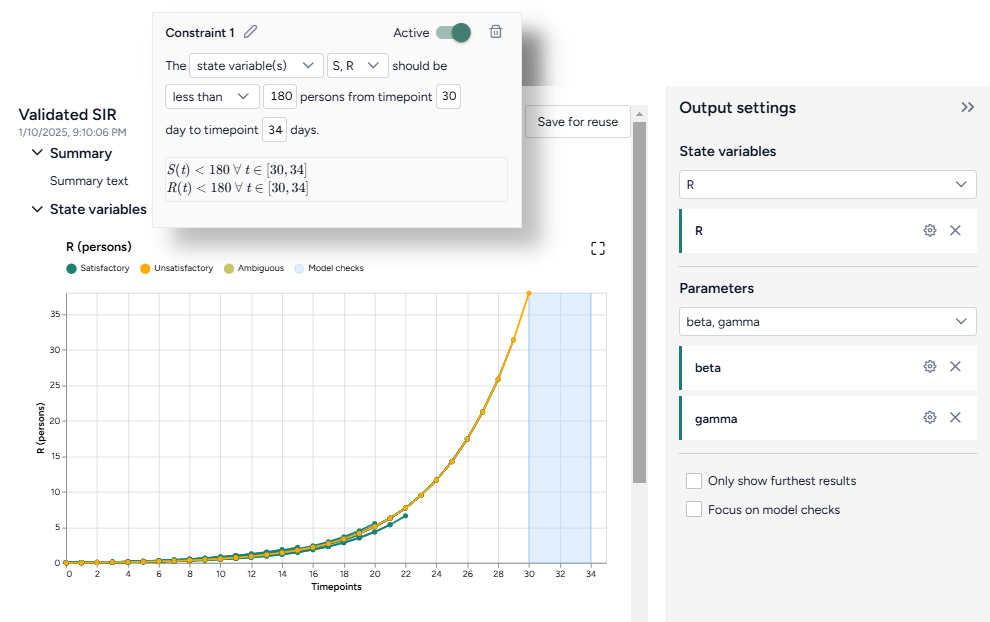

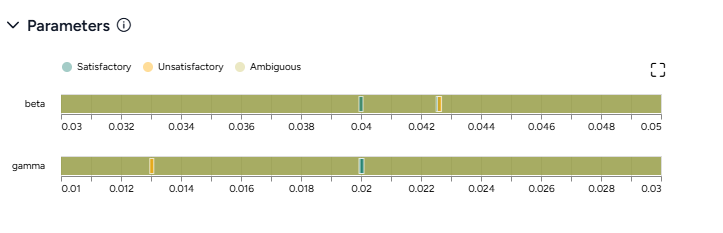

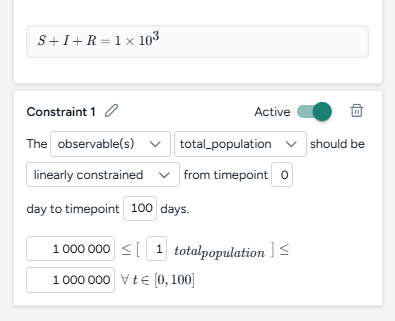

Edit variables and parameters or extract them from a reference resource. - Validate configuration

Determine if a configuration generates valid outputs given a set of constraints.

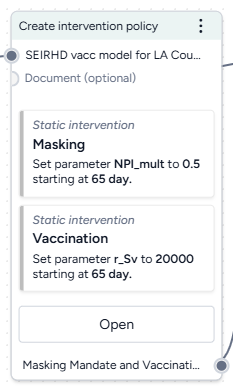

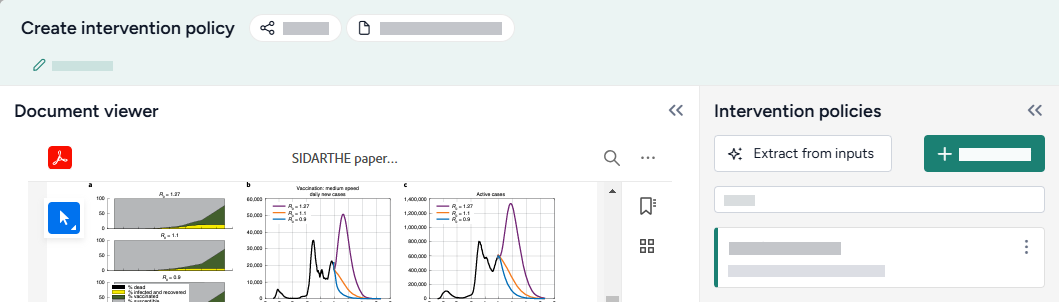

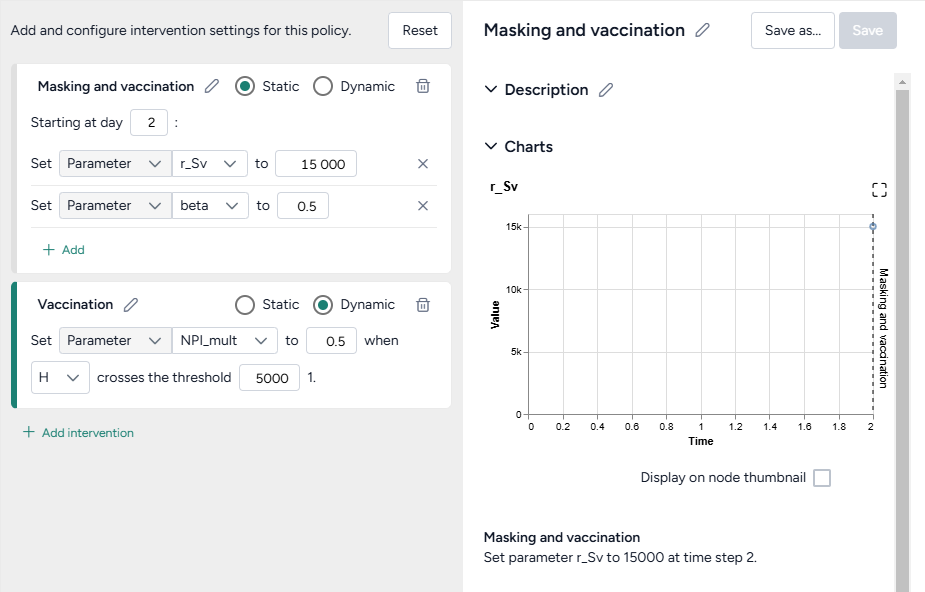

Source code repository - Create intervention policy

Define intervention policies to specify changes in state variables or parameters at specific points in time.

- Configure model

Recreate, modify, and simulate a model¶

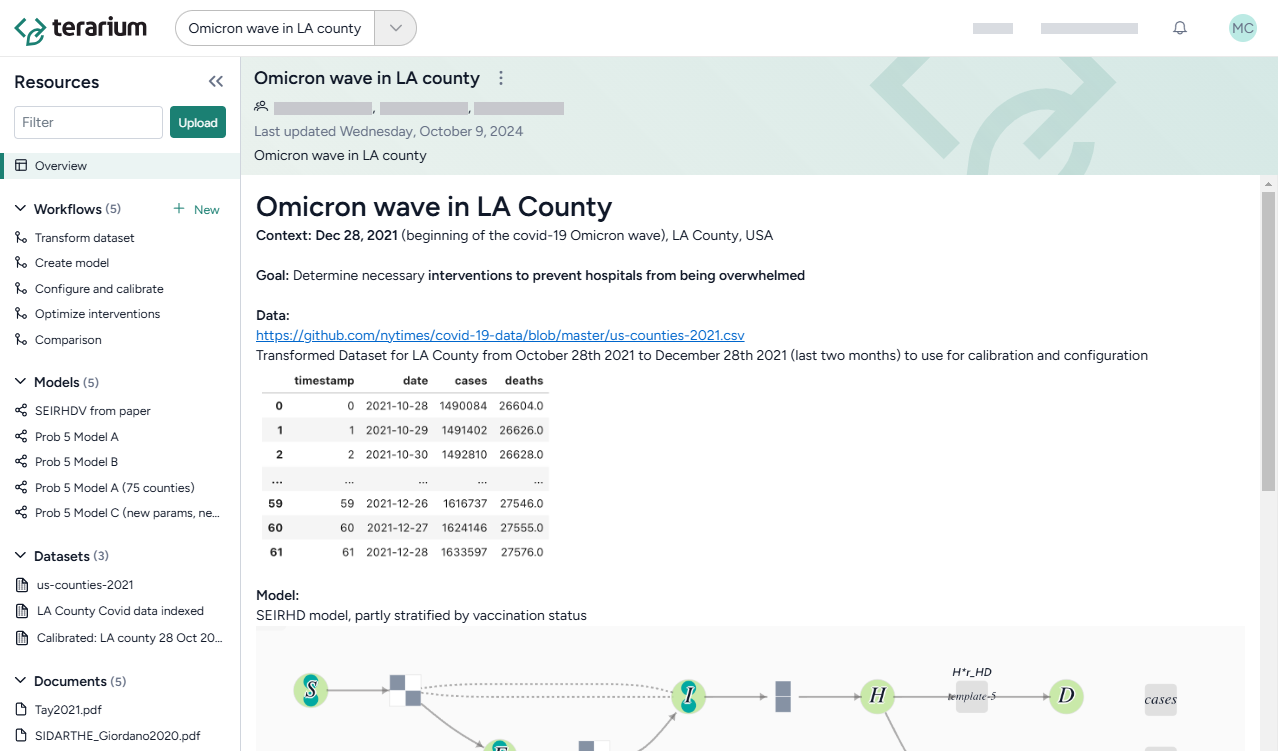

This tutorial is designed to help you learn how—all without needing to code—you can recreate, refine, and analyze models in Terarium. You can follow along by looking at the shared Terarium help sample project.

The goal of the modeling exercise in the project is to reduce COVID hospitalizations in LA county. Starting with only a dataset of cases and deaths and a few scientific papers describing disease models, it shows how to:

-

Upload and modify models and data

- Upload resources

- Create and compare models from equations

- Edit a model

- Stratify a model to account for dimensions like vaccination status

- Work with data

-

Simulate models and explore intervention policies

Try it out yourself!

To better understand the described modeling processes, see the sample workflow link at the top of each section. To try something out yourself, copy the models, documents, or datasets from the sample workflow into your own project.

To copy a model or dataset:

- Click its name in the Resources panel.

- Click > Add to project and select your project.

To copy a document:

- Click its name in the Resources panel.

- Click > Download this file and save it to your computer.

- Drag the file into the Resources panel of your prroject.

Upload and modify models and data¶

Upload resources¶

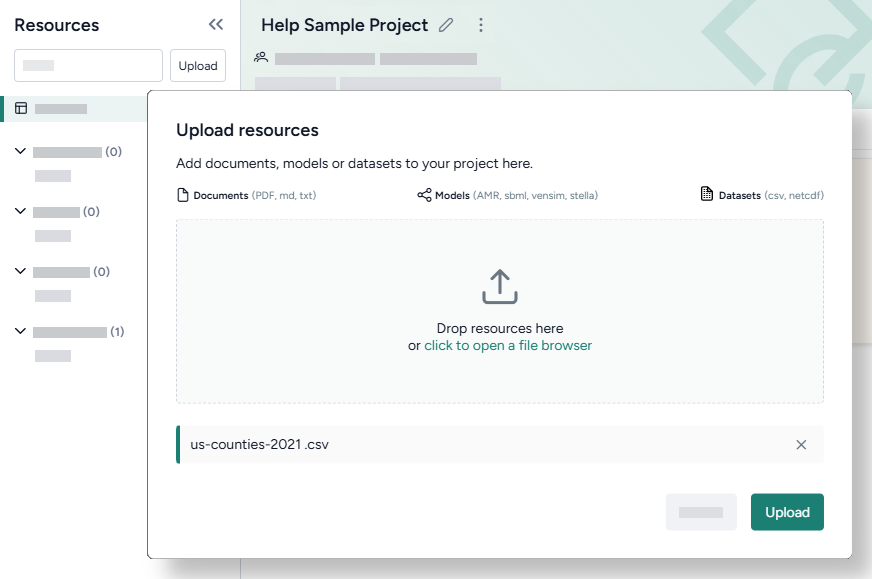

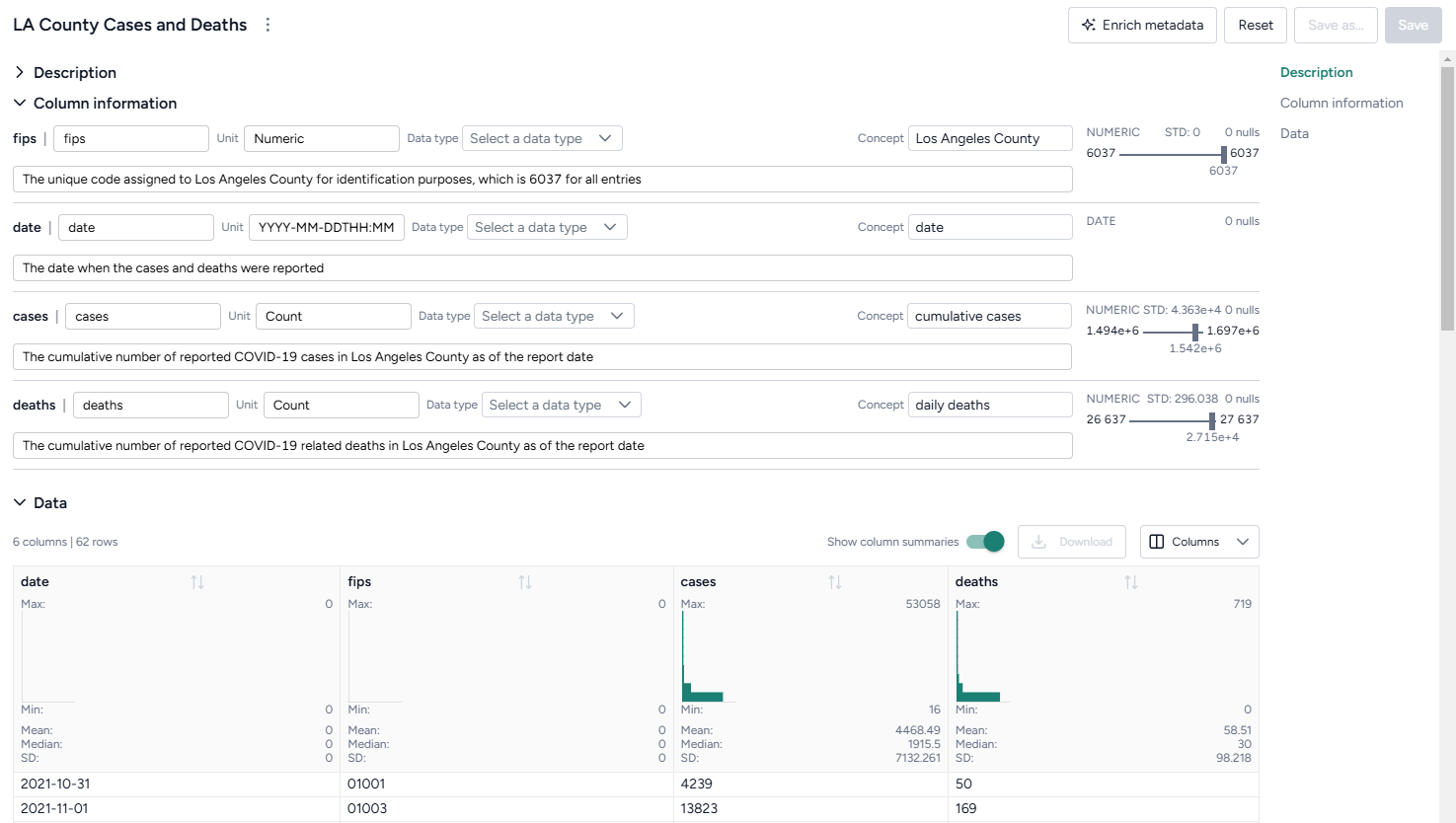

Begin setting up your project by uploading the models, papers (documents), and datasets you need for your modeling processes. In this case, that includes a dataset of U.S. COVID cases and deaths from 2021 and a set of papers describing different disease models.

Upload modeling resources

- Drag the dataset and document files into the Resources panel and then click Upload.

Create and compare models from equations¶

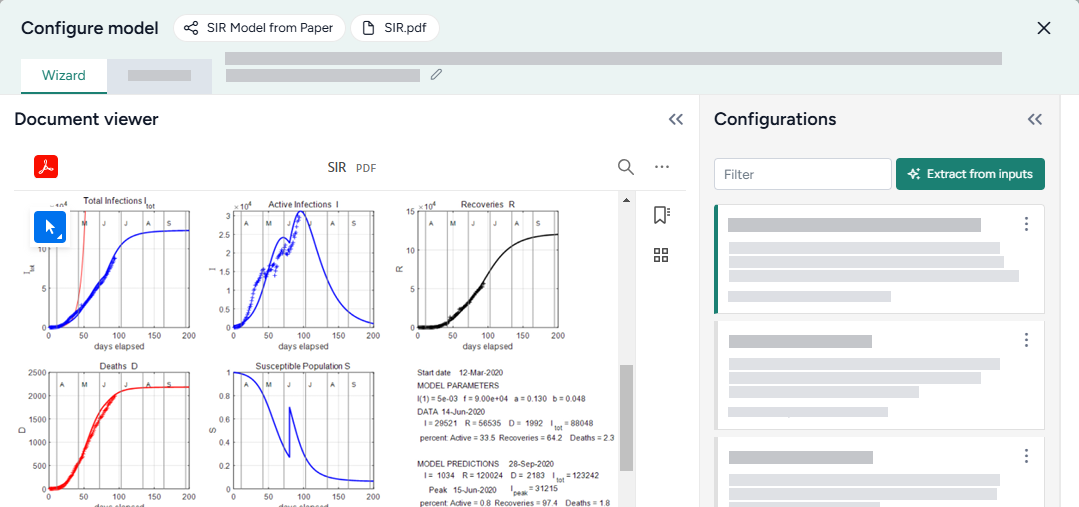

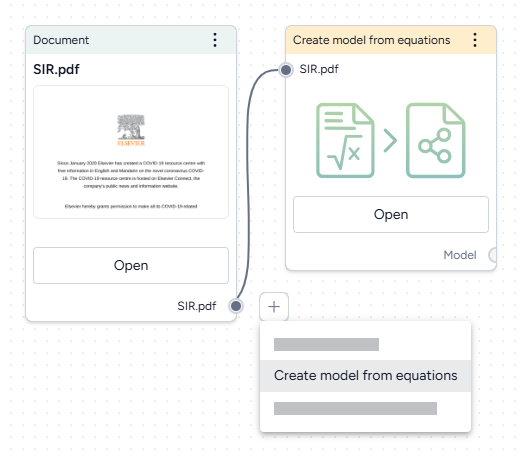

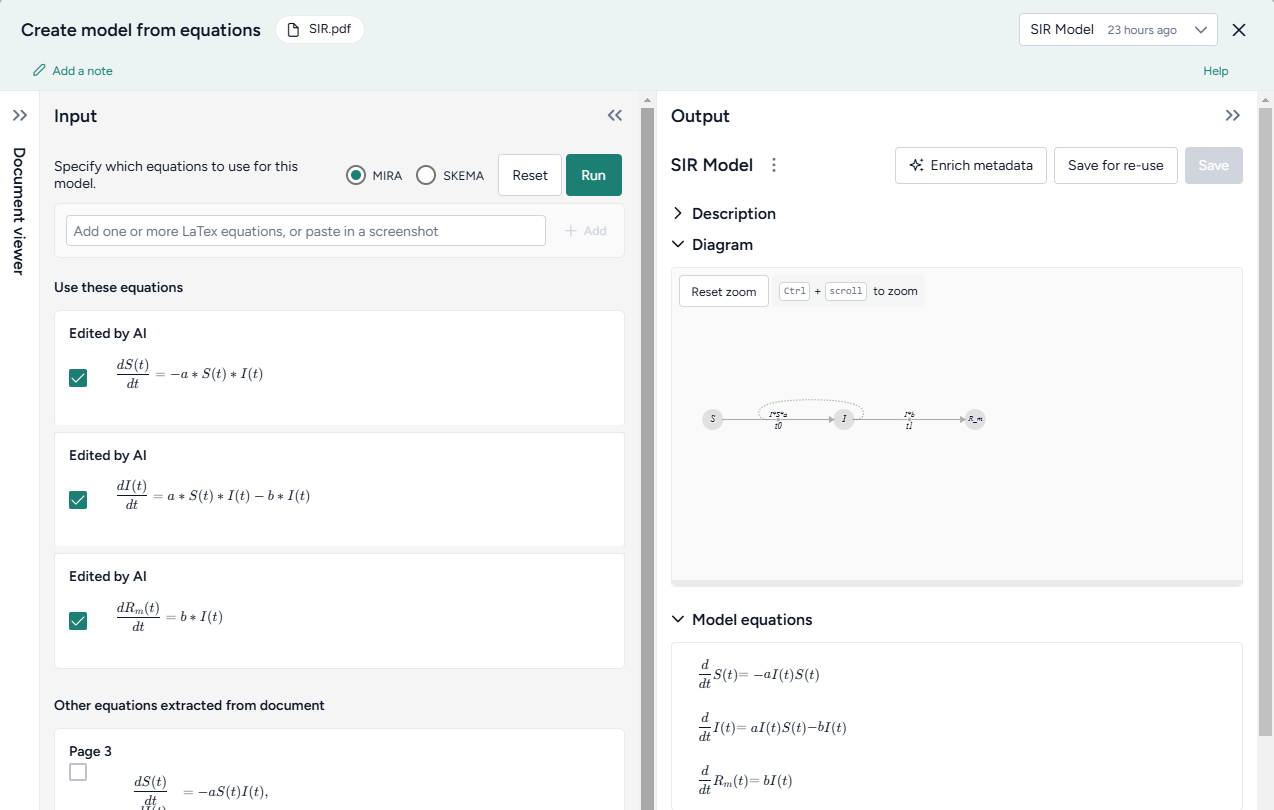

Terarium can automatically recreate a modelfrom a set of ordinary differential equations. In this case, we create models by extracting equations from the uploaded documents, but you could also get equations from pasted images or manually enter them as LaTeX.

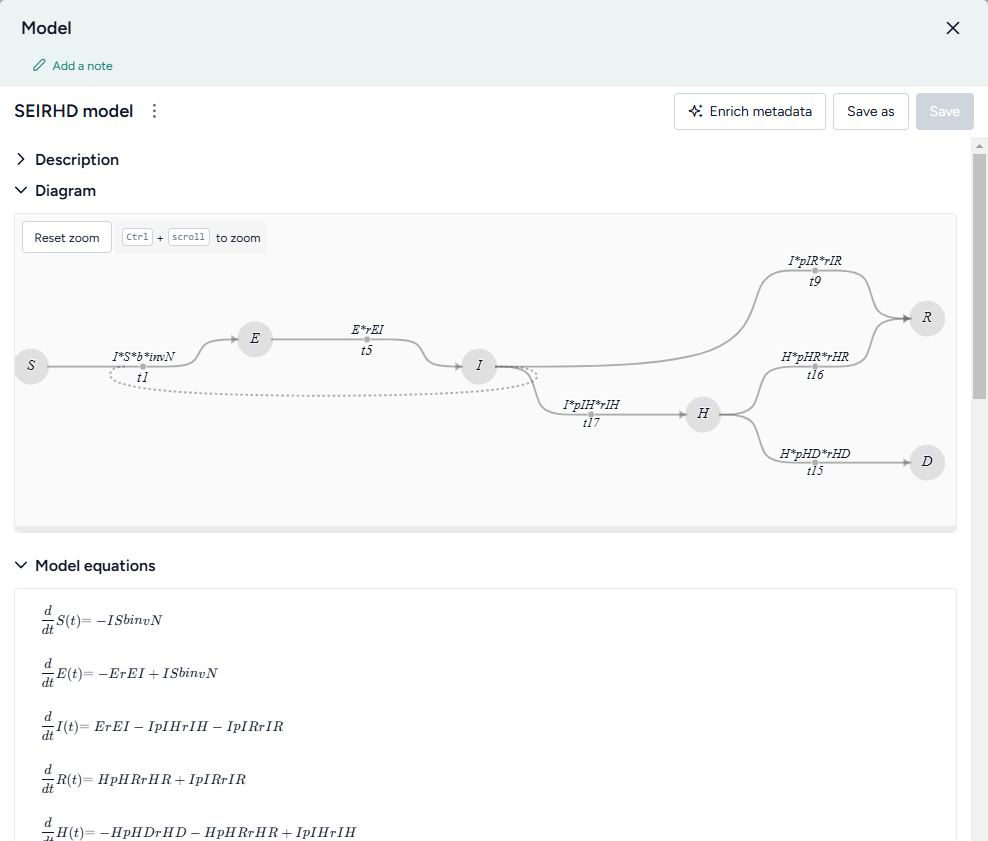

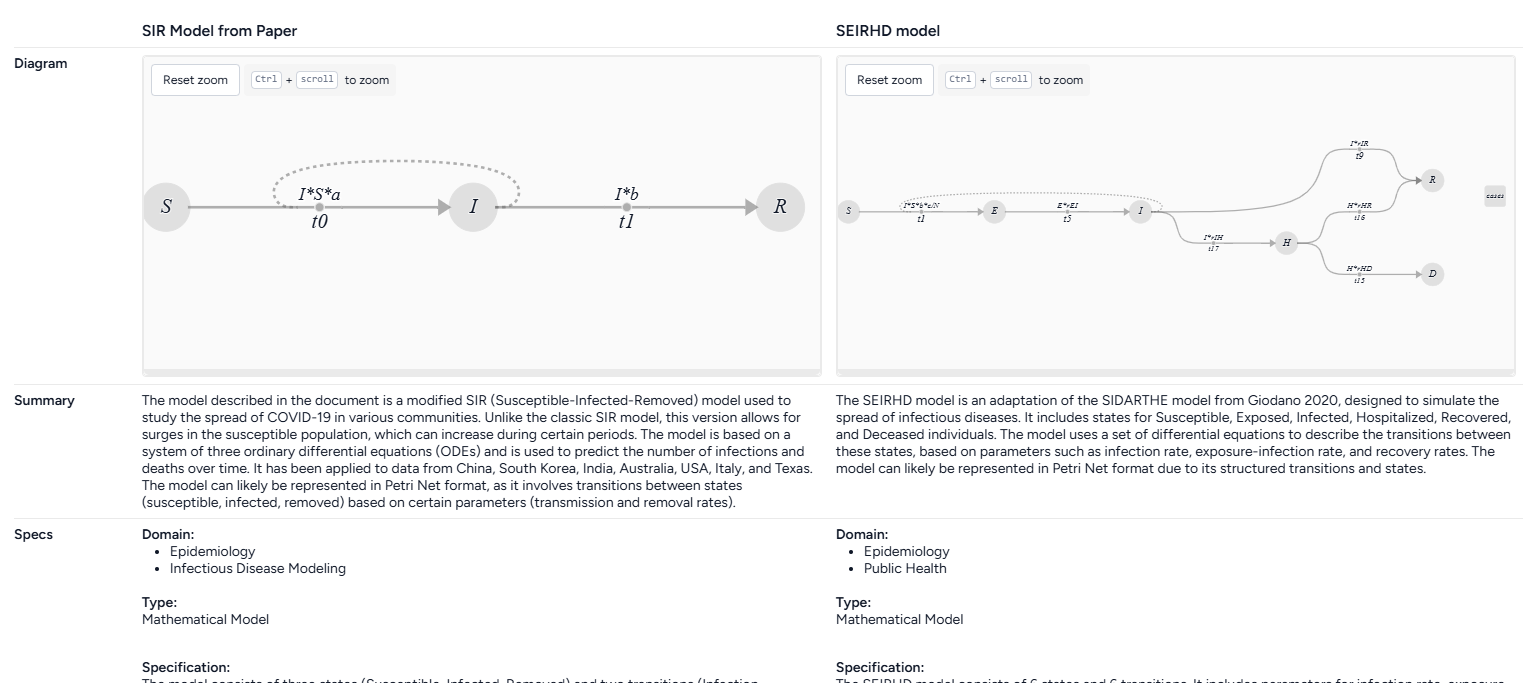

When the extraction and creation is complete, Terarium builds visual representations of the extracted SIR and SEIRHD models that show how people progress between disease states.

Recreate a model from a paper

- Drag each document into the workflow canvas, hover over its output, click Link > Create model from equations, and then click Open.

- Review the equations automatically extracted from the document. To make changes or correct extraction errors, click an equation to edit the LaTeX version.

- Select each equation you want to include in the model and then click Run > Mira.

- At the top of the Output panel, click Save for re-use, and then enter a unique name.

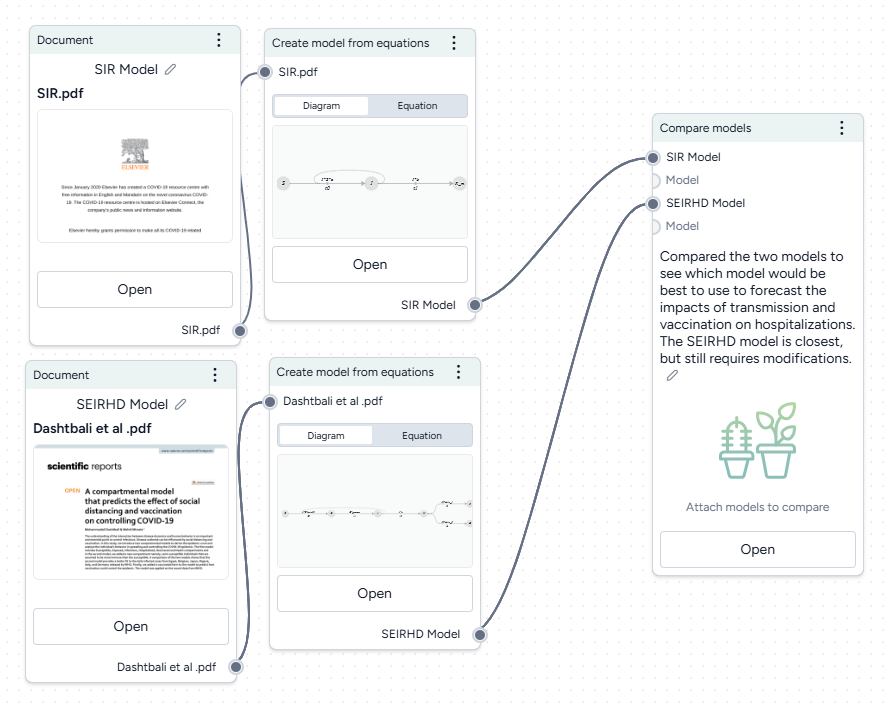

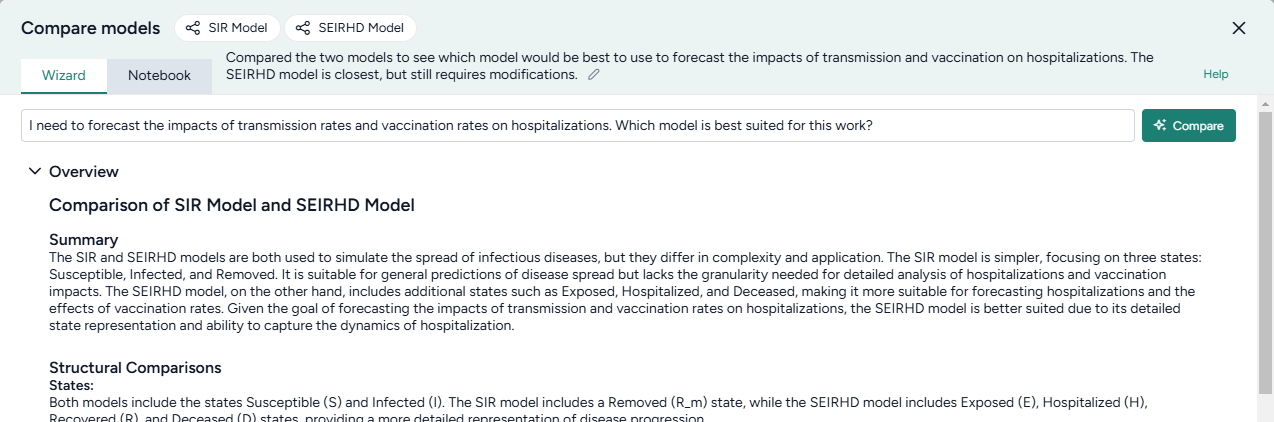

To understand the extracted SIR and SEIRHD models better and decide which one to use, we pipe them into a Compare models operator. This uses an AI assistant to create side-by-side model cards for each according to our modeling goal of reducing hospitalizations

Compare models

- Hover the output of a Create model from equations operator and click Link > Compare models.

- Click the output of the other Create model from equations operator and connect it to the new Compare models operator.

- Click Open.

-

Enter your goal for making the comparison. In this case:

I need to forecast the impacts of transmission rates and vaccination rates on hospitalizations. Which model is best suited for this work?

-

Click Compare and review the summary tailored to the specified goal.

The AI-generated summary indicates that the SEIRHD model would be best for forecasting the impacts of transmission and vaccination on hospitalizations.

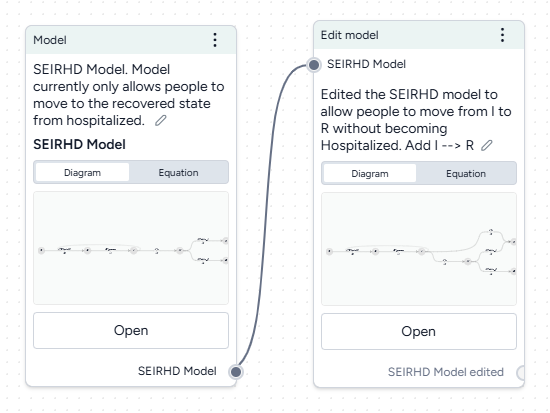

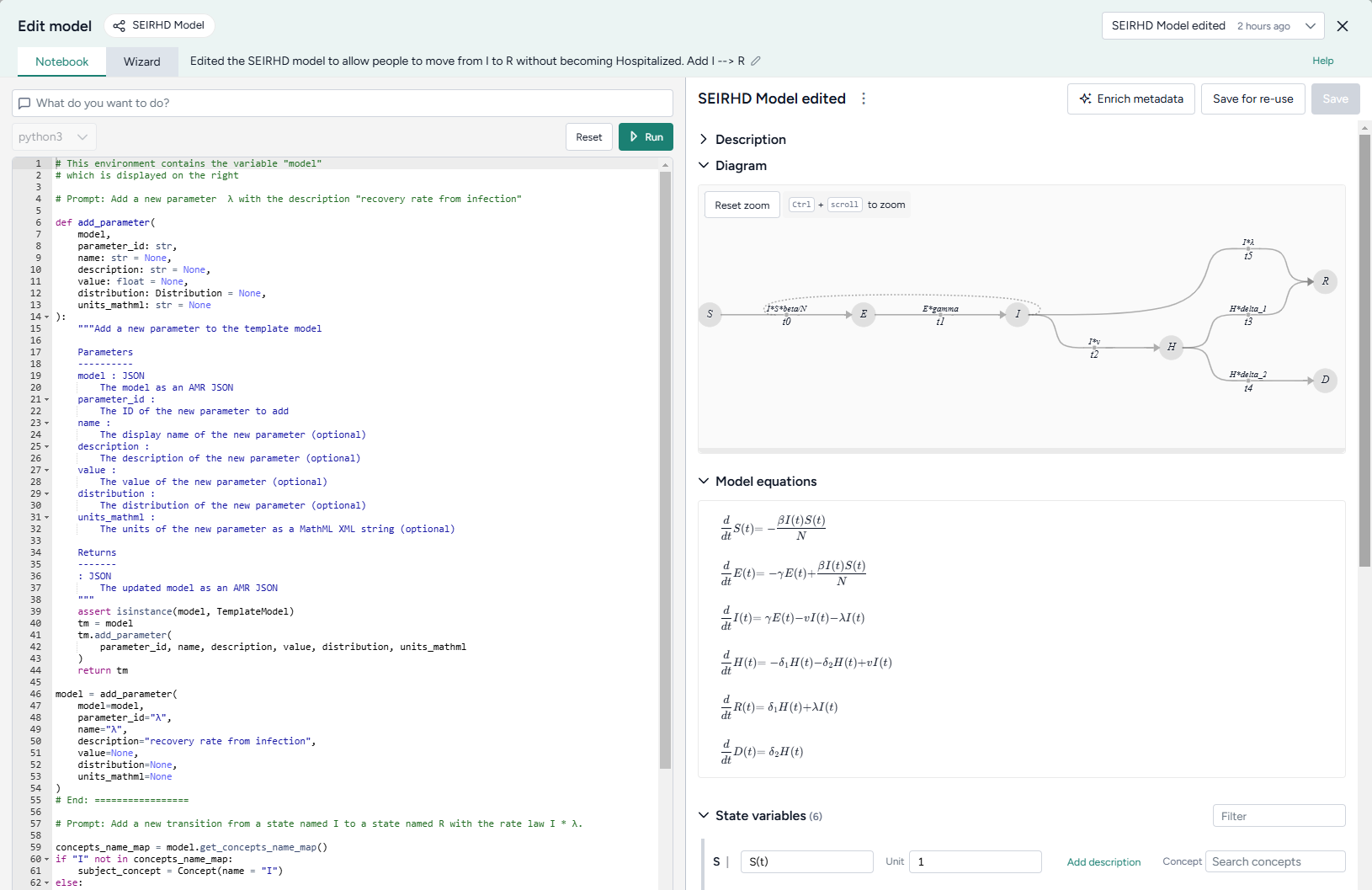

Edit models¶

Now we want to update the SEIRHD model to allow people to move from infected to recovered without becoming hospitalized. Even if you don't have any coding experience, you can use Terarium's AI-assisted Edit model notebook. The assistant simplifies the process of changing or building off an existing model—no knowledge of specialized modeling libraries needed!

Add a new transition from infected to recovered

- Pipe the model into an Edit model operator and then click Open.

-

Add a new parameter for the transition rate law by asking the AI assistant to:

Add a new parameter λ with the description "recovery rate from infection" -

Add the new transition by asking the assistant to:

Add a new transition from a state named I to a state named R with the rate law I * λ -

Click Run to apply the changes. Compare the edited model to the previous state by changing the output in the top right.

- Click Save for re-use and then enter SEIRHD edited as the name of the new model.

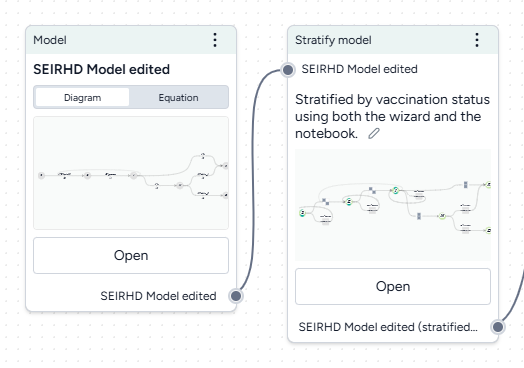

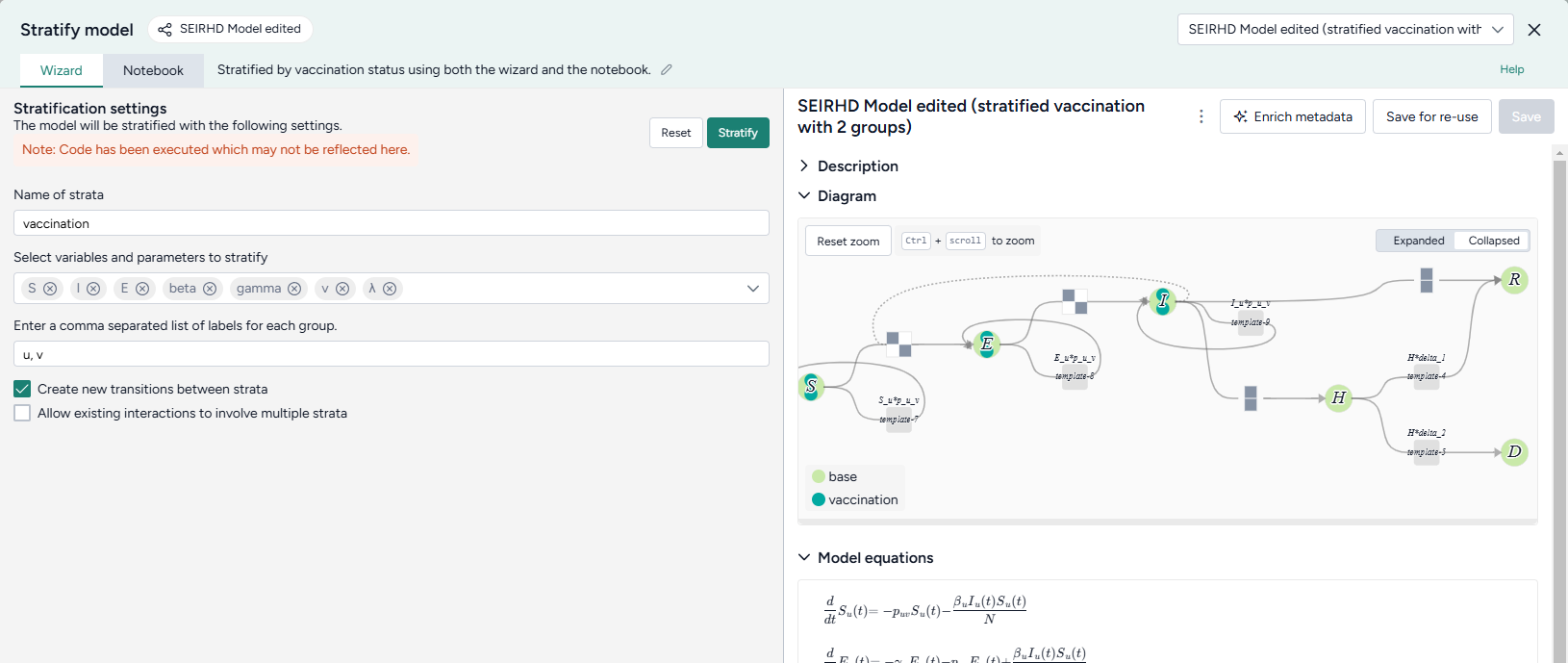

Stratify models¶

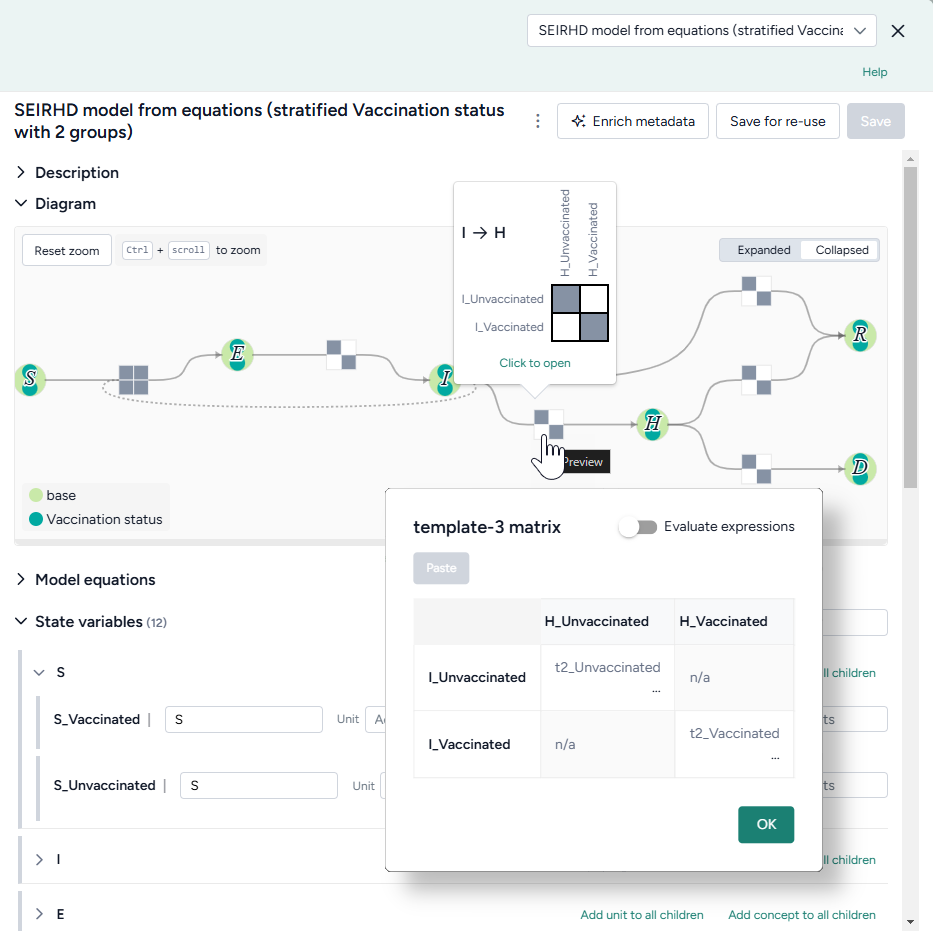

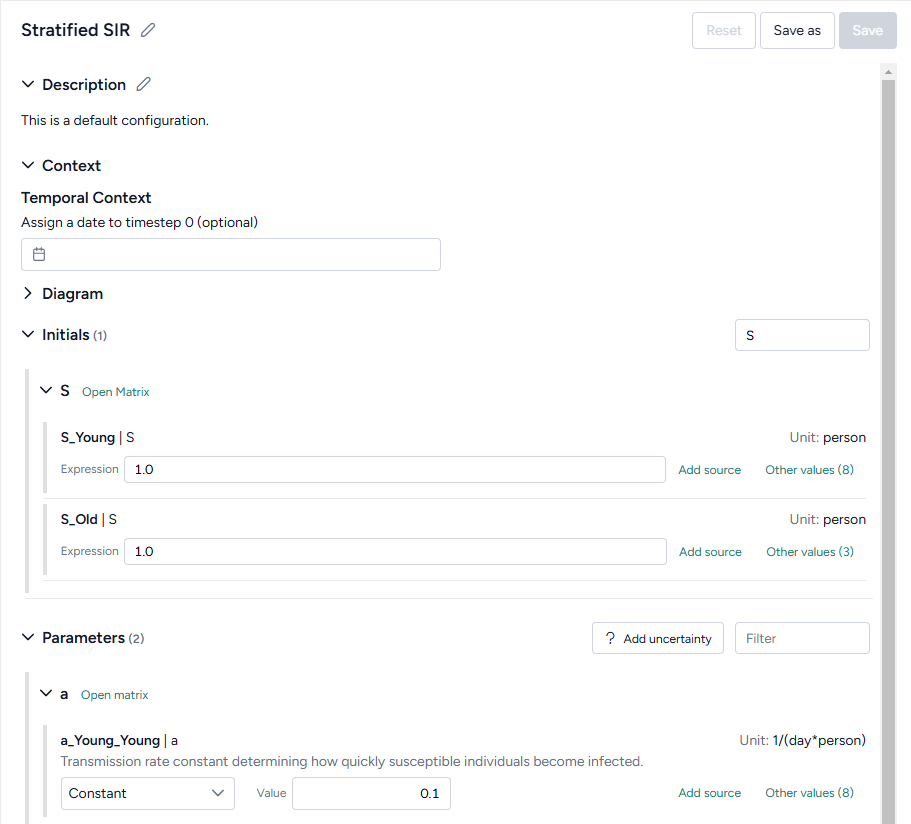

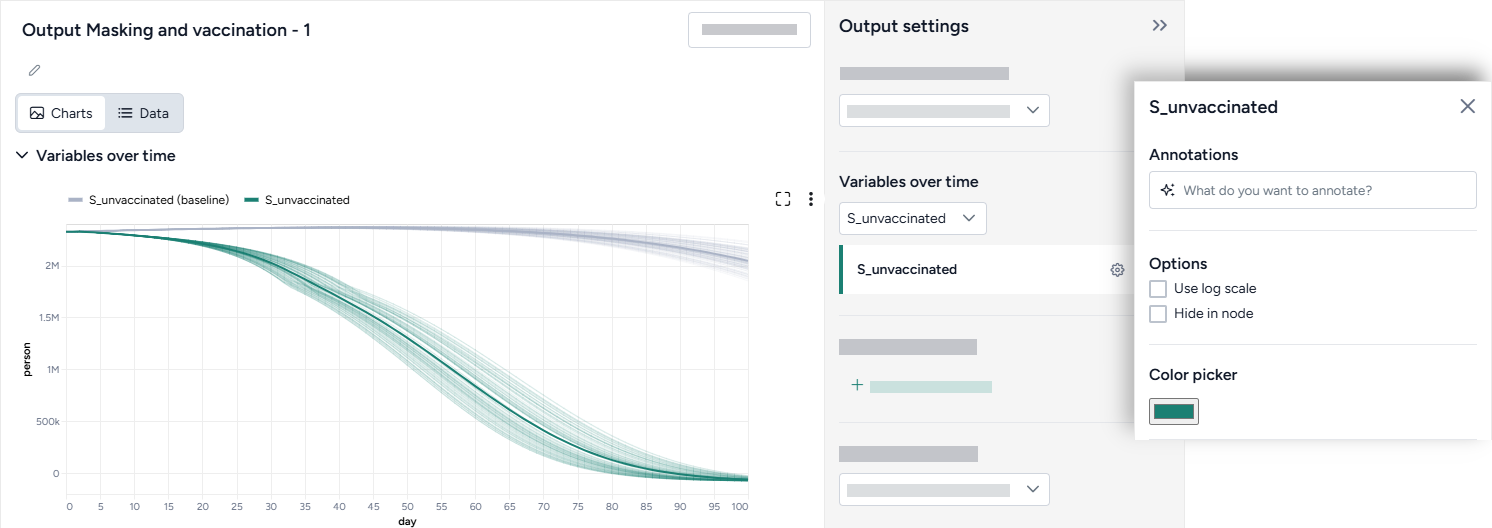

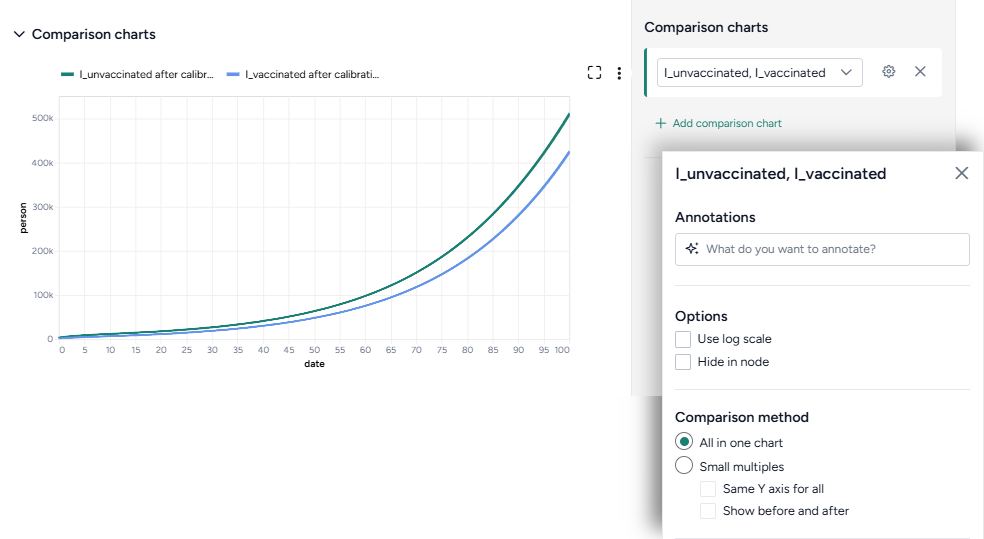

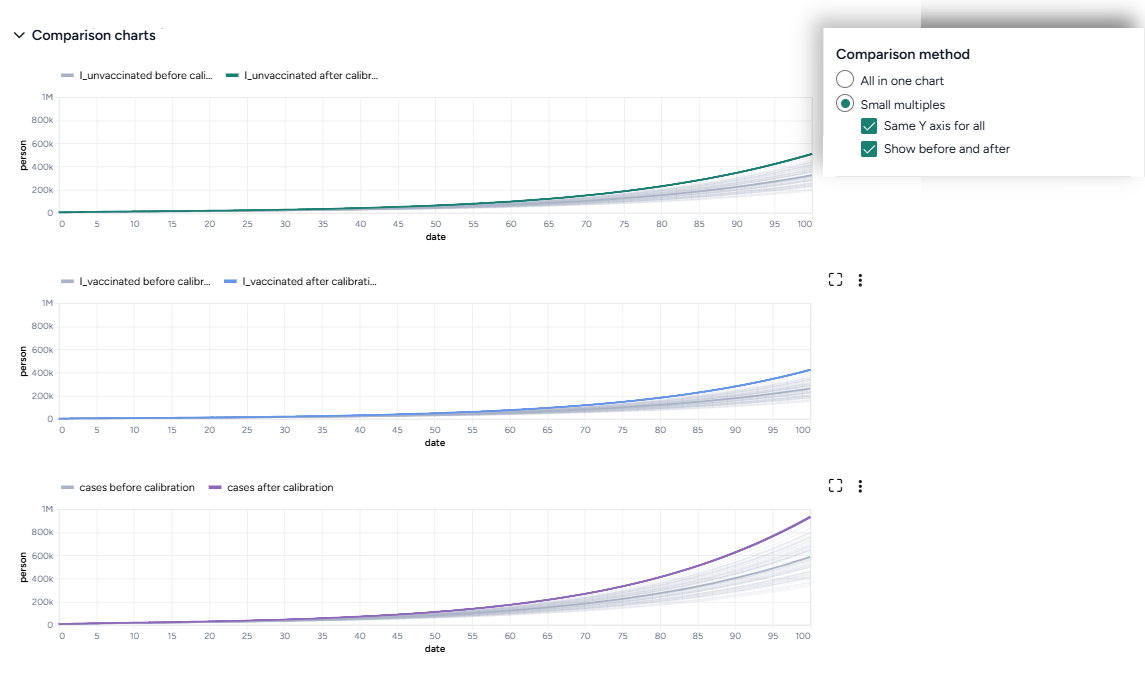

Now we want to stratify our edited model to account for vaccinated and unvaccinated groups. Terarium's stratification process is an error-proof approach to stratifying along any dimension, such as age, sex, and location.

Stratify the SEIRHD model by age

- Pipe the model into an Stratify model operator and then click Open.

- Name the strata "vaccination".

- Select to stratify the S, E, and I state variables and the beta (infection rate), gamma (latent time), v (hospitalized rate), and λ (recovery rate) parameters.

-

List the labels for each strata group:

u, v -

Choose the allowed transitions and interactions between strata:

- Select Create new transitions between strata to allow unvaccinated people to turn into vaccinated people.

- Clear Allow existing interactions between strata to prevent vaccinated and unvaccinated people from interacting with and infecting each other.

Note

In this case because vaccinated people cannot turn into unvaccinated people, additional settings must be configured in the Notebook view. For more information, see the code inside the Stratify model operator in the Terarium help sample project or see Stratify a model.

-

Click Stratify.

- Click Save for re-use and edit the name of the new model.

Work with data¶

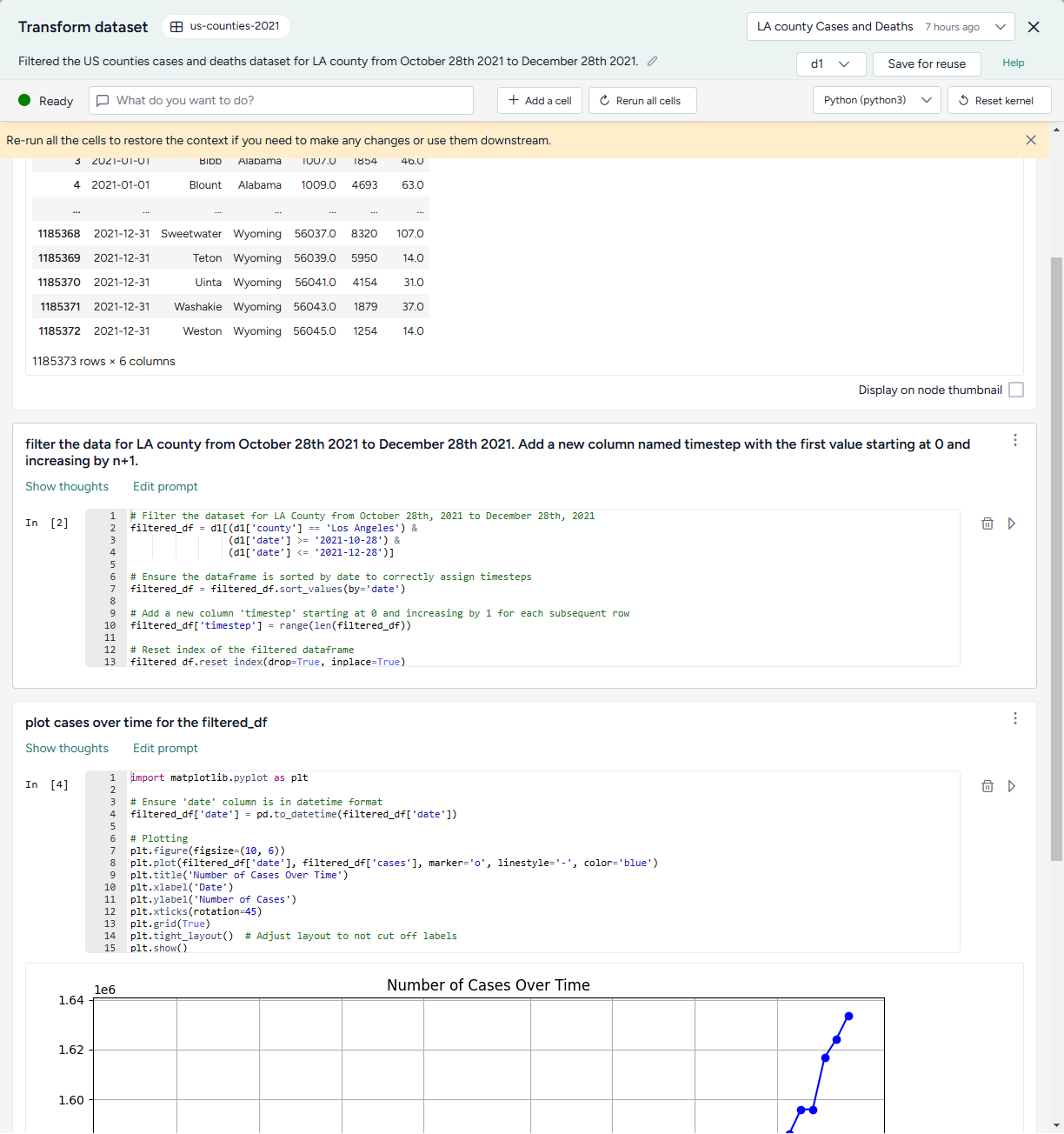

The uploaded dataset covers all of the U.S. for 2021. However, we're only interested in LA county. We can use Terarium's data transformation tools to filter down to just what we need.

We'll also add a timestep column, which we'll need later to calibrate our model to the historical data.

Filter the case data to focus on LA county

- Drag the dataset from the Resources panel onto the canvas, hover over its output, click link > Transform dataset, and then click Open.

- Preview the data by clicking Run .

-

Ask the assistant to filter the data:

filter the data for LA county from October 28th 2021 to December 28th 2021. Add a new column named timestep with the first value starting at 0 and increasing by n+1. -

Ask the assistant to plot the data over time:

plot cases over time for the filtered_df -

Inspect the generated code, change the following line to include

COVIDin the title, and then click Run to redraw the plot.plt.title('Number of Cases Over Time') -

Show the plot in the workflow by selecting Display on node thumbnail.

- At the top of the window, select filter_d1, click Save for reuse, and enter LA county cases and deaths as the name of the new dataset.

Doing your data transformations in Terarium helps make your modeling process more transparent and reproducible.

Simulate models and explore intervention policies¶

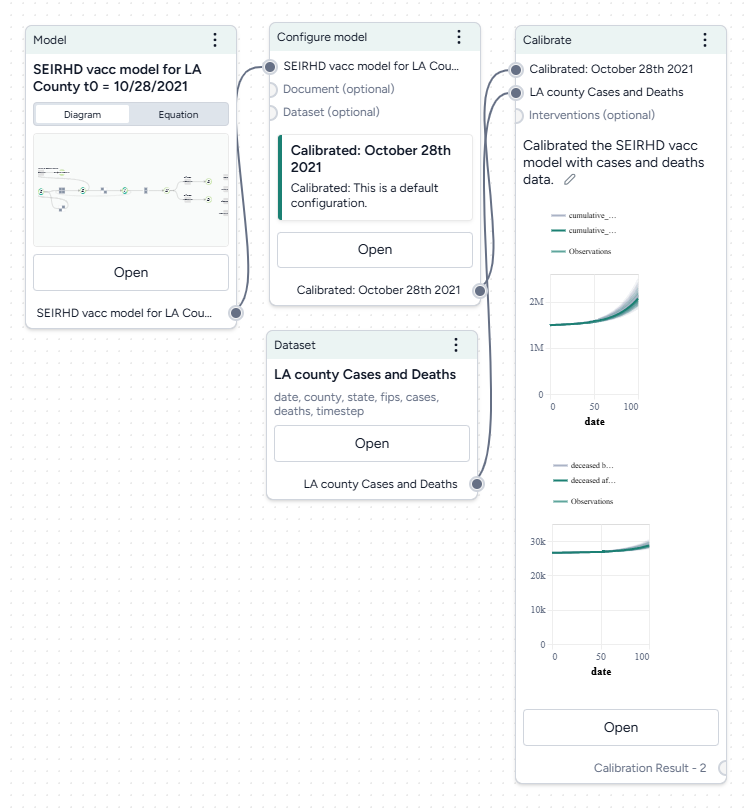

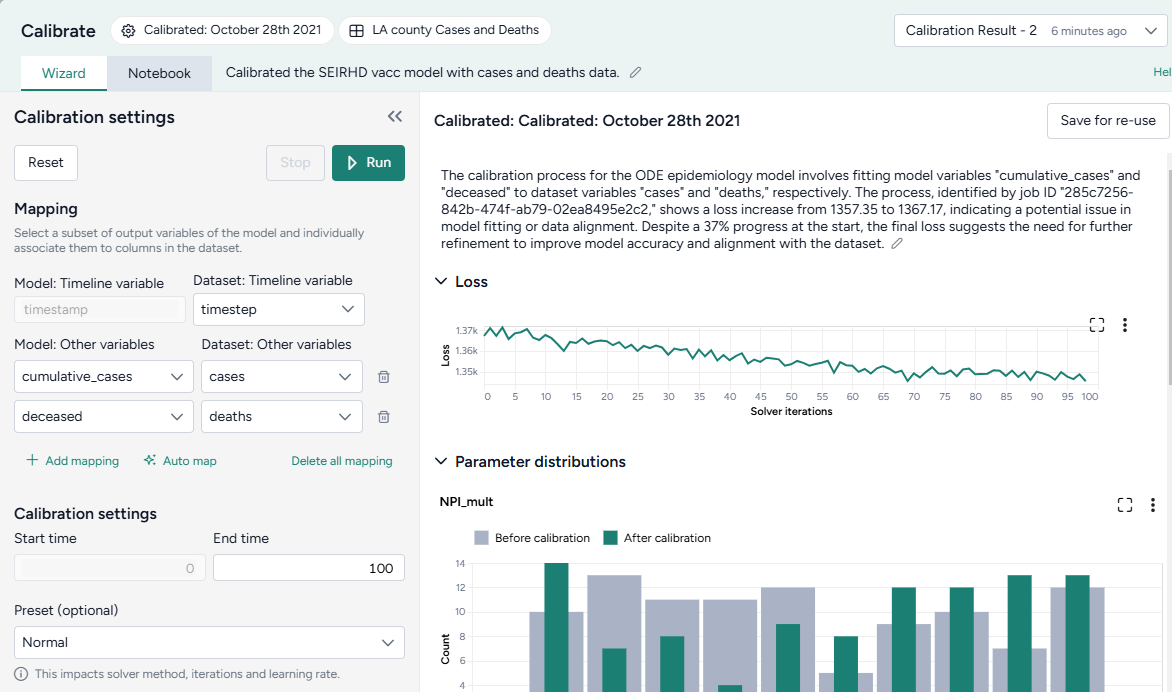

Configure and calibrate a model¶

Before you can simulate the modified SEIRHD model, we need to configure it to set the initial values for its states and parameters. To improve its performance, we can also adjust these by calibrating it against the context of the LA county data.

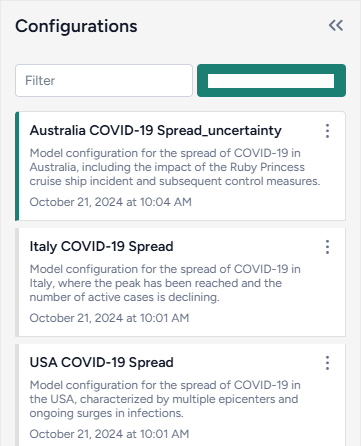

In this example, we'll work with an already existing model configuration, but normally you can manually create configurations based on your expert knowledge or automatically extract them from documents or datasets in your project.

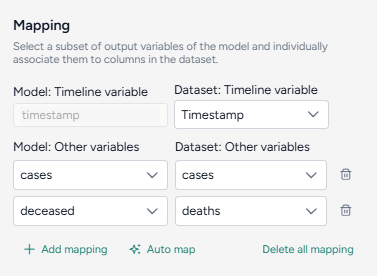

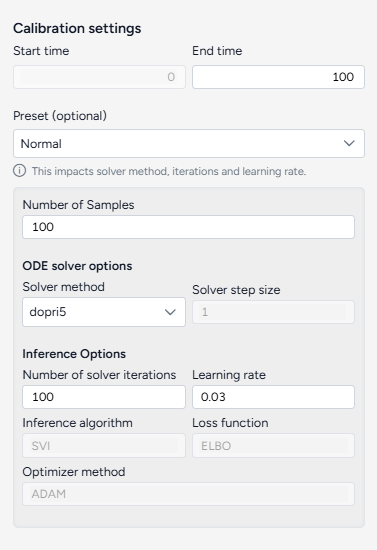

Calibrate the SEIRHD model to the LA county data

- Pipe the Configure model operator and the transformed dataset into a Calibrate operator and then click Open.

-

Map the model variables to the dataset variables:

- Set the Dataset: Timeline variable to timestep.

- Map model observables

cumulative casesanddeceasedto dataset variablescasesanddeathsrespectively.

-

Change the End time to 150 and click Run.

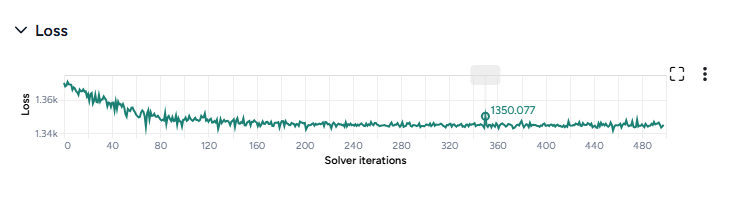

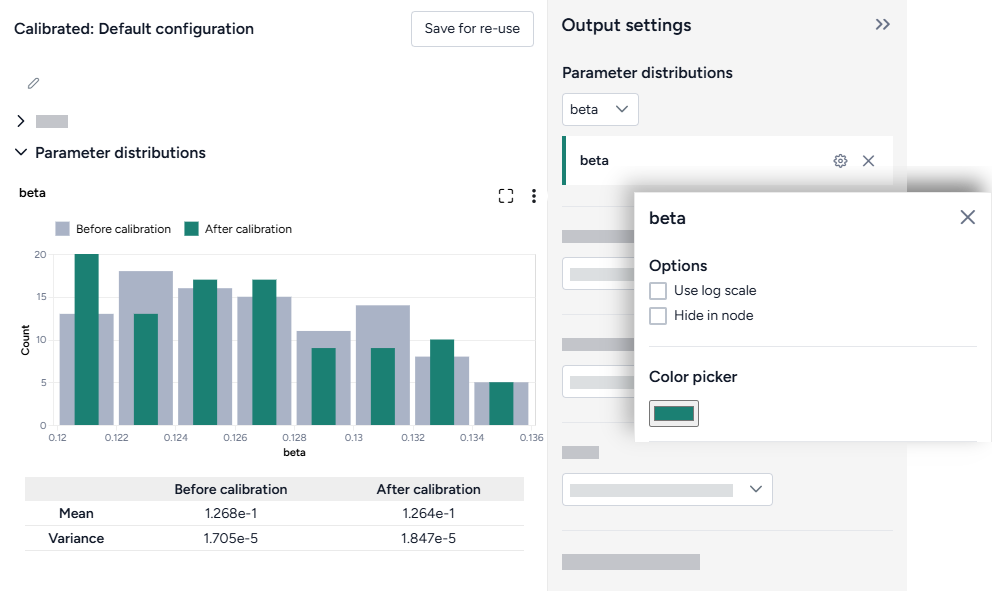

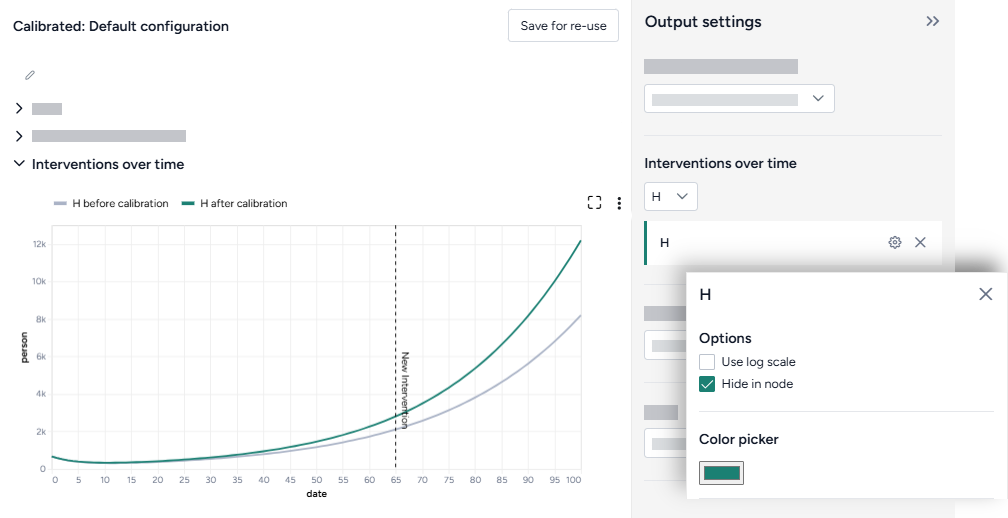

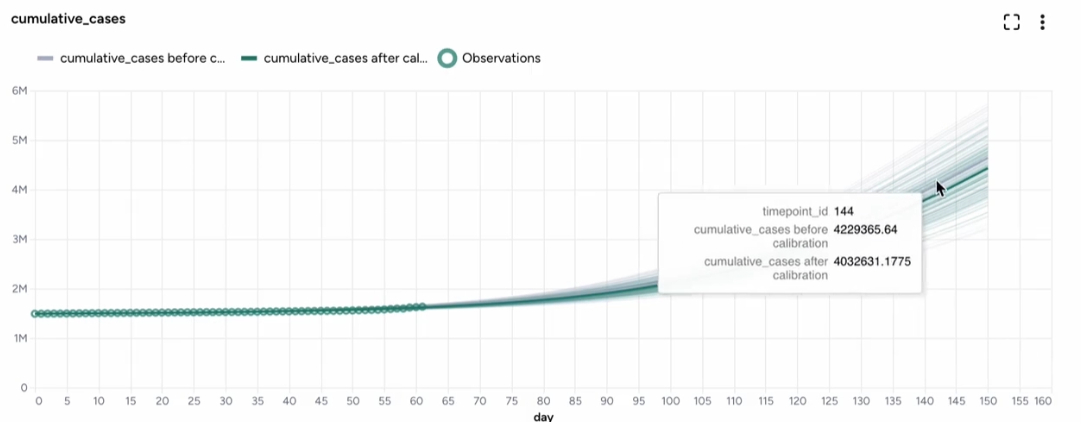

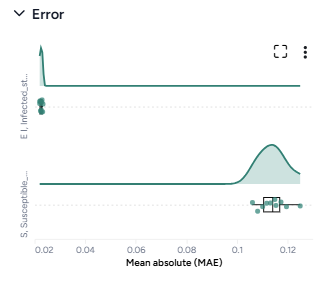

When you calibrate a model, you can review the following immediate visual feedback to help you spot issues quickly:

- A loss chart showing error over time.

- Cases and deaths data over time, with observations from the dataset and the projected number of cases before and after the calibration.

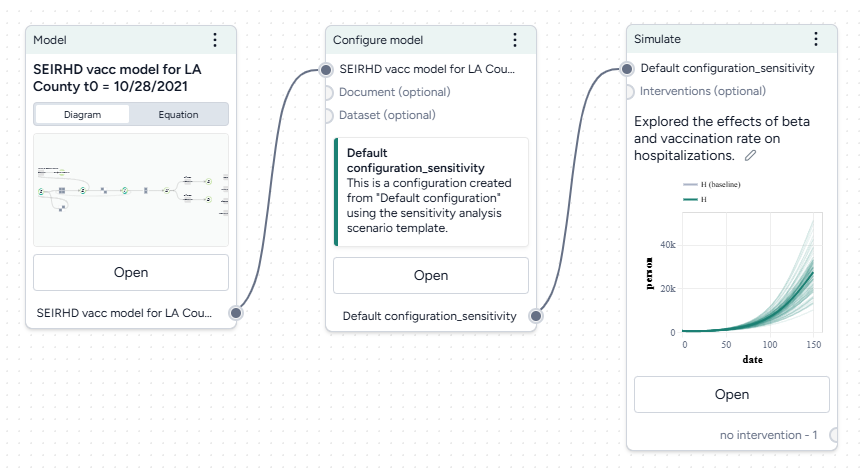

Run a sensitivity analysis¶

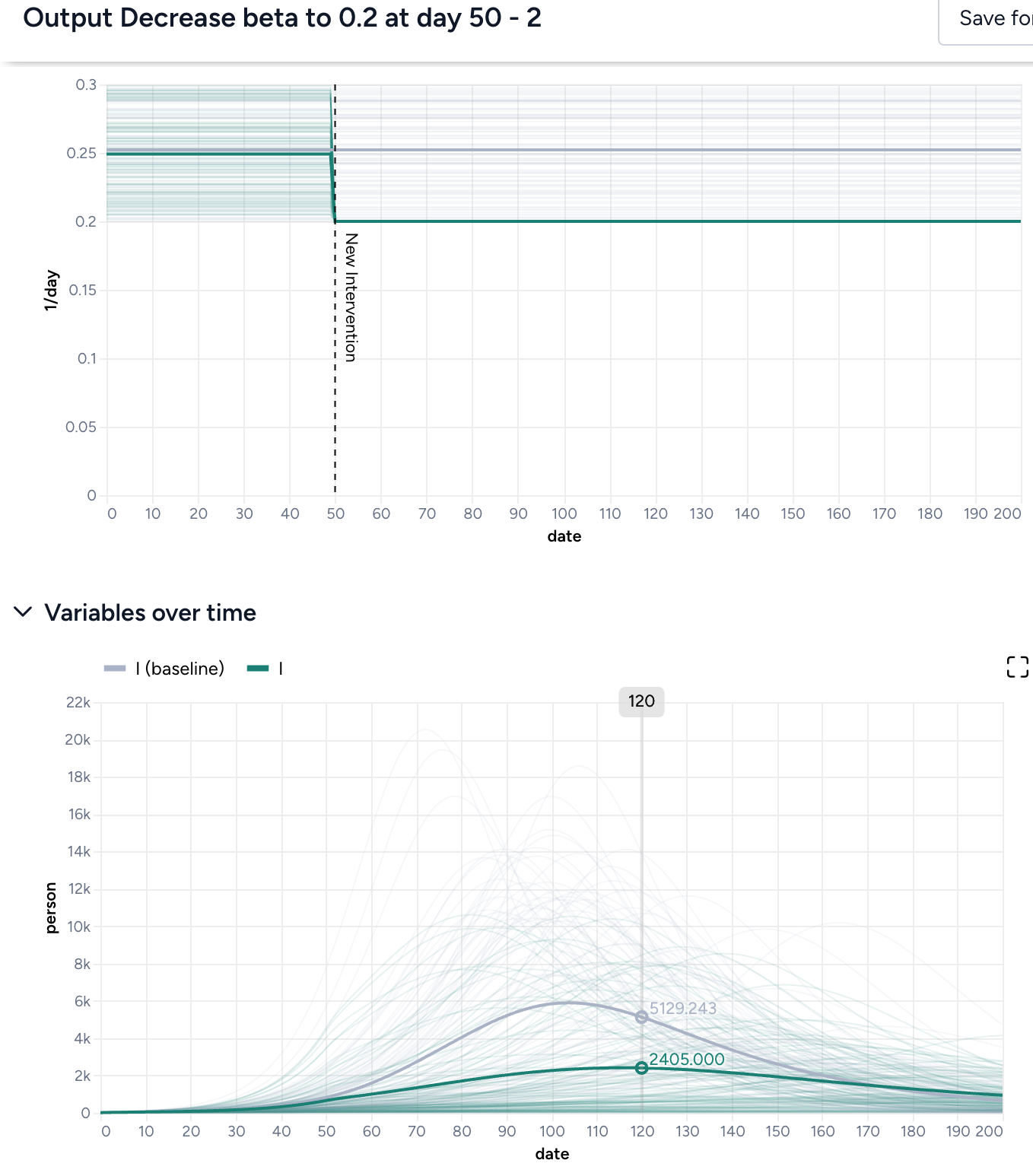

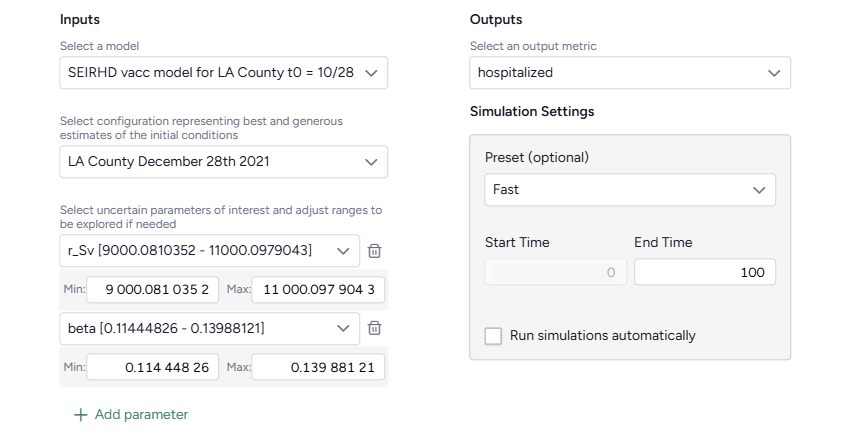

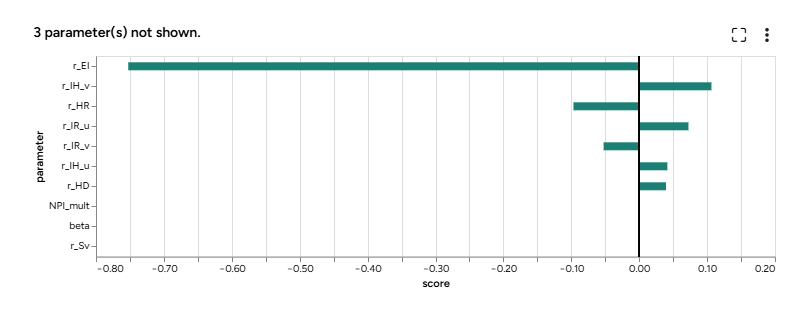

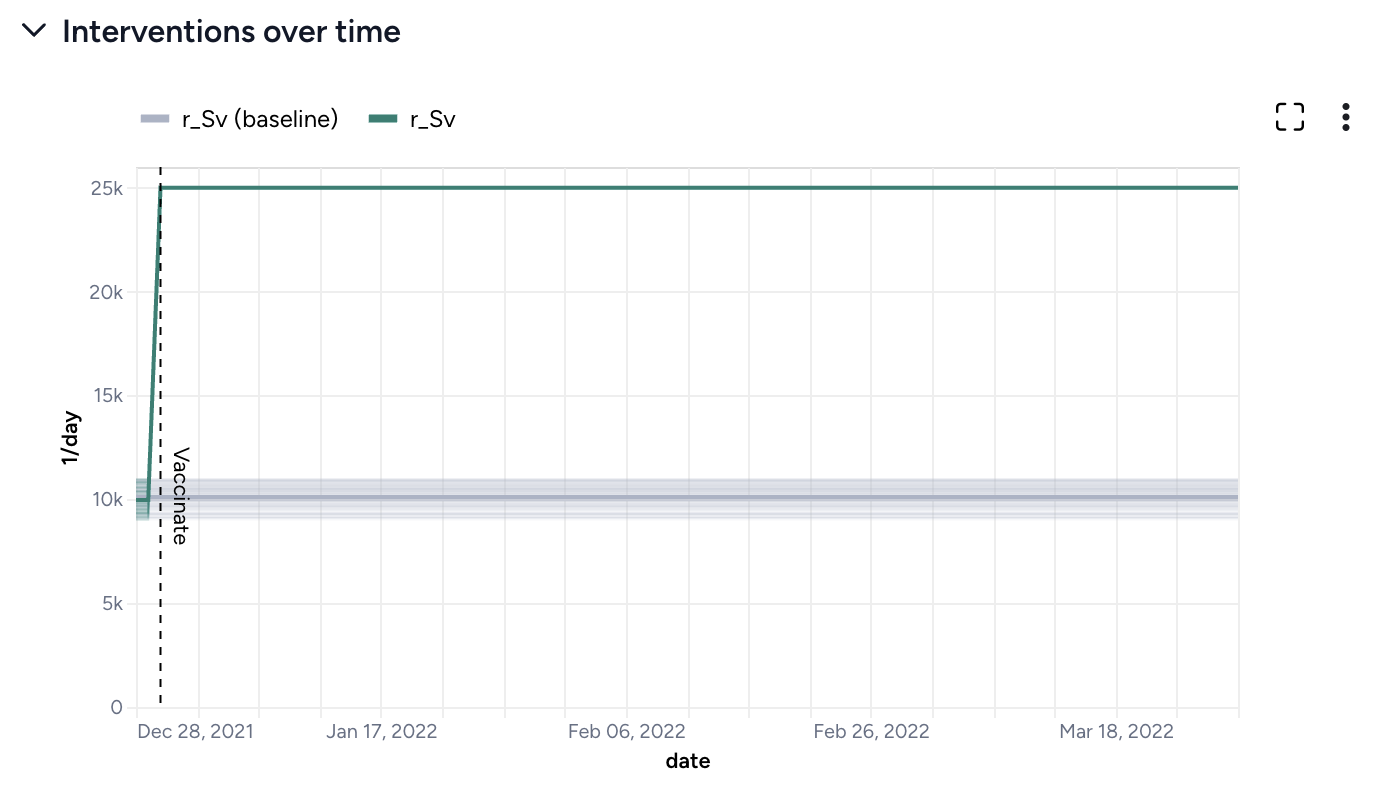

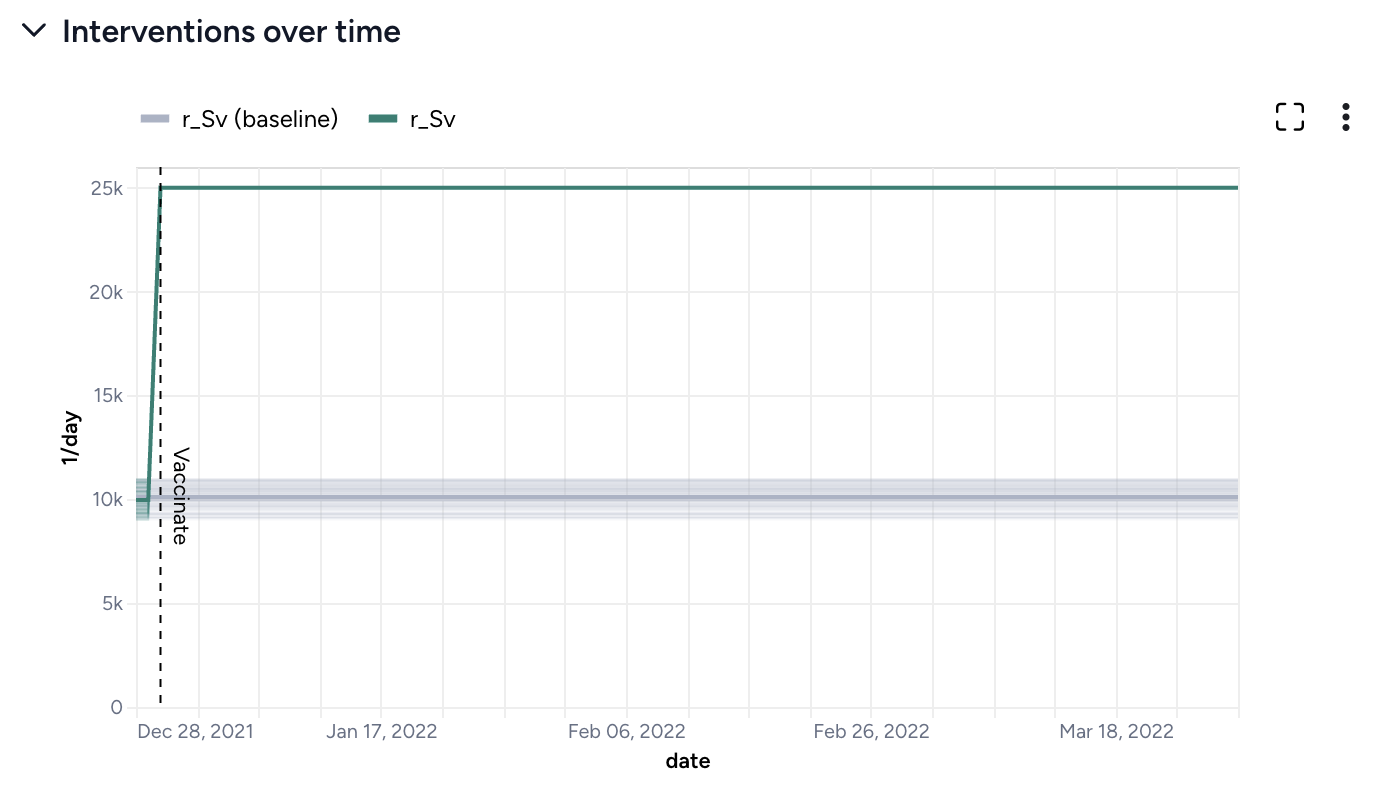

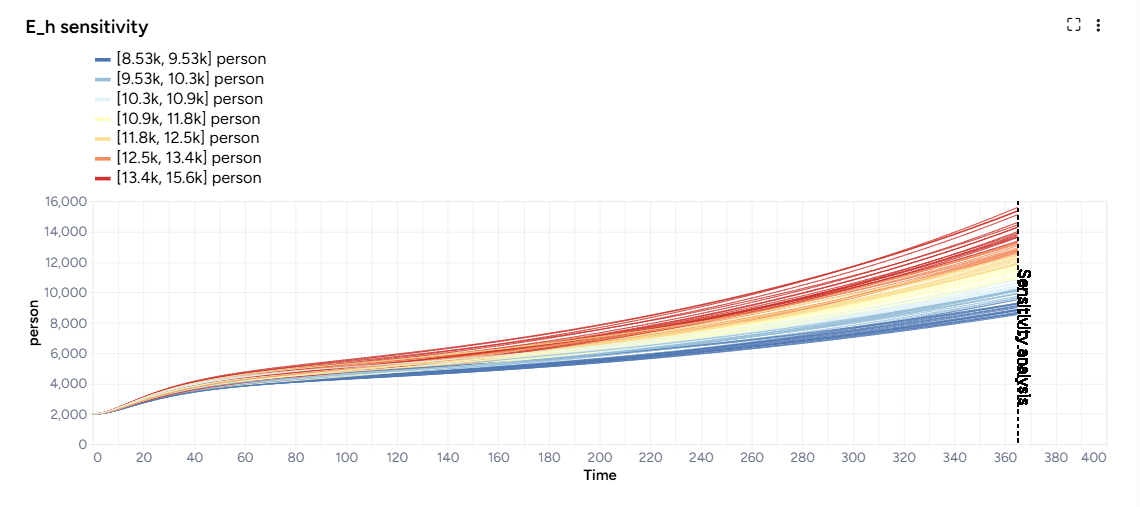

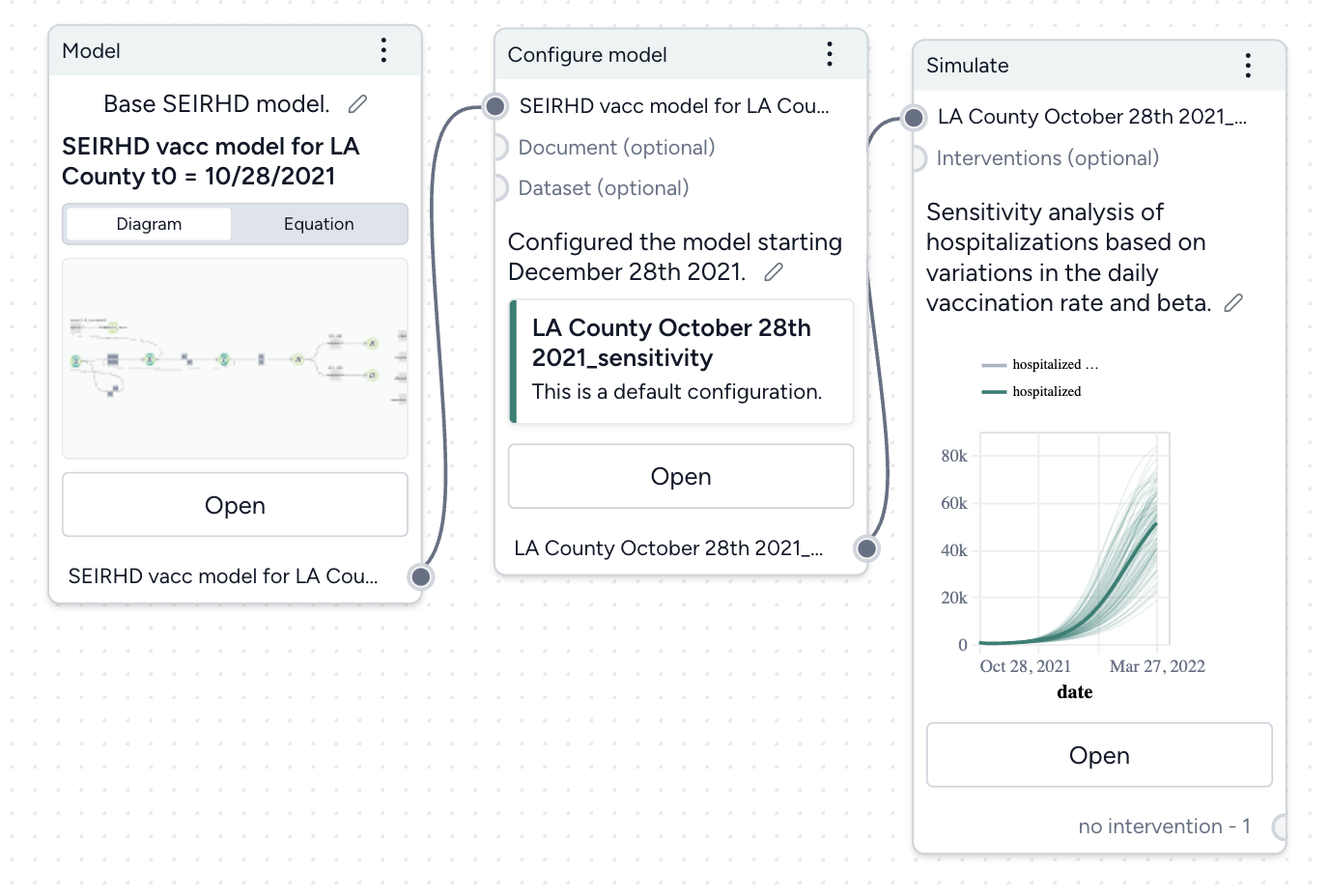

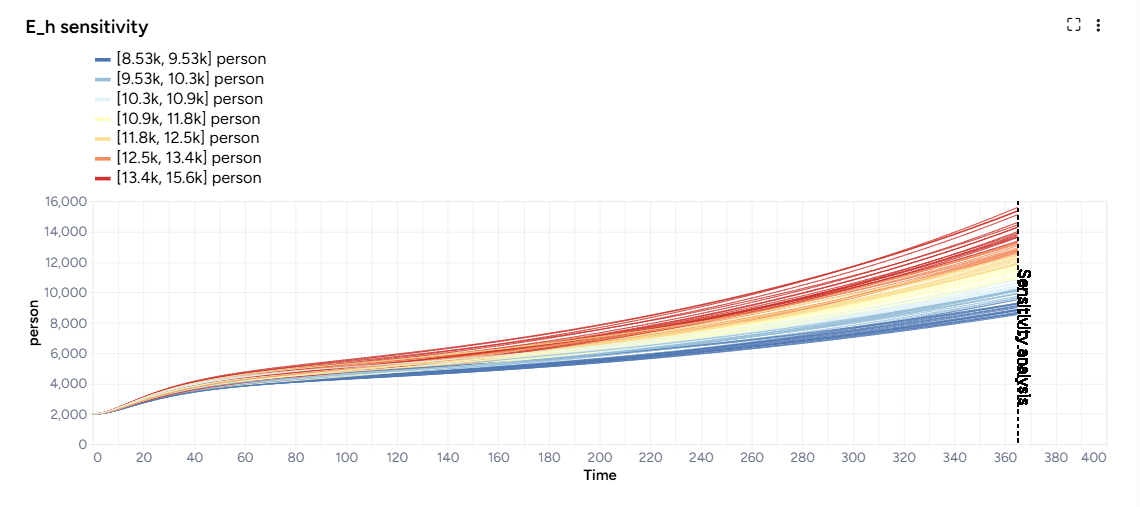

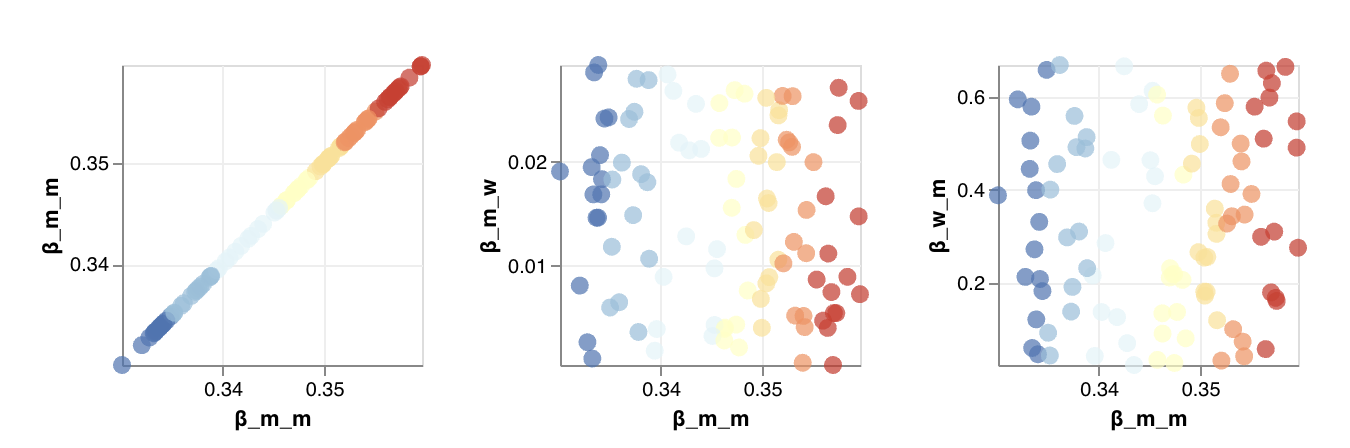

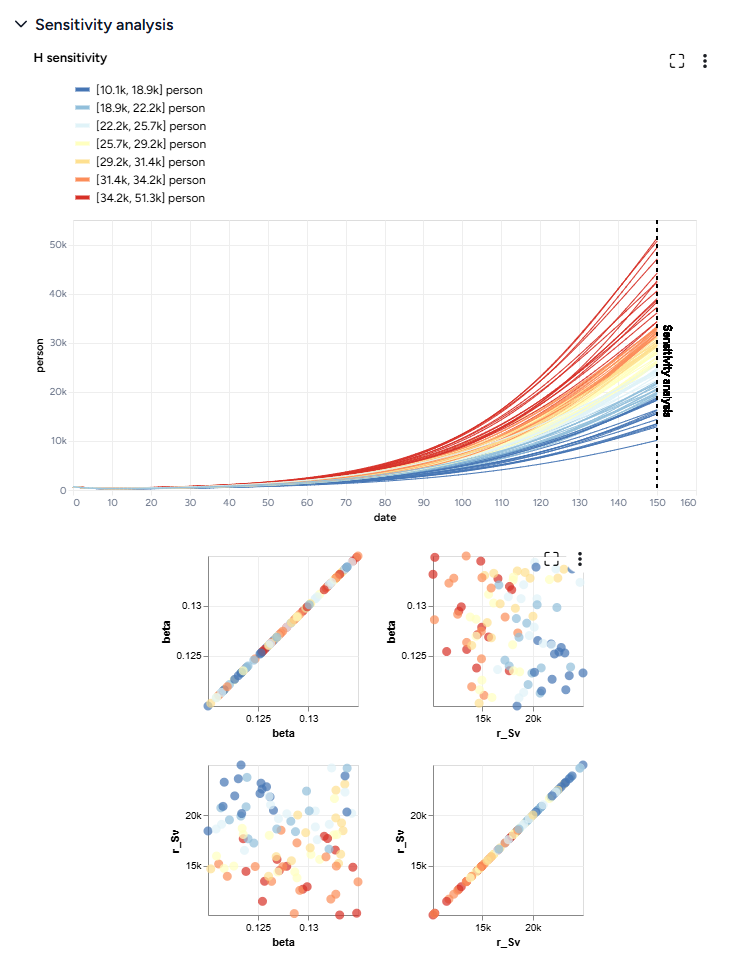

Next, we'll simulate our model configuration to perform a sensitivity analysis to explore the effects of infection rate (beta) and vaccination rate (r_Sv) on hospitalizations.

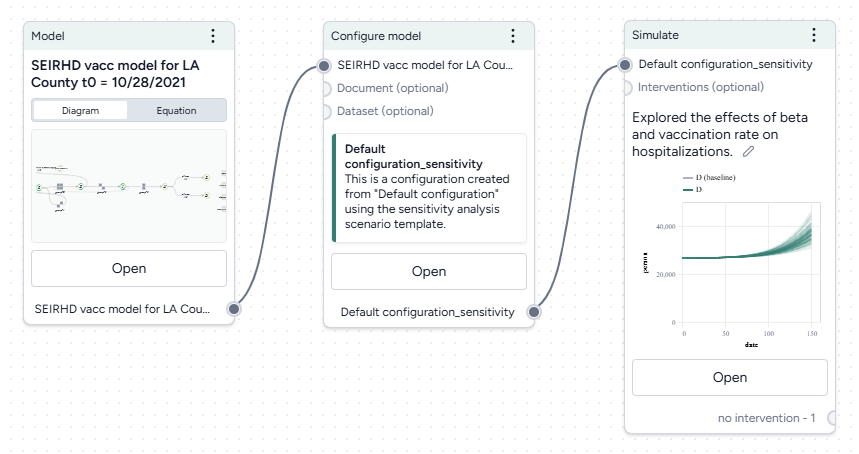

Run a sensitivity analysis with the Simulate operator

- Pipe the Configure model operator a Simulate operator and then click Open.

- Change the End time to 150 days and click Run.

The scatterplots below the sensitivity chart the parameters combine to affect hospitalizations shown in the chart above. Generally, high vaccination rates and low infection rates tend to reduce hospitalizations.

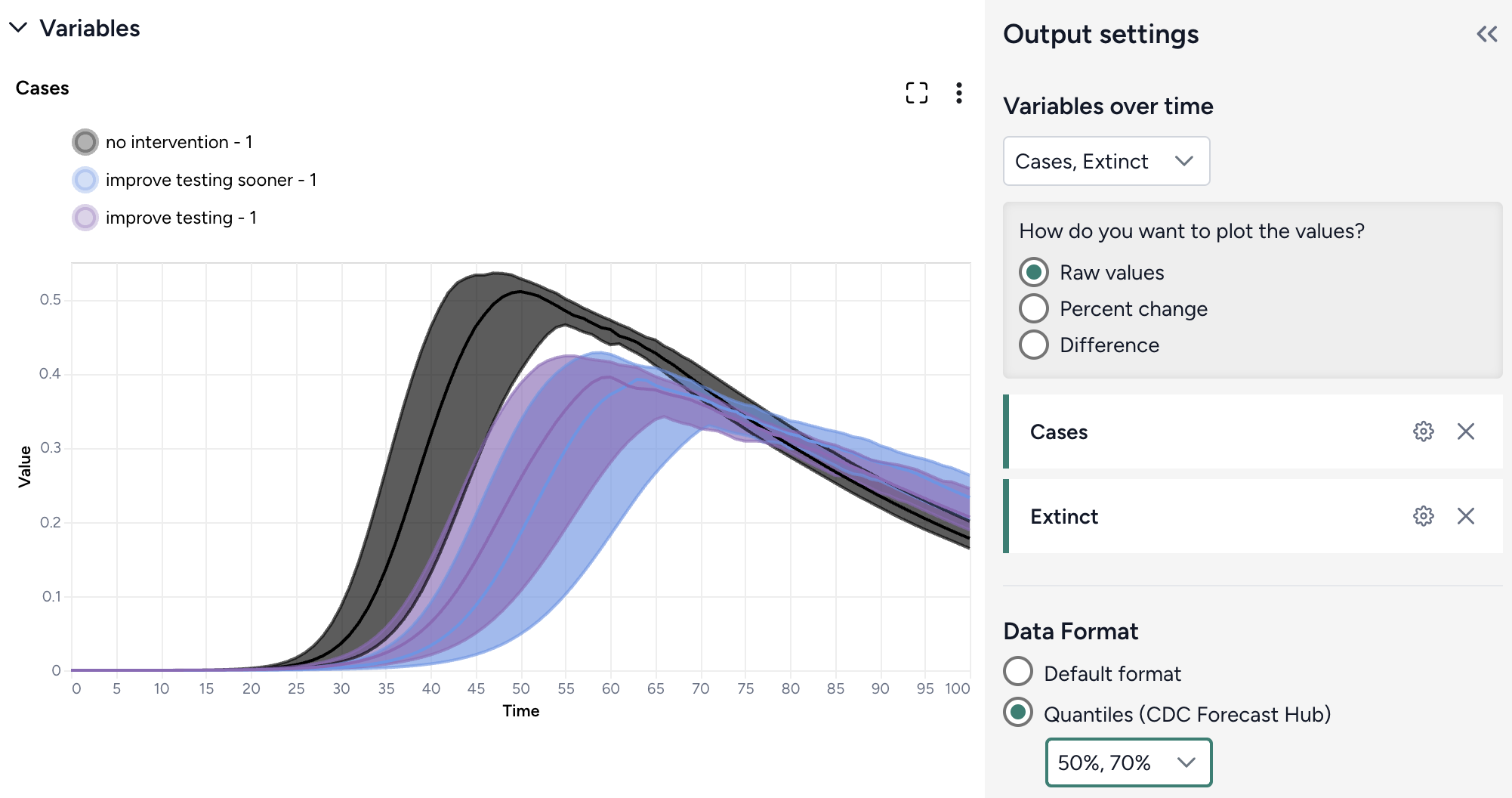

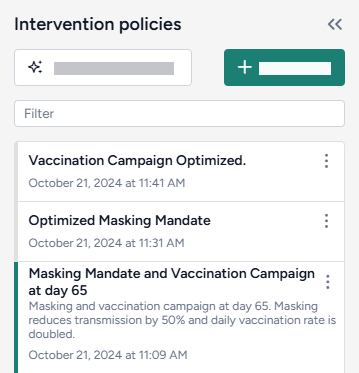

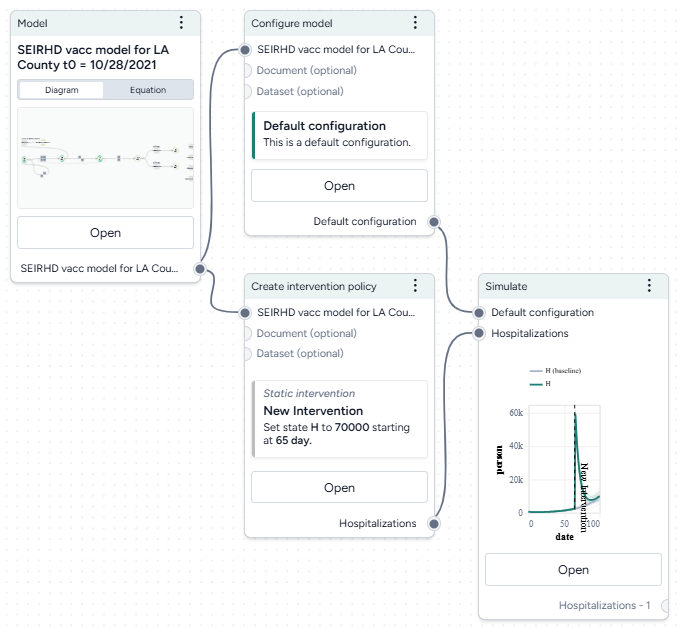

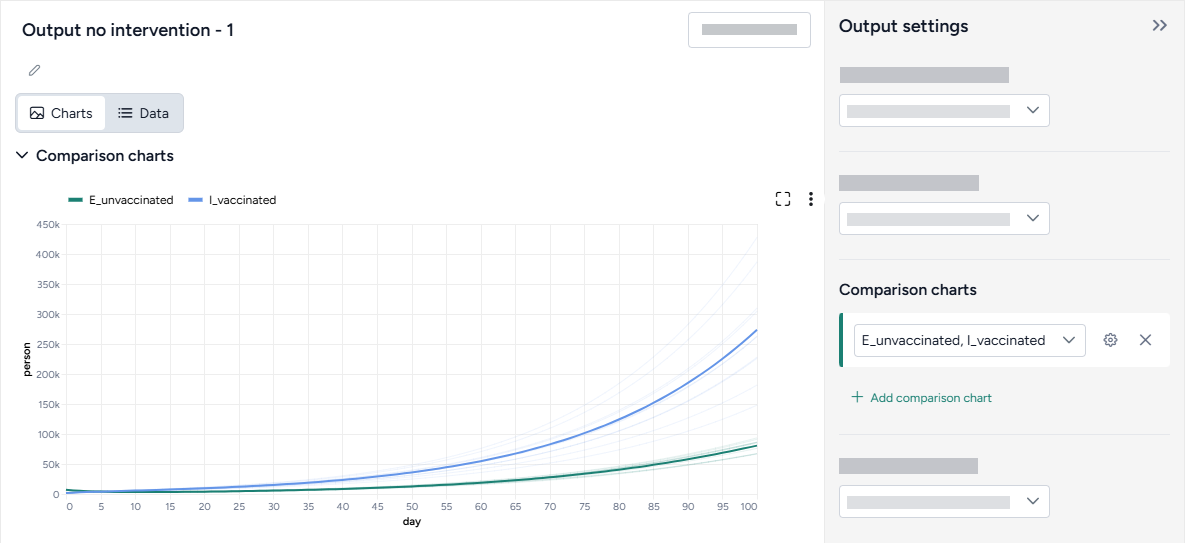

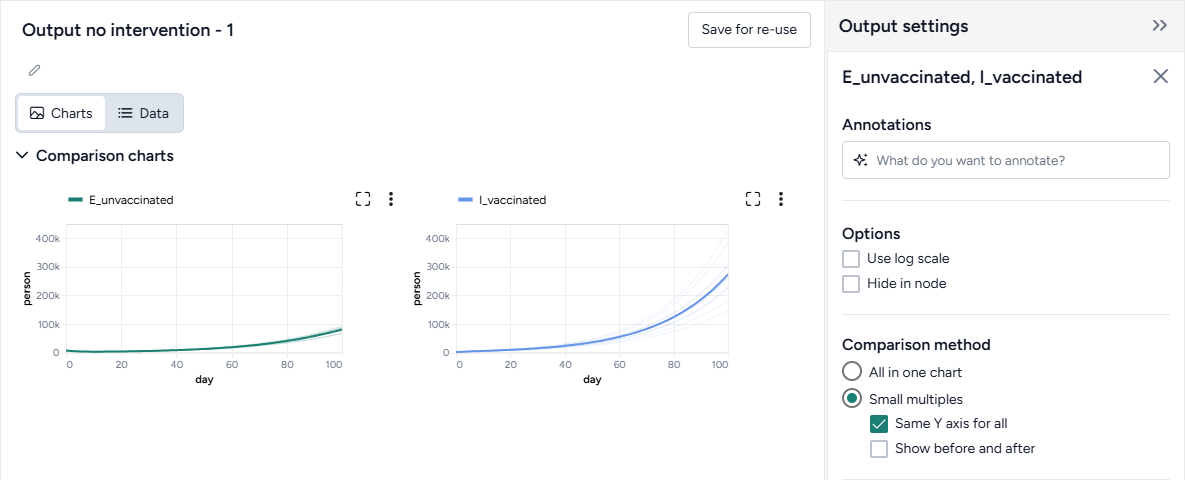

Create and simulate intervention policies¶

Our sensitivity analysis showed us the infection rates we should aim for to reduce hospitalizations. Now we can create different masking intervention policies to visualize the impact of different what-if masking scenarios that might get us there. For this, we'll use parameter NPI_mult, which is a multiplier for the transmission rate.

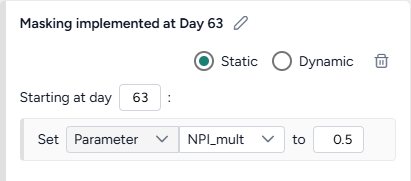

We'll create two policies:

- One that sets NPI_mult to 50% on day 63.

- One that sets to 50% only when hospitalizations cross 8,000.

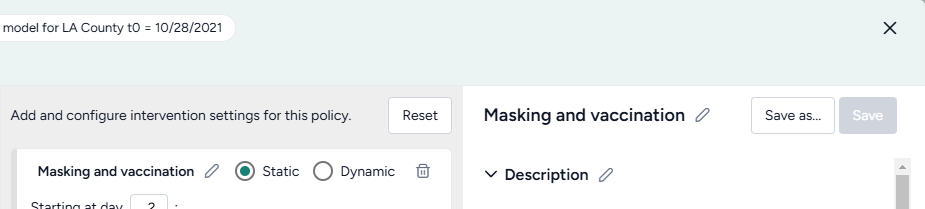

Create and simulate an intervention policy to increase masking

- Pipe the Configure model operator into two different Create intervention policy operators and then click Open.

- Set the intervention policies:

- On one policy, create a new Static intervention starting at day 63 that sets Paramater NPI_mult to 0.5.

- On the other, create a new Dynamic intervention that sets Paramater NPI_mult to 0.5 when hospitalizations (H) cross the threshold of 8,000.

- Save each intervention policy and give them unique names.

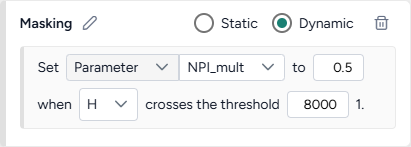

- Create three new Simulate operators:

- One the first, pipe in only the model configuration to get a baseline without interventions.

- On the second, pipe in the model configuration and the static intervention.

- On the third, pipe in the model configuration and the dynamic intervention.

- Open each Simulate operator, change the End time to 150 days, and click Run.

- Click Save for re-use to save each simulation result as a new dataset.

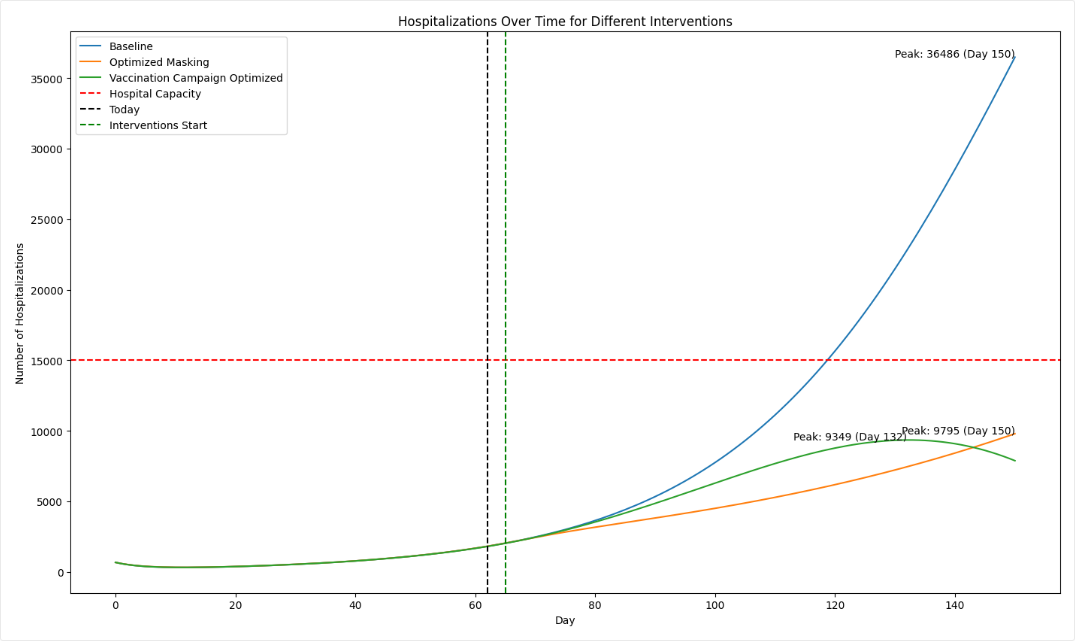

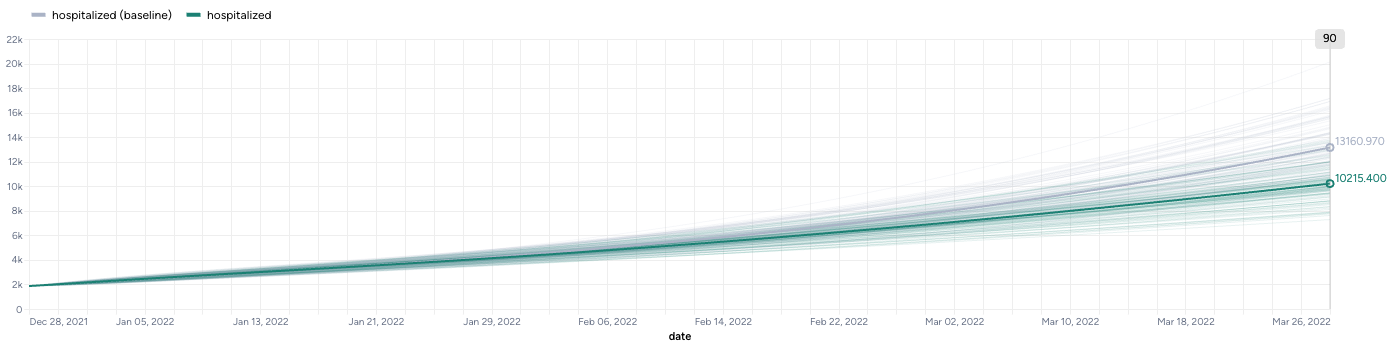

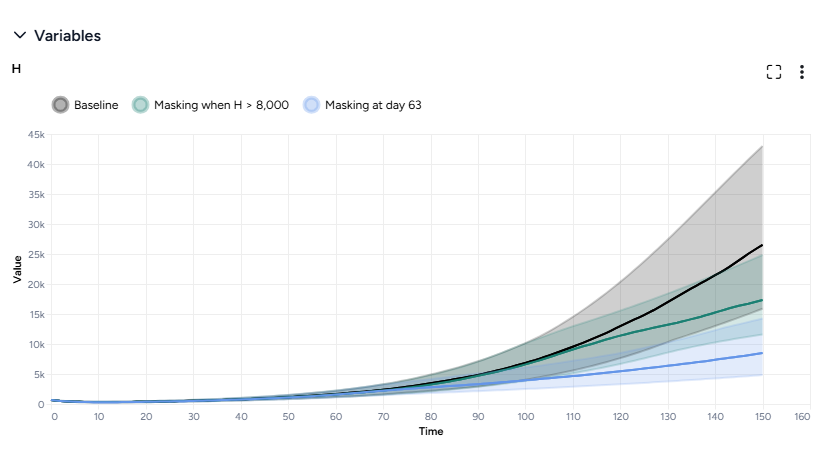

Both interventions reduce hospitalizations compared to the baseline, but the static intervention of introducing masking at day 63 is more effective than the dynamic intervention that waits for hospitalizations to reach 8,000.

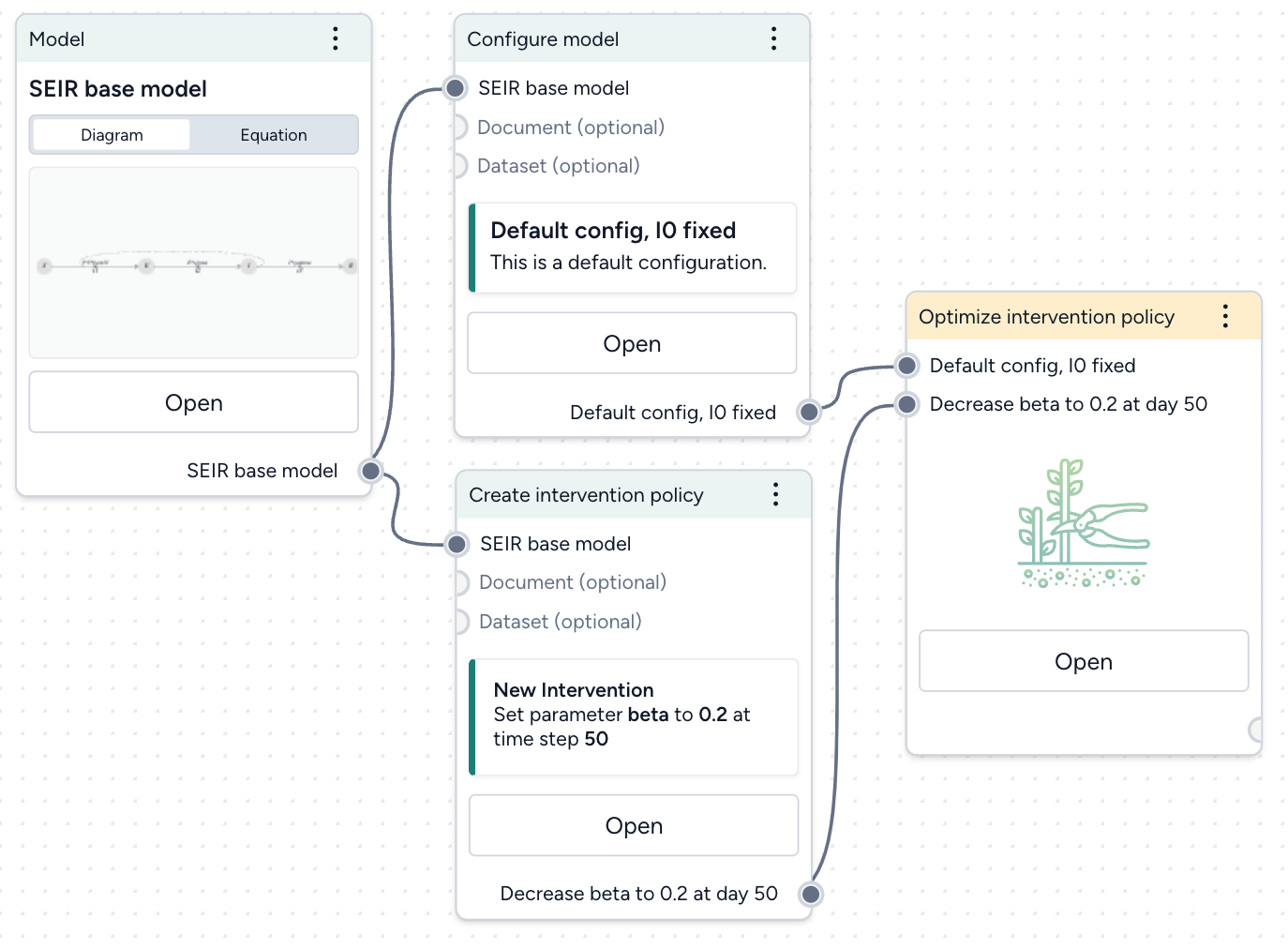

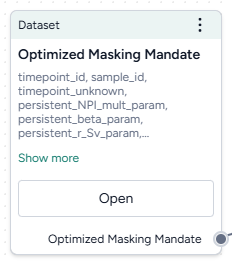

Optimize intervention policies¶

In Terarium, you can optimize interventions to meet specified constraints, allowing you to get answers to key decision maker questions faster. We want to find how effective masking needs to be to prevent hospitalizations from exceeding capacity.

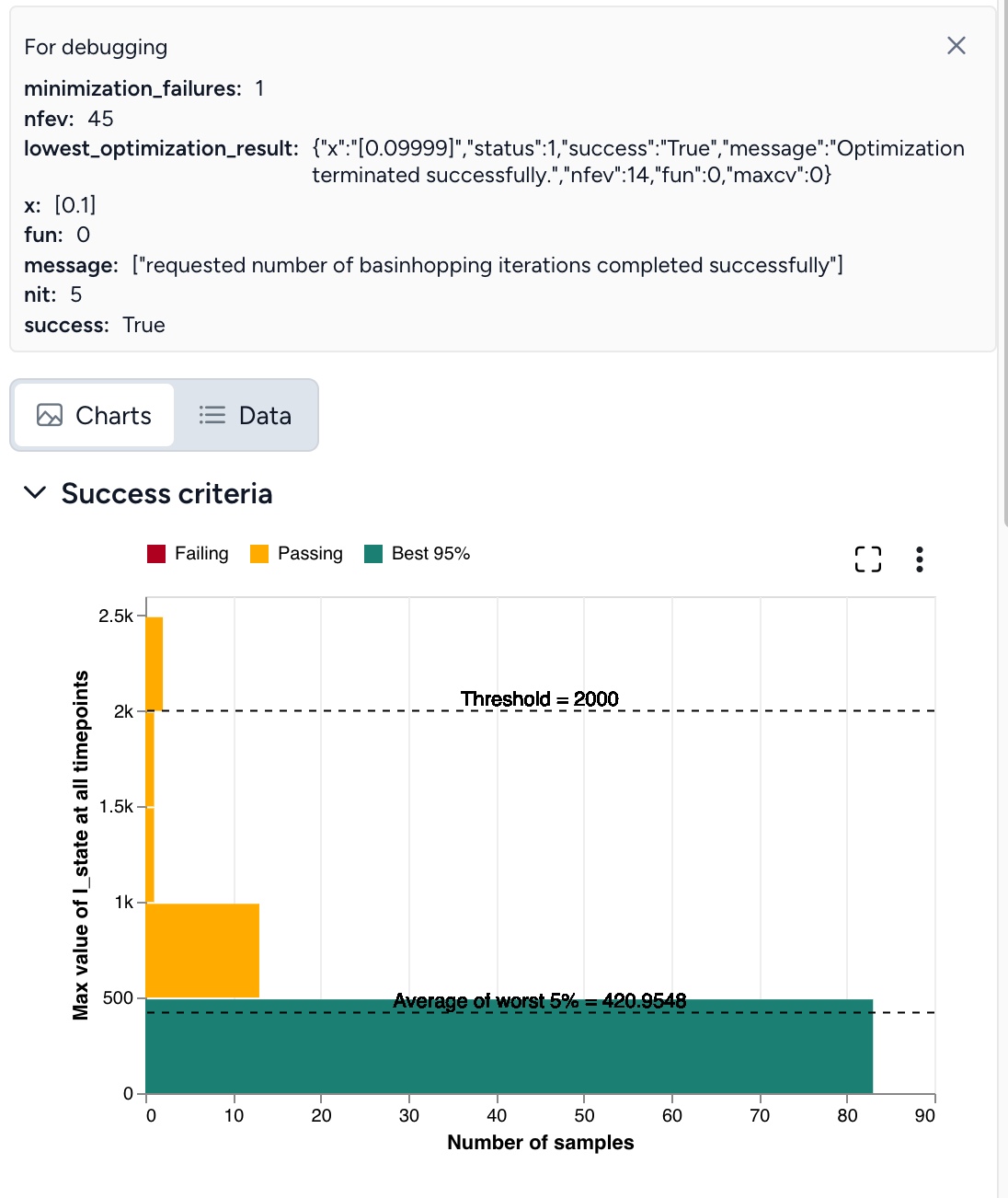

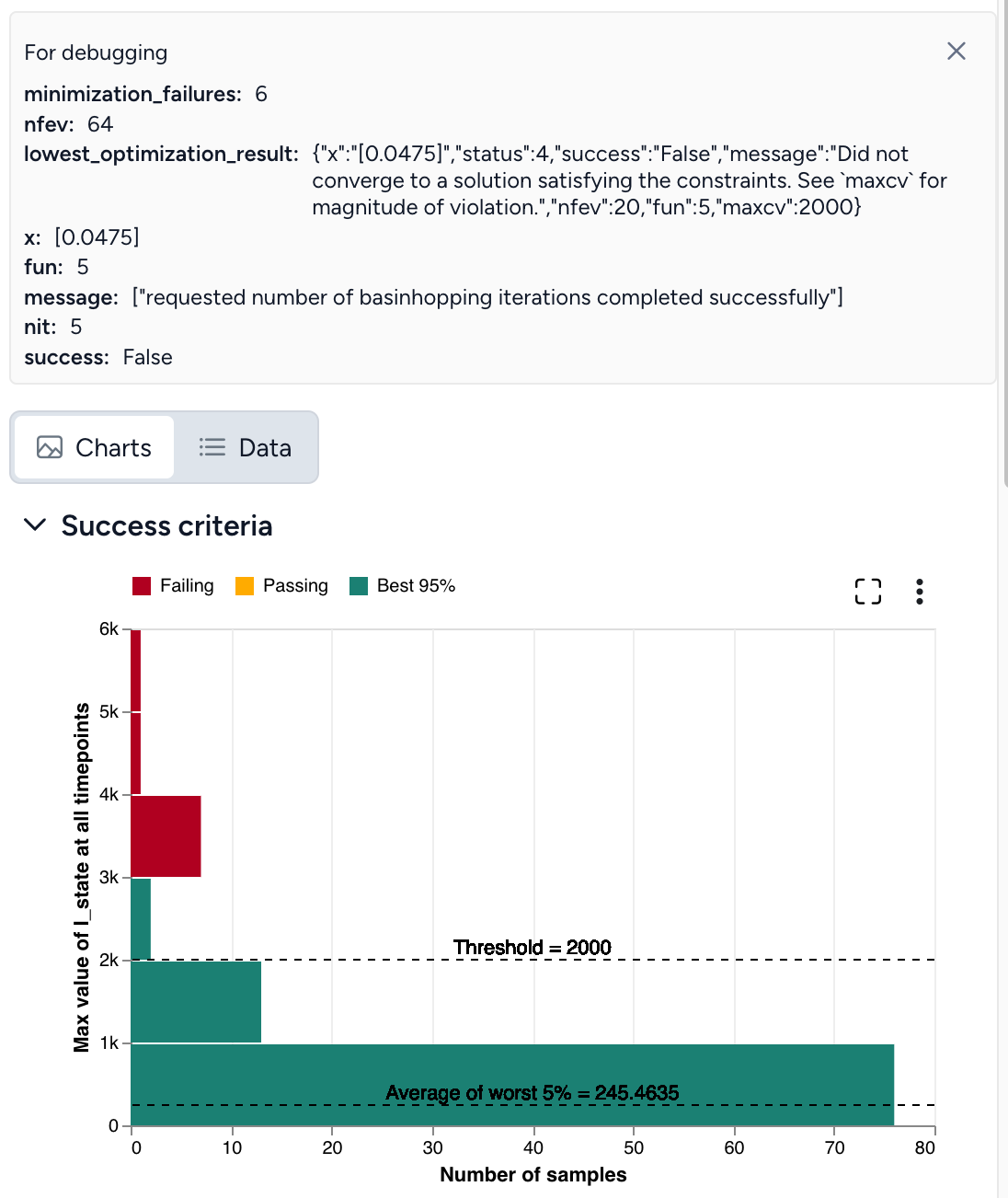

Optimize the intervention policy to find how effective masking needs to be to reduce hospitalizations

- Pipe the static intervention and model configuration into an Optimize intervention policy operator and click Open.

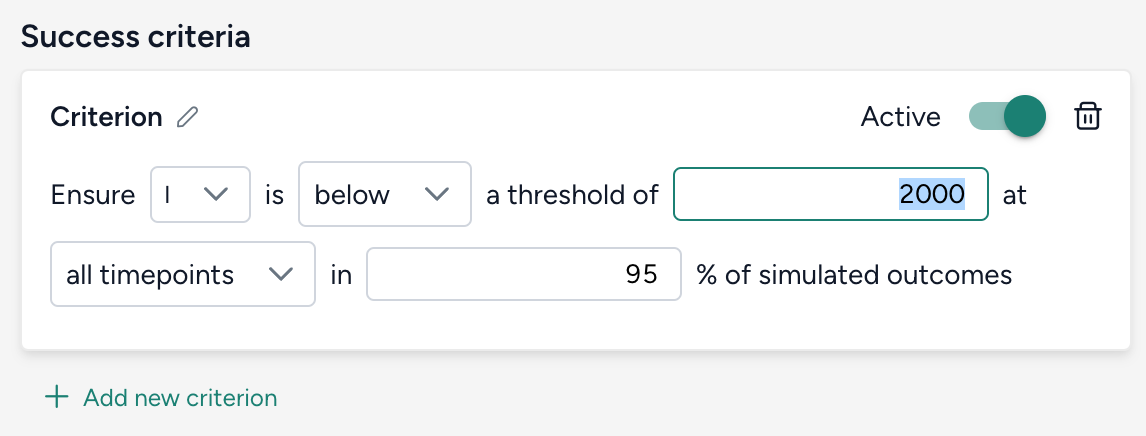

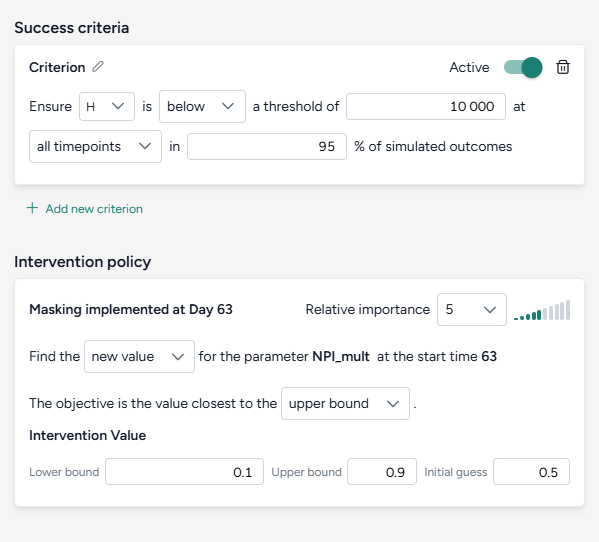

-

Set the success criteria:

- Ensure hospitalizations (H) are below 10,000 at all timepoints in 95% of simulated outcomes.

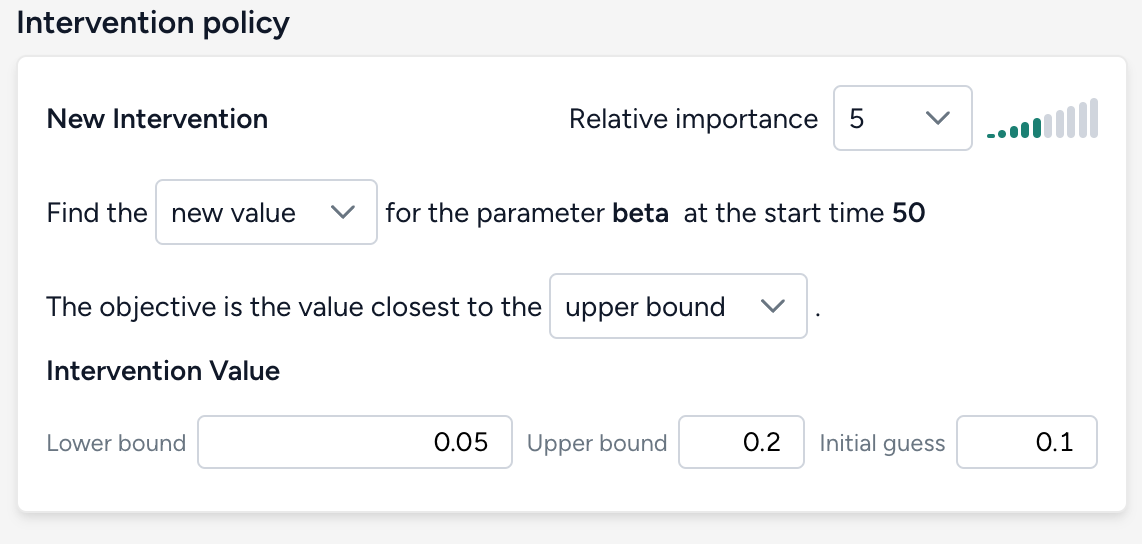

-

Specify a new intervention:

- Find a new value for the parameter NPI_mult with the objective being closest to the upper bound of the range from 10–90%.

- Set the initial guess to 50%.

-

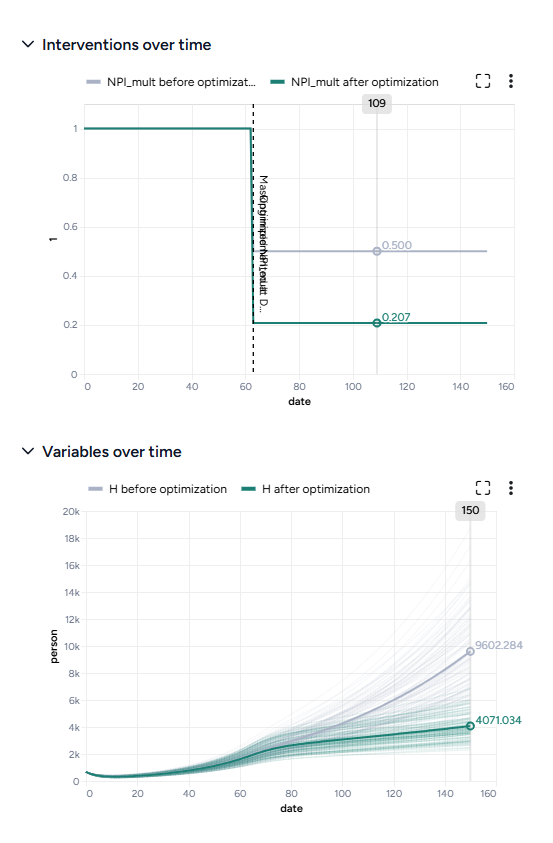

Simulate for 150 days and click Run.

By simulating the optimized intervention policy, you can see how the estimates of masking compliance affect peak hospitalizations:

- Initial guess: NPI_mult of 50%, which leads to peak hospitalizations of 9,602.

- Optimization: NPI_mult of 20.7%, which leads to peak hospitalizations of 4,071.

Compare datasets¶

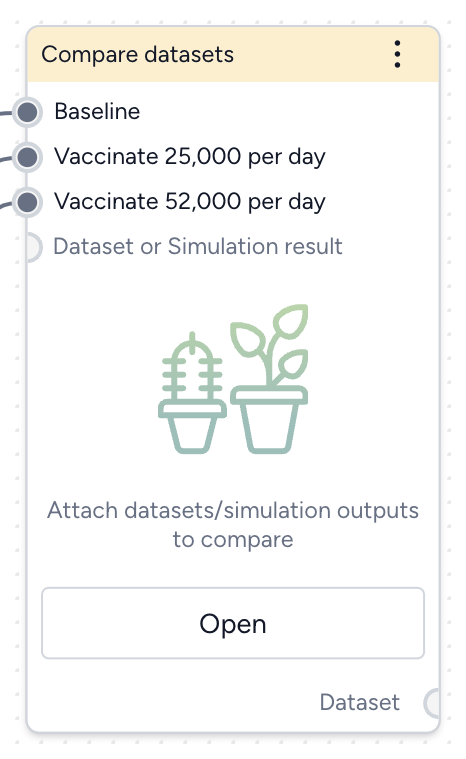

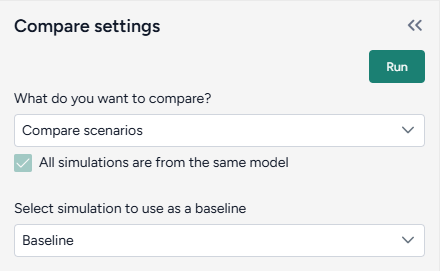

Finally, you can take the results of your simulations, interventions, and optimizations and compare them to see which works best at reducing hospitalizations. The Compare datasets operator lets you compare scenarios based on the various simulation results you've generated.

Optimize the intervention policy to find how effective masking needs to be to reduce hospitalizations

- Pipe the datasets you created from simulating your different intervention policies into a Compare datasets operator and click Open.

- Select Compare scenarios, choose the baseline dataset, and click Run.

- In the Output settings, select H (hospitalizations) to plot the variable over time for each of the datasets.

You can see the effectiveness of the different intervention policies on a single plot. Starting masking at day 63 is most effective at reducing hospitalizations.

What's next?¶

We've completed the sample SEIRHD model workflow! You now have the tools you need to start uploading, transforming, and simulating models and model resources in Terarium.

Instructional videos¶

The following videos show how to use Terarium to, for example:

- Create models.

- Edit models.

- Work with data.

- Run what-if scenarios.

- Calibrate models.

- Create, simulate, and optimize intervention policies.

- Stratify models.

Introduction¶

Working with data¶

Creating a model from equations¶

Editing a model¶

Calibration¶

Simulating intervention policies¶

Optimizing intervention policies¶

Stratification¶

Ended: Get started

Gather modeling resources¶

With Terarium, you can gather, store and manage resources needed for your modeling and simulation workflows. You can pull in documents, models, and datasets from:

Documents, models, and datasets appear in your project resources. You can transform and simulate them by dragging them into a workflow.

Upload resources¶

Using the Resources panel, you can import resources of the following types:

-

Documents

- PDF files (.PDF)

- Markdown files (.MD)

- Text files (.TXT)

Note

Uploaded documents run through an extraction process that, depending on the size of the PDF, may take some time.

-

Models

- PetriNet models in Systems Biology Markup Language (SBML) format (.XML or .SBML)

- Terarium model and model configuration formats (.JSON and .modelconfig)

- StockFlow models in Vensim format (.MDL)

- StockFlow models in Stella formats (.XMILE, .ITMX, .STMX)

-

Datasets

- Comma-separated values (.CSV)

- NetCDF (.NC)

Upload resources

- Do one of the following actions:

- Drag your files into the Resources panel.

- Click Upload and then click open a file browser to navigate to the location of the files you want to add.

- Click Upload.

PDF Extraction¶

Most resources you upload are available for use right away. When you upload a PDF document however, Terarium begins extracting any linear ordinary differential equations it finds in the text. Depending on the size of the PDF, this process can take some time.

Note

The extractor isn't optimized to handle every way that equations can represent models. Before using any extracted equations to create a model from equations, check and edit them if necessary.

Check the status of a PDF extraction

- Click Notifications .

Search for and copy resources from other projects¶

You can get resources by copying them from other projects in Terarium. If you know their location, you can get them directly from the source project. Otherwise, use the project search on the home page to find relevant resources.

Find projects containing resources of interest

The project search finds projects and resources by keyword. Keywords are checked against the names of projects and resources such as models, datasets, documents, model configurations, intervention policies, and workflows.

- Click the Terarium logo to return to the home page.

- Enter your keywords in the search field and press Enter.

- In the results, click the project name to view the source project overview or click the resource name to open it.

Get a model or dataset from another project

- Open the project that contains the model or dataset.

- Open the model or dataset by clicking its name in the Resources panel.

- Next to the model or dataset name, click > Add to project and select your project.

Get a document from another project

- Open the project that contains the document.

- Open the document by clicking its name in the Resources panel.

- Click > Download this file and save it to your computer.

- Open your project and reupload the document.

Build a workflow graph¶

A workflow is a visual canvas for building and running complex operations (calibration, simulation, and stratification) on models and data.

Create a new blank workflow

- In the Resources panel, click New in the Workflows section.

- Enter a name for the workflow and click Create.

Create a new workflow based on a template

- In the Resources panel, click New in the Workflows section.

- Select the template for the type of workflow you want to create.

-

Enter a name for the workflow, set the required inputs and outputs, and then click Create.

Note

Before you can use a template, your project must contain the inputs (models, model configurations, or datasets) you want to use.

Create new workflows based on templates¶

The following workflow templates streamline the process of building common modeling workflows. They provide preconfigured and linked resources and operators tailored to your objectives, such as analyzing uncertainty, forecasting potential outcomes, or comparing intervention strategies.

Note

- Before you can fill out a template, your project must contain the inputs (models, model configurations, or datasets) you want to use.

- Before you can see the results of a templated workflow, you must configure and run any Calibrate, Simulate, or Compare datasets operators it contains.

- You can create new intervention policies with the template. Once the workflow is created, you must create the Create intervention policy operator and add your intervention criteria.

Situational awareness

Use this template to determine what's likely to happen next. For example, you can:

- Anticipate the arrival of a new variants.

- Evaluate the potential impact of growing vaccine hesitancy and declining Non-Pharmaceutical Interventions (NPIs).

Fill out the Situational awareness template

To use the Situational awareness template, select the following inputs and outputs:

-

Inputs

- Model

- Model configuration

- Dataset

-

Outputs

- Metrics (model states) of interest

Complete the Situational awareness workflow

The new workflow first calibrates the model to historical data to obtain the best estimate of parameters for the present. Then it forecasts the model into the near future. To see the results, you first need to open the Calibrate operator and:

- Map the model variables to the dataset columns.

- Run the calibration.

This creates:

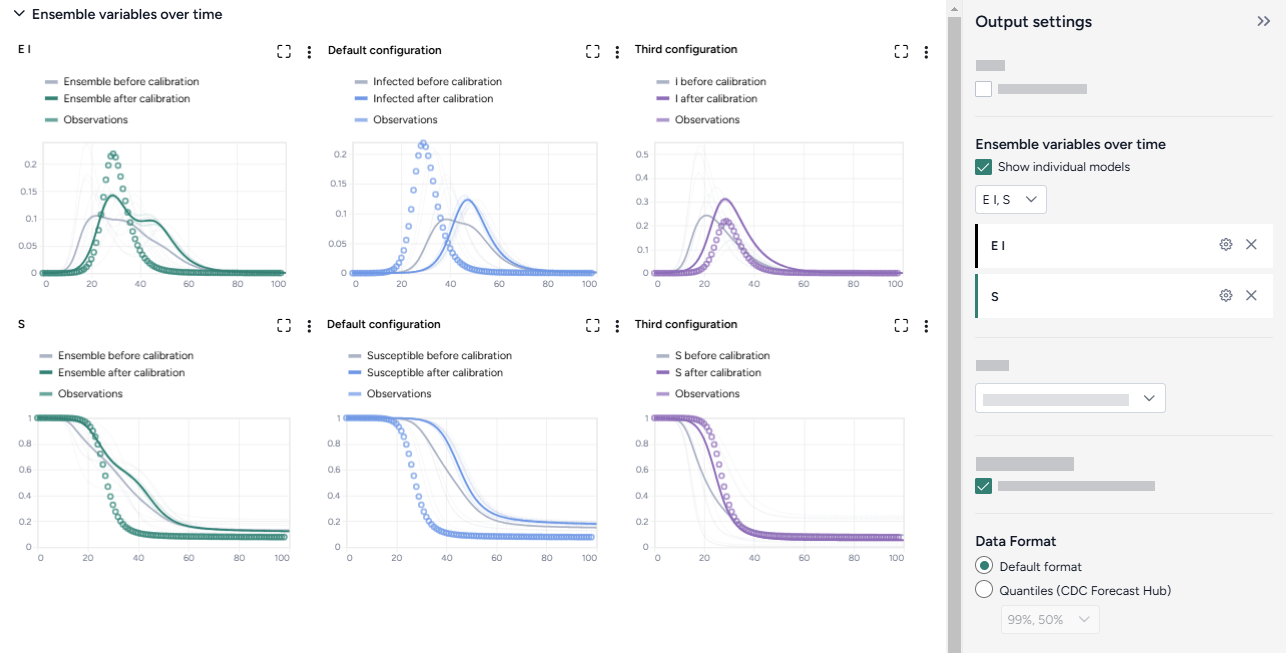

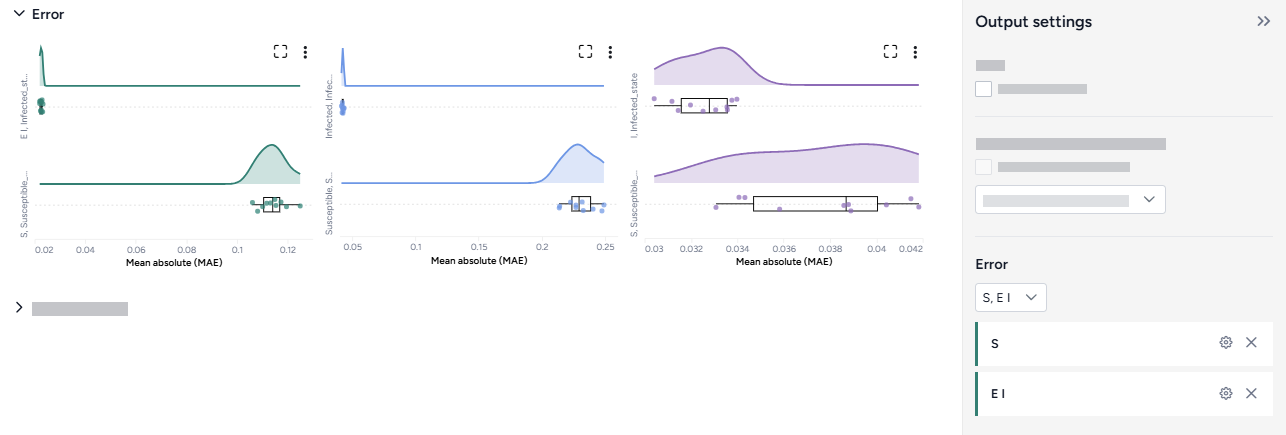

- Charts comparing the selected model states before and after calibration with observations from the dataset.

- A new model calibrated to the dataset.

Sensitivity analysis

Use this template to determine which model parameters introduce the most uncertainty in your outcomes of interest. For example, you can explore:

- Unknown severity of new variant.

- Unknown speed of waning immunity.

Fill out the Sensitivity analysis template

To use the Situational awareness template, select the following inputs and outputs:

-

Inputs

- Model

- Model configuration

- One or more uncertain parameters of interest and the ranges to explore

- Simulation settings (optional)

-

Outputs

- Metrics (model states) of interest

Complete the Sensitivity analysis workflow

The new workflow first configures the model with parameter distributions that reflect all the sources of uncertainty. Then it simulates the model into into near future. To see the results, you first need to open the Simulate operator, edit any settings, and run it. This creates:

- A sensitivity analysis chart for each selected model state and pairwise comparison charts for each selected parameter.

- A simulation results dataset.

Decision making

Use this template to determine the impact of different interventions. For example, you can find:

- The impact of several combinations of vaccination and Non-Pharmaceutical Interventions (NPIs) levels.

- Whether it's better to implement an intervention in all locations, select locations, or not at all.

Fill out the Decision making template

To use the Decision making template, select the following inputs and outputs:

-

Inputs

- Model

- Model configuration

- One or more intervention policies

- Simulation settings (optional)

-

Outputs

- Metrics (model states) of interest

Complete the Decision making workflow

The new workflow first runs simulations for the baseline (no intervention) and each intervention policy. It then compares the relative impact of each intervention policy to the baseline. To see the results, you first need to:

- Open and run each Simulate operator.

- Open and run the Compare datasets operator.

This creates a comparison of the simulated baseline and intervention policies.

Horizon scanning

Use this template to determine how extreme scenarios impact the outcome of different interventions. For example, you can explore:

- Potential emergence of a new variant.

- Rapidly waning immunity.

Fill out the Horizon scanning template

To use the Horizon scanning template, select the following inputs and outputs:

-

Inputs

- Model

- Model configuration

- One or more uncertain parameters of interest and the ranges to explore

- One or more intervention policies (optional)

- Simulation settings (optional)

-

Outputs

- Metrics (model states) of interest

Complete the Horizon scanning workflow

The new workflow first configures the model to represent the cartesian product of the extremes of uncertainty for some parameters. It then simulates into the near future with different intervention policies and compares the outcomes. To see the results, you first need to:

- Open and run each Simulate operator.

- Open and run the Compare datasets operator.

This creates a comparison of the simulated extreme scenarios.

Value of information

Use this template to determine how uncertainty impacts the outcomes of different interventions. For example, you can determine whether:

- Uncertainty in severity changes the priority of which group to target for vaccination.

- Disease severity impacts the outcome of different social distancing policies.

Fill out the Value of information template

To use the Value of information template, select the following inputs and outputs:

-

Inputs

- Model

- Model configuration

- One or more uncertain parameters of interest and the ranges to explore

- One or more intervention policies

- Simulation settings (optional)

-

Outputs

- Metrics (model states) of interest

Complete the Value of information workflow

The new workflow first configures the model with parameter distributions that reflect all the sources of uncertainty. It then simulates into the near future with different intervention policies. To see the results, you first need to:

- Open and run each Simulate operator.

- Open and run the Compare datasets operator.

This creates a comparison of the uncertainty across the different interventions.

Reproduce models from literature

Use this template to reproduce models from literature and then compare them to find the best starting point. For example, you can determine whether:

- The results from a paper are reproducible.

- The best model from a group of recent papers to explore disease transmission.

Fill out the Reproduce models from literature template

To use the Reproduce models from literature template, select the following inputs and outputs:

-

Inputs

- One or more documents

- A brief description of your goal for comparing the resulting models (optional)

-

Outputs

- New models extracted from the documents

- Model configurations for each model

- Comparison of the models tailored to your goal

- Simulation results for the selected model configurations

Complete the Reproduce models from literature workflow

The new workflow first extracts the models from the documents. It then compares the models according to your goal and configures and simulates them into the near future. To see the results, you first need to:

- Open each Create model from equations operator, select the relevant equations from the paper, and run the operator to create the model.

- Open and run the Compare datasets operator.

- Open and edit the Configure model operators.

- Open and run the Simulate operators.

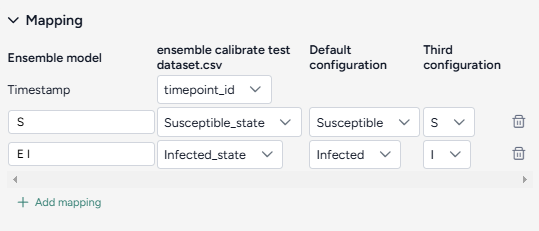

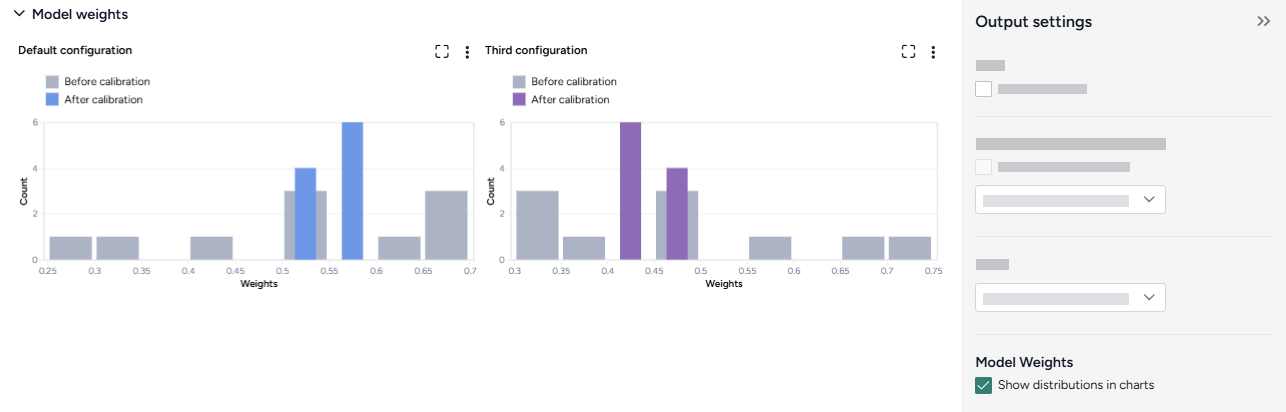

Calibrate an ensemble model

Use this template to create a more accurate model by combining multiple models in an ensemble. For example, you can determine how to:

- Leverage the strengths of each model to make the most accurate model possible.

Fill out the Calibrate an ensemble model template

To use the Calibrate an ensemble model template, select the following inputs and outputs:

-

Inputs

- A historical dataset

- Two or more models, each with their own model configurations

- A mapping of the timestamp values that the dataset and models share

- Additional mappings for each variable of interest that the dataset and models share

-

Outputs

- Simulation results each selected model configuration

- Calibrations against the historical for each of the selected model configurations

- Calibrated ensemble model based on each of the calibrations

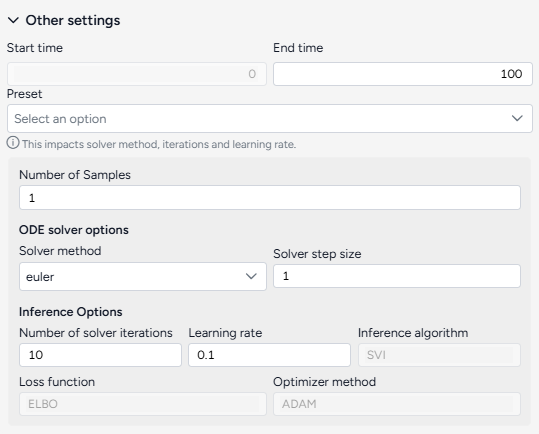

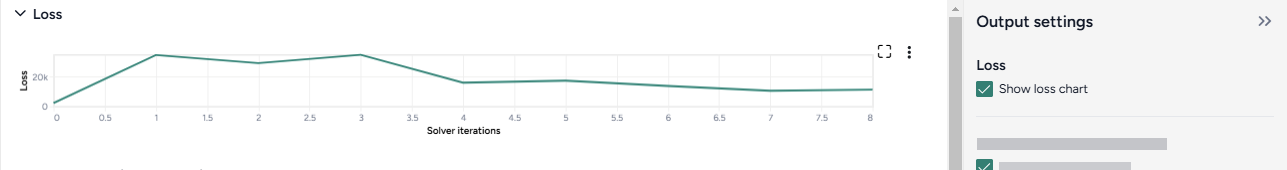

Complete the Calibrate an ensemble model workflow

The new workflow first simulates and calibrates each model individually, then calibrates the ensemble. To see the results, you first need to:

- Open and run each Simulate operator.

- Open and run each Calibrate operator.

- Open and run the Calibrate ensemble operator.

Add resources and operators to a workflow¶

Workflows consist of resources (models, datasets, and documents) that you can feed into a series of operators that transform or simulate them.

Each resource or operator is a "node" with a title, thumbnail preview, and a set of inputs and outputs.

Add a resource to the workflow

- Drag the model, dataset, or document in from the Resources panel.

Add an operator to the workflow

Perform one of the following actions:

- Right-click anywhere on the graph and then select an operation from the menu.

- Click Add component and then select the operation from the list.

Connect resources and operators¶

Inputs and outputs on nodes let you resources and operators together to form complex model operations.

Connect resources and operators already in the workflow

- Click the output of one resource or operator and then click the corresponding input on another operator.

Example

- To configure a Calibrate operation to use a dataset, first click the output on the right side of the Dataset operator and then click the Dataset input on the left side of the Calibrate operator.

Connect resources and operators to a new operator

- Hover over the output of the resource or operator, click Link , and then select an operator.

Remove a connection between resources and operators

- Hover over the input or output and click Unlink.

Operators with yellow headers

An operator with a yellow header indicates that a resource or indicator that flows into it has changed and the operator needs to be rerun.

Edit resource and operator details¶

Resources and operators in the workflow graph summarize the data and inputs/outputs that they represent. You can drill down to view more details or settings.

View resource or operator details

Perform one of the following actions:

- Click Open.

- Click > Open in new window.

Duplicate a resource or operator

- Click > Duplicate

Manage a workflow¶

To organize your workflow graph, you can move, rearrange, or remove any of the operators.

Save a workflow

Terarium automatically saves the state of your workflow as you make changes.

Rename a workflow

- Click > Rename, type a unique name for the workflow, and press Enter.

Move a workflow operator

- Click the title of the operator and drag it to another location on the graph.

Remove a workflow operator

- Click > Remove.

Zoom to fit workflow

You can quickly zoom the canvas to fit your whole workflow to the current window.

Note

In some cases, parts of your workflow may be just off screen after the zoom.

- Click Reset zoom.

Review and transform data ↵

Working with data¶

You can use uploaded datasets or simulation results to configure and calibrate models. If the data doesn't align with your intended analysis, you can transform it by:

- Creating new variables

- Calculating summary statistics

- Filtering data

- Joining datasets

The Transform data operator can also serve as a place to visually plot and compare multiple datasets or simulation results.

Note

For information about uploading datasets, see Gather modeling resources.

Dataset resource¶

A dataset resource can represent:

- A dataset you've uploaded.

- A dataset you've modified and saved.

- The output of a simulation or an optimized intervention policy.

In a workflow graph, a dataset resource lists the columns it contains. You can use it to:

- Open and explore the raw data.

- Run data transformations, model configurations, and model calibrations.

-

Inputs

- None

-

Outputs

- Dataset

Add a dataset resource to a workflow

- Drag the resource from the Datasets section of the Resources panel.

What can I do with a dataset resource?¶

Hover over the output of the Dataset resource and click link to use the dataset as an input to one of the following operators.

-

Data

- Transform dataset

Guide an AI assistant to modify or visualize the dataset. - Compare dataset

Compare the impacts of two or more interventions or rank interventions.

- Transform dataset

-

Configuration and intervention

- Configure model

Use the dataset to extract initial values and parameters for the condition you want to test. - Validate configuration

Use the dataset to validate a configuration.

- Configure model

-

Simulation

- Calibrate

Use the dataset to improve the performance of a model by updating the value of configuration parameters. - Calibrate ensemble

Fit a model composed of several other models to the dataset.

- Calibrate

Review and enrich a dataset¶

Once you have uploaded a dataset into your project, you can open it to:

- Explore and summarize its data and columns.

- Manually add metadata that explains the data in each column.

- Automatically enrich metadata using documents in your dataset or without additional context.

Review a dataset¶

To get an understanding of your data, you can open a dataset and review its columns and a selection of its rows. A dataset resource previews up to 100 rows of data.

Open a dataset

-

Perform one of the following actions:

- In the Resources panel, click the name of the dataset.

- On a Dataset node in the workflow graph, click Open.

View the raw data in a dataset

- Click Data in the navigation list on the right.

Download a dataset

- Next to the dataset name, click > Download.

Enrich a dataset¶

If your dataset lacks descriptive details about what each column contains, you can use Terarium's dataset enrichment capability to complete the:

- Units: What the column measures (dates, cases, people) or contains (text).

- Descriptions: A short plain language explanation of the column's contents.

- Concepts: Epidemiological concepts that relate to the data in the column. Helpful in mapping data to model variables.

- Distributions of values

Terarium's enrichment service uses an AI language model to generate column details based on either:

- Contextual clues in the contents of a document in your project.

- The column headers in the dataset. In this case, the language model attempts to define the columns as if they relate to a general epidemiological context.

Note

- Curating concepts improves structural comparison and alignment of models and data.

- If Terarium can't determine what a column represents, it fills out the description to summarize distribution of values it contains.

Enrich dataset metadata

- Click Enrich metadata.

-

Perform one of the following actions:

- To enrich metadata without selecting a document, click Generate information without context.

- To use a document, select the document title.

-

Click Enrich.

- Review the updated description and column information.

- Click Save.

Add or edit dataset metadata

- Edit the Name, Unit, Data type, Concept, or Description of any field.

- Click Save.

Transform a dataset¶

If a dataset doesn't align with your modeling goals, you can transform it by cleaning and modifying it or combining it with other datasets. Supported transformations include:

-

Manipulation

- Creating new variables.

- Filtering the data.

- Joining two or more datasets.

- Performing mathematical operations.

- Adding or dropping columns.

- Sorting the data.

- Handling missing values.

- Converting incidence data (such as daily new case counts) to prevalence data (total case counts at any given time).

-

Visualization and summarization

- Calculating summary statistics.

- Describing the dataset.

- Plotting the data.

- Answering specific questions about the data.

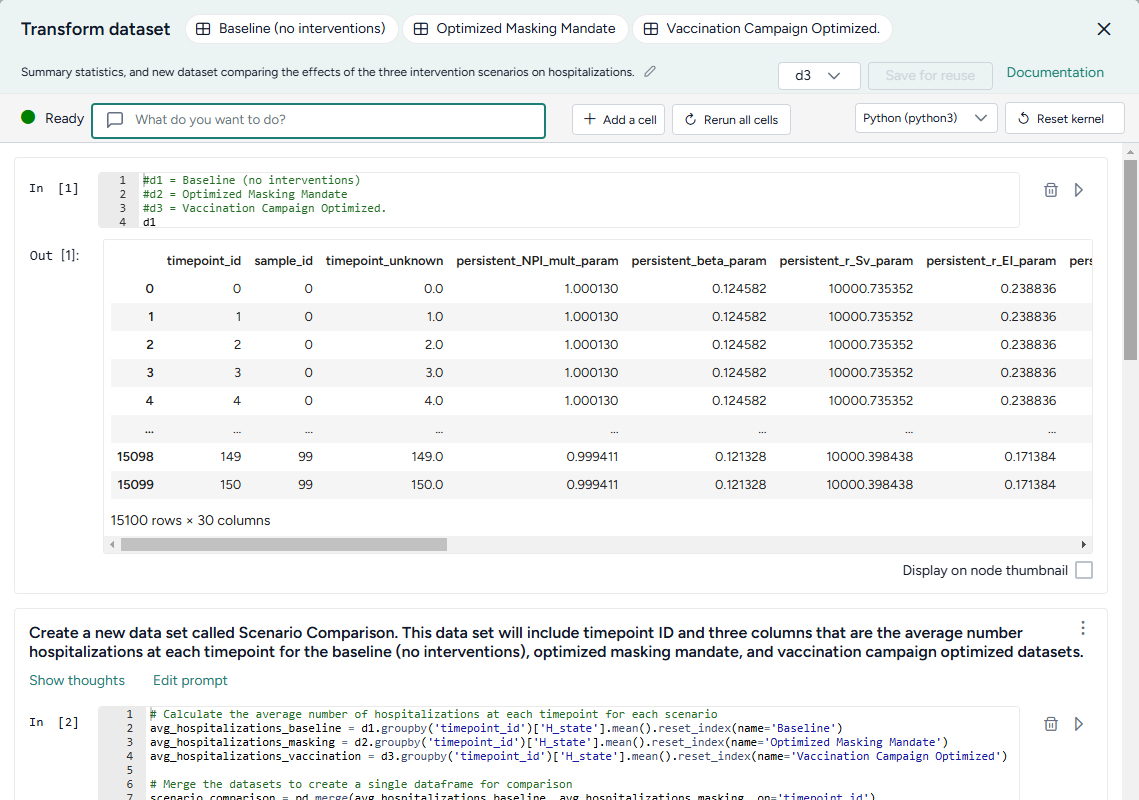

The Transform dataset operator is a code notebook with an interactive AI assistant. You describe in plain language the changes you want to make, and the large language model (LLM)-powered assistant automatically generates the code for you.

Note

The Transform dataset operator adapts to your level of coding experience. You can:

- Work exclusively by prompting the assistant with plain language.

- Edit and rerun any of the assistant-generated code.

- Enter your own executable code to make custom transformations.

Transform dataset operator¶

In a workflow, the Transform dataset operator takes one or more datasets or simulation results as inputs and outputs a transformed dataset.

Tip

For complex transformations with multiple steps, it can be helpful to chain multiple Transform dataset operators together. This allows you to:

- Keep each notebook short and readable.

- Isolate transformations that take a long time so you don't have to rerun them multiple times.

- Access intermediate results for testing or comparison.

You can choose any step in your transformation process as the thumbnail preview.

Add the Transform dataset operator to a workflow

-

Perform one of the following actions:

- On a resource or operator that outputs a dataset, hover over the output and click Link > Transform dataset.

- Right-click anywhere on the workflow graph, select Data > Transform dataset, and then connect the output of one or more Datasets or Simulations to the Transform dataset inputs.

Modify data in the Transform dataset code notebook¶

Inside the Transform dataset operator is a code notebook. In the notebook, you can prompt an AI assistant to answer questions about or modify your data. If you're comfortable writing code, you can edit anything the assistant creates or add your own custom code.

Prompts and responses are written to cells where you can preview, edit, and run code. Each cell builds on the previous ones, letting you gradually make complex changes and save the history of your work. You can insert prompts or cells at any point in the chain of transformations.

Tip

Wait until the status of the AI assistant is Ready (not Offline or Busy) before attempting to make any transformations.

Open the Transform dataset code notebook

- Make sure you've connected one or more datasets to the Transform dataset operator and then click Open.

Rerun a code notebook

When you reopen a Transform dataset notebook, the code environment is completely fresh. The initial datasets may be preloaded, but none of the transformations you previously made will be. To load them all:

- Click Rerun all cells.

Reset the kernel

From time to time, the AI assistant may get caught in a loop or stuck in a long-running transformation. To reset it:

Note

Resetting the kernel doesn't delete your prompts or the code cells in the notebook. You can still access and reload them at any time.

- Click Reset kernel.

- To reload the transformations in the notebook, click Rerun all cells.

Prompt the AI assistant to transform data¶

The Transform dataset AI assistant interprets plain language to answer questions about or transform your data.

Tip

The AI assistant can perform more than one command at a time.

Prompt or question the AI assistant

- Click in the text box at the top of the page and then perform one of the following actions:

- Select a suggested prompt and edit it to fit your dataset and the transformation you want to make.

- Enter a question or describe the transformation you want to make.

- Click Submit .

- Scroll down to the new code cell to inspect the transformation.

Choose where to insert a prompt

By default, new prompts and responses appear at the bottom of the notebook. To go back and insert intermediate steps in your transformation, you can change where your prompts appear. Note that if you do this, you will need to rerun any downstream transformations.

- Select the cell above where you want to insert the new prompt and response.

- Submit your new prompt.

How to write better prompts¶

The AI assistant generates better code when given specific instructions. Unintended actions or hallucinations are more likely to occur when instructions are vague. Describe what you want in steps, and clearly identify source datasets, columns, and actions.

The assistant often doesn't create previews or new datasets unless prompted. Include in your prompts whether you want to:

-

Preview your data:

Add a new column that keeps a running total of infections. Show me the first 10 rows. -

Create an intermediate dataset:

Create a new dataset named "result". The first column is named "fips" and its values are...

If you're not satisfied with a response, you can generate a new one or modify your prompt to refine what you'd like to see.

Tip

If the assistant doesn't produce the desired results, you can keep your transformation process well organized by adding more details to your prompt and then regenerating the responses. This ensures that any unnecessary results don't get saved in the notebook.

Change your prompt

- Click Edit prompt, change the text as needed, and then press Enter.

Get a new response to your prompt

- Click > Re-run answer.

How the AI assistant interprets prompts¶

To give you a sense whether it correctly interpreted your prompt, the assistant:

- Records its thoughts about your prompt (

I need to filter the dataset to only include rows with location equal to 'US'). - Shows how it intends to perform the transformation (

DatasetToolset.generate_python_code). - Presents commented code that explains what it's done.

When the response is complete, the code cell may also contain:

- A direct answer to your question.

- A preview of the transformed data.

- Any applicable error codes.

- Any requested visualizations.

Show or hide the assistant's thoughts about your prompt

- Click Show/Hide thoughts.

Add or edit code¶

At any time, you can edit the code generated by the AI assistant or enter your own custom code. The notebook environment supports the following languages, each extended with commonly used data manipulation and scientific operation libraries.

Note

The use of Julia is currently disabled.

-

Python libraries

- pandas for organizing, cleaning, and analyzing data tables and time series.

- numpy for handling of large arrays of numbers and performing mathematical operations.

- scipy for performing advanced scientific operations, including optimization, integration, and interpolation.

- pickle for saving and reloading complex data structures.

-

Julia libraries

- DataFrames for manipulating data tables.

- CSV for reading, writing, and processing CSV files.

- HTTP for sending and receiving data over the Internet.

- JSON3 for working with JSON data.

- DisplayAs for displaying data.

-

R libraries

- data.frame for manipulating data tables.

Tip

More libraries are available in the code notebook, but you may need to import them before use.

-

To list the available packages, click Add a cell and then enter and run:

pip listPkg.installed()installed.packages() -

To import a package, click Add a cell and then enter and run:

import <package_name>using <package_name>library(<package_name>)

Additional libraries that may be useful for data transformations

-

Data manipulation and analysis

-

Data visualization

- cartopy for creating maps and visualizing geographic data.

- matplotlib for creating static, animated, and interactive visualizations.

-

Machine learning

- scikit-learn for creating machine learning models.

- torch for building, training, and experimenting with machine learning models.

-

Image processing

- scikit-image for processing and analyzing images.

-

Graph and network analysis

- networkx for working with networks and graphs.

Change the language of the code notebook

The Transform dataset AI assistant writes Python code by default. You can switch between Python, R, or Julia code at any time.

- Use the language dropdown above the code cells.

Make changes to a transformation

- Directly edit the code in the In cell and then click Run .

Add your own custom code

- Scroll to the bottom of the window and click Add a cell.

- Enter your code in the In cell and then click Run .

Choose where to insert your custom code

By default, new code cells appear at the bottom of the notebook. You can add intermediate steps in your transformation by changing where your code cells appear. Note that if you do this, you will need to rerun any downstream transformations.

- Select the cell above where you want to insert the new code cell.

- Click Add a cell.

Save transformed data¶

At times in your transformation or whenever specifically prompted, the AI assistant creates new transformed datasets as the output for the Transform dataset operator. This lets you return to previous versions of your dataset or choose the best one to save and use in your workflow.

When you're done making changes, you can connect the chosen output to any operators in the same workflow that take datasets as an input.

To use a transformed dataset in other workflows, save it as a project resource.

Choose a different output for the Transform dataset operator

- Use the Select a dataframe dropdown.

Save a transformed dataset to your project resources

You can save your transformations as a new dataset at any time.

- (Optional) If you created multiple outputs during your transformations, Select a dataframe to save.

- Click Save for reuse, enter a unique name in the text box, and then click Save.

Preview a transformation on the Transform dataset operator in the workflow graph

- Select Display on node thumbnail.

Download a transformed dataset

- Save the transformation output as a new dataset.

- Close the Transform dataset code notebook.

- In the Resources panel, click the name of the new dataset.

- Click > Download.

Transformation examples¶

The following sections show examples of how to prompt the Transform dataset AI assistant to perform commonly used transformations.

Example prompts

Some simple prompts that can be used as part of larger transformation processes include:

Filter the data to just location = "US"Convert the date column to timestamps and plot the dataCreate a new census column that is a rolling sum of 'value' over the previous 10 daysAdd a new column that is the cumulative sum of the valuesPlot the dataRename column 'cases' to 'I', column 'hospitalizations' to 'H', and 'deaths' to 'E'

Clean a dataset

You can use the AI assistant to clean your dataset by specifying column types, reformatting dates, and performing other common data preparation tasks.

Specify the type of data in a column

Reformat a column of numeric IDs to, for example, add back leading zeroes that were stripped off:

Set the data type of the column "fips" to "string". Add leading zeros to the "fips" column to a length of 5 characters.

Reformat dates

Datasets with inconsistent date formats can interfere with accurate interpretation and integration into model parameters:

Set the data type of the column "t0" to datetime with format like YYYY-MM-DD hh:mm:ss UTC

Combine datasets

Before you combine datasets, make sure they share at least one common column like name, ID, date, or location. You can ask the AI assistant to link them by matching records based on the common data so that information aligns correctly.

- Connect the outputs of each dataset to the input of a Transform dataset operator and then click Open.

-

Ask the assistant to:

Join d1 and d2 where date, county, and state match. Save the result as a new dataset and show me the first 10 rows.Tip

You can also specify what type of join (such as inner join, left join, right join, or full outer join) you want the assistant to perform.

-

To save the dataset as a new resource in your project, change the dataframe and click Save for reuse.

Plot a dataset

You can visualize your data to explore patterns, compare quantities, identify relationships, analyze distributions, and capture insights tailored your analysis. Supported visualizations include:

- Line plots

- Bar charts

- Scatter plots

- Box plots

- Histograms

- Pie charts

- Heatmaps

- Violin plots

- Bubble charts

- Area charts

Tip

To refine your visualizations, edit your prompt to add more details about what you want to see (for example, add Insert a legend to a prompt that initially only requests a plot).

-

Ask the assistant to plot your data. For the best results, be as specific as possible about what you want to see:

plot the number of hospitalizations over the 150 days for the baseline, masking, and vaccination interventions. -

To refine the visualization, perform one of the following actions:

- Edit your prompt to add more information that explains the changes you want.

- Edit the generated code and then click Run .

-

(Optional) To share the image:

- Select Display on node thumbnail to use the image as the thumbnail on the Transform dataset operator in the workflow.

- Right-click the image, select Copy image, and then paste it into your project overview.

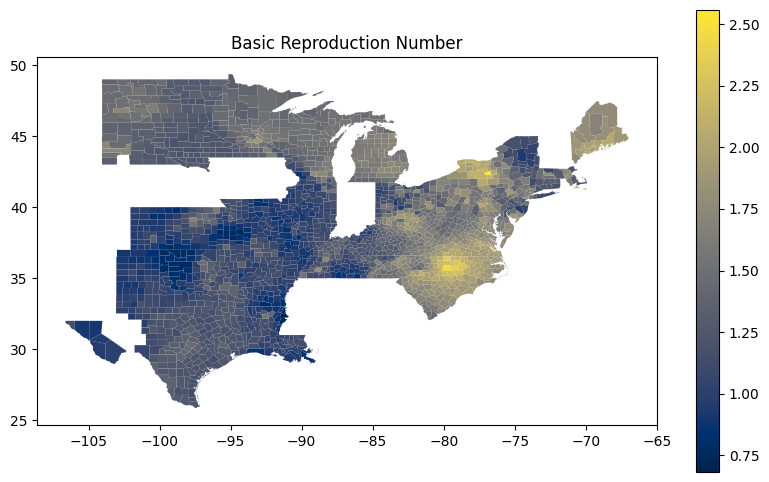

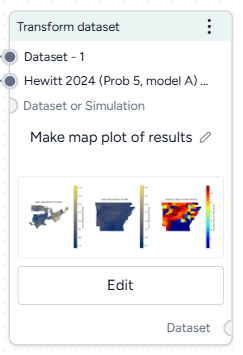

Create a map-based visualization

The AI assistant can connect to third-party code repositories and data visualization libraries to incorporate geolocation data and then create map plots.

These prompts ask the assistant to get U.S. county-level data from plotly and then use using matplotlib and geopandas handle and visualize geographic data structures.

Write me code that downloads the US counties geojson from plotly GitHub using urlopen

Use matplotlib to make a figure. Create a choropleth map from the column "Rl" in the geopandas dataframe "new_df_all". Use the "cividis" colormap. Add a legend.

Compare datasets

You can use the AI assistant to compare multiple datasets or simulation results.

See the Working with Data workflow in the Terarium Sample Project. It takes three datasets generated by optimizing intervention policies and then:

- Combines them into a new scenario comparison dataset.

- Calculates summary statistics for hospitalizations in each dataset.

- Identifies the timepoint at which the maximum number of hospitalizations occur in each dataset.

- In a separate data transformation, plots hospitalizations for each intervention over time.

Convert incidence data to prevalence data

If you have an epidemiological dataset that contains incidence data (such as new cases per day), you can prompt the AI assistant to convert it to prevalence data (such as total cases at any given time). You will need to specify:

- How long it takes people to recover.

- The susceptible population.

This prompt converts daily case counts into prevalence data. It uses user-supplied recovery and population data to calculate total cases:

let's assume avg time to recover is 14 days and time to exit hosp is 10 days. Can you convert this data into **prevalence** data? please map it to SIRHD. Assume a population of 150 million.

For more information on the logic of how the AI assistant converts from incidence to prevalence data, see the instructions the assistant follows in these cases.

Calculate peak times

Calculating peak times can help you identify critical periods of disease spread, enabling targeted interventions.

This prompt takes a collection of daily infection rates for various FIPs codes and identifies the peak time for each one:

Create a column named "peak_time". The first column is "fips". The second column is "peak_time", and its values are the values of the "timepoint" column for which the values of the FIPS columns are at a maximum.

Compare datasets¶

You can compare the impacts of two or more interventions or rank interventions using the Compare datasets operator.

Compare datasets operator¶

In a workflow, the Compare datasets operator takes two or more datasets or simulation results as inputs and plots them. It outputs a dataset comparison, which can be used as a dataset in other operators.

-

Inputs

Two or more datasets or simulation results

Tip

Use descriptive names for your datasets and simulation results. This will help you interpret the comparison.

-

Outputs

Dataset comparison

Add the Compare datasets operator to a workflow

-

Perform one of the following actions:

- On a resource or operator that outputs a dataset or simulation result, click Link > Compare datasets.

- Right-click anywhere on the workflow graph, select Data > Compare datasets, and then connect the output of two or more datasets or simulation results to the Compare datasets inputs.

Compare datasets¶

You can visually compare the impact of interventions or rank interventions based on multiple criteria.

Open the Compare datasets operator

- Make sure you've connected two or more datasets or simulation results to the Compare datasets operator and then click Open.

Compare the impact of interventions¶

You can assess how different interventions influence outcomes by directly comparing their effects on key variables.

Define the comparison¶

You can set up your dataset comparison by selecting a baseline and adjusting key options to align with your analysis goals.

Define the comparison

- Select Compare scenarios.

- (Optional) Specify which dataset is the baseline simulation.

- (Optional) Select Average treatment effect to include a summary of the overall impact of interventions in the resulting comparison tables.

Customize the comparison plot¶

You can tailor the resulting comparison plots to highlight the most relevant aspects of your interventions.

Customize the comparison plots

- Select the variables you want to plot.

-

Select how to plot the values. You can show:

- Raw values.

- Percent change with respect to the baseline.

- Difference from the baseline.

-

Select the data format to be displayed in the plot:

- Default (mean)

- Quantiles (specify upper and lower bounds).

Annotate charts¶

Adding annotations to charts helps highlight key insights and guide interpretation of data. You can create annotations manually or using AI assistance.

Add annotations that call out key values and timesteps

To highlight notable findings, you can manually add annotations that label plotted values at key timesteps.

- Click anywhere on the chart to add a callout.

- To add more callouts without clearing the first one, hold down Shift and click a new area of the chart.

Prompt an AI assistant to add chart annotations

You can prompt an AI assistant to automatically create annotations on the variables over time and comparison charts. Annotations are labelled or unlabelled lines that mark specific timestamps or peak values. Examples of AI-assisted annotations are listed below.

- Click Options .

-

Describe the annotations you want to add and press Enter.

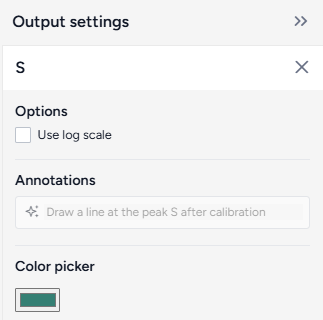

Draw a vertical line at day 100Draw a line at the peak S after calibrationDraw a horizontal line at the peak of default configuration Susceptible after calibration. Label it as "important"```{ .text .wrap } Draw a line at x = 40 only for ensemble after calibrationDraw a vertical line at x is 10. Don't add the label

Display options¶

You can customize the appearance of your charts to enhance readability and organization of the results.

Access additional chart settings

To access additional options for each chart:

- Click Options .

Change the chart scale

By default, charts are shown in linear scale. You can switch to log scale to view large ranges, exponential trends, and improve visibility of small variations.

- Select or clear Use log scale.

Hide in node

The variables you choose to plot appear in the results panel and as thumbnails on the Compare datasets operator in the workflow. You can hide the thumbnail preview to minimize the space the Compare datasets node takes up.

- Select Hide in node.

Change parameter colors

You can change the color of any variable to make your charts easier to read.

- Click the color picker and choose a new color from the palette or use the eye dropper to select a color shown on your screen.

Rank interventions¶

More info coming soon.

Ended: Review and transform data

Modeling ↵

Working with a model¶

A model is an abstract representation that approximates the behavior of a system. In Terarium, you can build a chain of complex operations to recreate, edit, configure, stratify, calibrate, and simulate models.

Note

For information about:

- Uploading models, see Gather modeling resources.

- Creating models, see Edit model.

Model resource¶

A model resource represents a model you've uploaded to or created in Terarium.

In a workflow graph, a model resource shows its underlying diagram or equations. You can use the resource to:

- Open, review, and enrich the model variables, parameters, observables, and transitions.

- Edit or stratify the model.

- Compare it to other models.

- Create model configurations or intervention policies.

-

Inputs

- None

-

Outputs

- Model

Add a model resource to a workflow

- Drag the resource from the Models section of the Resources panel.

Copy a model

- Add the Model operator to a workflow graph and connect it to an Edit model operator.

- Click Open on the Edit model operator.

- Click Save for re-use, enter a name for the copy, and click Save.

What can I do with a model resource?¶

Hover over the output of the model resource and click link to use the model as an input to one of the following operators.

-

Modeling

- Edit model: Add, remove, or change state variables, transitions, parameters, rate laws, and observables.

- Stratify model: Divide populations into subsets along demographic characteristics such as age and location.

- Compare models: Compare side-by-side with other models to understand their similarities and differences.

-

Configuration and intervention

- Configure model: Set the initial values and parameters for the condition you want to test

- Create intervention policy: Create static and dynamic interventions for "what-if" scenarios.

Review and enrich a model¶

Once you have uploaded or created a model in your project, you can open it to:

- Explore its diagram, equations, state variables, parameters, observables, and transitions.

- Manually add metadata that explains the model components.

- Automatically enrich metadata using documents in your dataset or without additional context.

Review a model¶

To get an understanding of a model, you can open a detailed view that summarizes the following extracted details:

- Description

- Diagram

- Model equations

- State variables

- Parameters

- Observables

- Transitions

- Time

Open a model

- Click the model name in the Resources panel.

Download a model

- Next to the model name, click > Download.

Rename a model

- Click > Rename, type a unique name for the model, and press Enter.

Enrich model metadata¶

If your model lacks descriptive details about its variables and parameters, you can use Terarium's model enrichment capability to complete the:

- Names: A meaningful label that describes what the variable or parameter stands for.

-

Units: What the variable or parameter measures (people, cases).

Note

Transitions don't have units.

-

Descriptions: A short plain language explanation of the variable or parameter's contents.

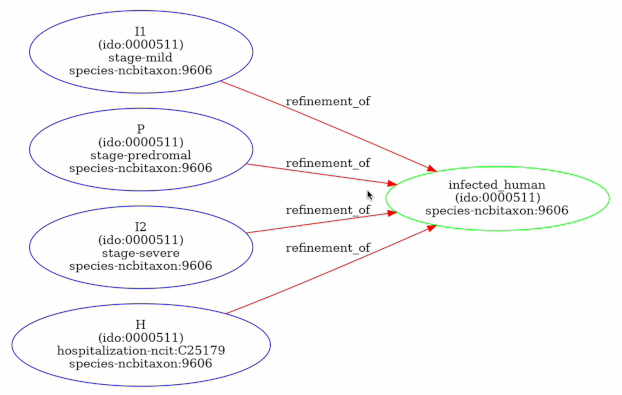

- Concepts: Epidemiological concepts related to the variable or parameter. Useful for comparing models and mapping variables and parameters to data columns.

Note

Enrichment can also provide geolocation information if, for example, your model is stratified by geographic areas such as states or territories.

Terarium's enrichment service uses an AI language model to automatically populate model metadata based on either:

- Contextual clues in the contents of a document in your project.

- The variable or parameter names in the model. In this case, the language model attempts to define the metadata as if they relate to a general epidemiological context.

Enrich model metadata

- Click Enrich metadata.

-

Perform one of the following actions:

- To enrich metadata without selecting a document, click Generate information without context.

- To use a document, select the document title.

-

Click Enrich.

- Review the updated metadata.

- Click Save.

Add or edit model metadata

- Edit the Name, Unit, Description, or Concept.

- Click Save.

Create a model from equations¶

The Create model from equations operator helps you to recreate a model from literature or build a new model from LaTeX equations . In this process, you:

- Choose or enter the equations you want to include in the model.

- Create the model as an output or resource for use in other modeling and configuration processes.

Note

When you upload a document to your project, Terarium automatically extracts any ordinary differential equations it contains and converts them to LaTeX. However, the extraction doesn't handle all the ways that equations can represent models. Before using any equations, check and edit them if needed.

Create model from equations operator¶

In a workflow, the Create model from equations operator takes an optional document as an input and outputs a new model. You can use the operator without any inputs by entering or uploading LaTeX equations that represent the model you want to create.

Once you have created a model, the operator in the workflow shows its underlying diagram or equations.

How it works: Model Service

-

Inputs

Document (optional)

-

Outputs

Add the Create model from equations operator to a workflow

-

Perform one of the following actions:

- Hover over the output of a Document and click Link > Create model from equations.

-

Right-click the workflow graph and select Modeling > Create models from equations.

If needed, connect the output of a Document to the Create model from equations input.

Choose the equations¶

You can create a model from a set of ordinary differential equations by:

- Selecting equations from a document in your project.

- Uploading and extracting equations from an image.

- Manually entering equations as LaTeX code.

To ensure the best results, Terarium uses a set of LaTeX formatting guidelines when converting extracted equations. It is recommended that you follow these guidelines for any LaTeX you add or edit as well.

Select equations from a document¶

To recreate a model from literature, you can select any ordinary differential equations extracted from an input document. Terarium represents each equation as LaTeX.

In some cases you may want to change the LaTeX either to correct or and new details. As you edit the LaTeX, the equation is automatically updated.

Select equations from a document

- In the workflow, make sure the document is connected to the operator input and then click Open.

- In the Input panel, review and select the equations you want to include in the model.

Edit equations extracted from a document

Note

When you modify equations extracted from a document, your changes are only saved to the current Create model from equations operator. If you reuse the document in another Create model from equations operator, you need to make the edits again.

- Click an equation to jump to where it's found in the document and reveal the converted LaTeX code.

- Edit the code as necessary and verify that the updated equation matches your edits.

- Select the check box next to the equation to include it in the model.

Extract equations from an image¶

In some cases, Terarium may not extract all the equations you want from a document. Or you may have equations from other sources that you want to bring into your project. In these cases, you can capture a screenshot of the equations and load them into Terarium for automatic extraction.

Extract equations from an image

- Take a screenshot of the equations you want to use or copy a saved image of the equations.

- Click inside the text box and paste your image. For example, right click and select Paste or press Ctrl+V.

- Click Add.

- Review the new equations. Click to reveal the LaTeX code and edit it if necessary.

Enter your own equations¶

In addition to selecting extracted equations, you can also paste or enter LaTeX code from elsewhere.

Manually enter equations

- Use the text box to add LaTeX equations to enter a new equation and click Add.

- Repeat step 1 for each equation you want to add.

Manually copy an equation from a document

If the automatic extraction missed an equation from your document, you can still copy it and add it separately.

- Select the text in the document viewer and then click Copy text.

- Paste the equation into the Input text box and edit as necessary.

- Click Add.

Recommended LaTeX format¶

The Create model from equations operator works with LaTeX equations. Before it creates a model, Terarium uses an AI assistant to "clean" your edited equations according to the following guidelines. You can follow these same guidelines yourself or enter equations as you normally would and then check the AI-cleaned equations for errors such as missing terms or duplicated parameters.

-

Derivatives¶

-

Write derivatives in Leibniz notation, not Newton or Lagrange notation.

Recommended:

\frac{d X}{d t}

Not recommended:\dot{X}

Not recommended:X^\primeorX' -

Represent partial derivatives of one-variable functions as ordinary derivatives.

Recommended:

\frac{d X}{d t}

Not recommended:\partial_t X

Not recommended:\frac{\partial X}{\partial t} -

Place first-order derivatives to the left of the equal sign.

-

-

Mathematical notations¶

- Avoid the use of:

- Capital sigma (

Σ) and pi (Π) notations for summation and product. - Non-ASCII characters.

- Homoglyphs (characters that look similar but have different meanings).

- Capital sigma (

-

To indicate multiplication, use

*.Recommended:

"b * S(t) * I(t)Not recommended:b S(t) I(t) -

Rewrite expressions with negative exponents as explicit fractions.

Recommended:

"\frac1{{ N }}Not recommended:N^1

- Avoid the use of:

-

Parentheses¶

- When grouping algebraic expressions, don't use square brackets

[ ], curly braces{ }, or angle brackets< >. Use parentheses( )if needed. -

Always expand expressions surrounded by parentheses using the order of mathematical operations.

Recommended:

\alpha * x(t) * y(t) + \beta * x(t) * z(t)Not recommended:x(t) (\alpha y(t) + \beta z(t))

- When grouping algebraic expressions, don't use square brackets

-

Variable and symbol usage¶

-

For variables that have time

tdependence, write the dependence explicitly as(t),Recommended:

X(t)

Not recommended:X -

For variables and names, avoid the use of words or multiple character.

- If needed, use camel case (

susceptiblePopulationSize) to combine multi-word or multi-character names. - Replace any variant form of Greek letters (

\varepsilon) with their main form (\epsilon) when representing a parameter or variable. - Don't separate equations by punctuation (commas, periods, or semicolons).

-

-

Superscripts and subscripts¶

- To denote indices, use LaTeX subscripts

_instead of superscripts and LaTeX superscripts^. - Use LaTeX subscripts

_instead of Unicode subscripts. Wrap all characters in the subscript in curly brackets{...}.

- To denote indices, use LaTeX subscripts

Create the model¶

Once you have selected the equations you want to use, you can create a new model as:

- An output you can connect to other operators in the same workflow.

- A project resource that you can use in any of your workflows.

- A downloadable JSON file you can use in external tools.

Note

Before it creates a model, Terarium uses an AI assistant to "clean" the selected equations according to the LaTeX formatting guidelines. When the model is ready, the Input panel shows the equations "Edited by AI" that appear in the model.

Create a new model from the selected equations

When you run the Create model from equations operator, the newly created model becomes an output you can connect to other operations in the same workflow.

-

Click Run.

Run options for creating models

Terarium supports two methods for creating models from equations:

- MIRA uses LLM assistance to standardize LaTeX equations , translate them to SymPy equations , and then create a Petri Net model.

- SKEMA uses regular expressions to rigidly parse LaTeX equations and create a Petri Net model.

MIRA is the default and recommended model. SKEMA can be used as a workaround when MIRA generates errors or inaccurate results. SKEMA is most reliable for equations with no parentheses, no production/degradation, and no complex rate law expressions.

-

Review the new equations. If you need to make changes, edit the equations in the Input panel and click Run again.

- If needed, use the Output panel to enrich the model metadata and then click Save.

Save the new model as a resource for use in other workflows

By default, the new model only appears as an output of the Create new model. You can make it available for use in other workflows by saving it as a project resource.

- In the Output panel, click Save for re-use and choose a name for the new model.

Download the new model

- Next to the model name, click > Download.

Edit a model¶

Model editing lets you build on existing models. Supported edits include:

- Answering questions about, adding, removing, or changing state variables, transitions, parameters, rate laws, and observables.

- Renaming model elements.

- Setting variable or parameter units.

- Replacing parameters with more complex formulas.

- Resetting the model to its original state.

The Edit model operator is a code notebook with an interactive AI assistant. You describe in plain language the changes you want to make, and the large language model (LLM)-powered assistant automatically generates the code for you.

Note

The Edit model operator adapts to your coding experience. You can:

- Use plain language to prompt an AI assistant for a no-code experience.

- Edit and rerun AI-generated code.

- Write own executable code to make custom edits.

Note

For more examples and information about model editing, see the MIRA training material.

Edit model operator¶

In a workflow, the Edit model operator takes a model or model configuration as an input and outputs an edited model.

Tip

For complex edits with multiple steps, it can be helpful to chain multiple Edit model operators together. This allows you to:

- Keep each notebook short and readable.

- Access intermediate results for testing or comparison.